Table of Contents

guest 2026-03-19 |

Sample Manager

Release Notes: Sample Manager

Release Notes: LabKey LIMS

Release Notes: Biologics LIMS

Sample Manager & LIMS Validated

Get Started with Sample Manager

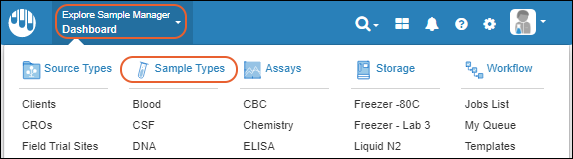

Sample Manager Dashboard

Folder Organization

Cross-Folder Actions

User Accounts, Groups, and Roles

My Account

Permission Roles

Manage Groups

Manage Notifications

Sample Manager - FAQ

Request Support

Contact Us

Explore Sample Manager

Ontology Concept Annotations

Help Links

Click to Explore

Samples

Create a Sample Type

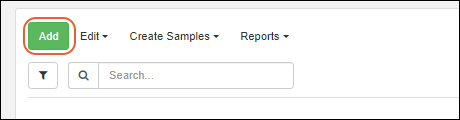

Add Samples

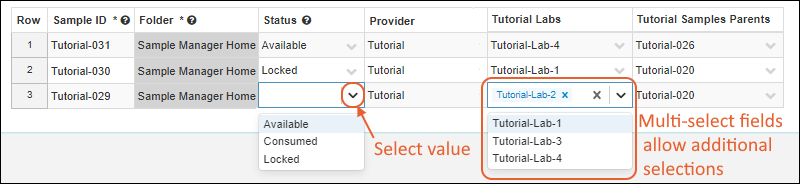

Create Samples from Grid

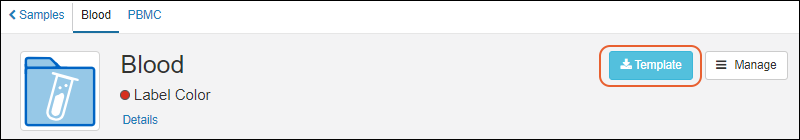

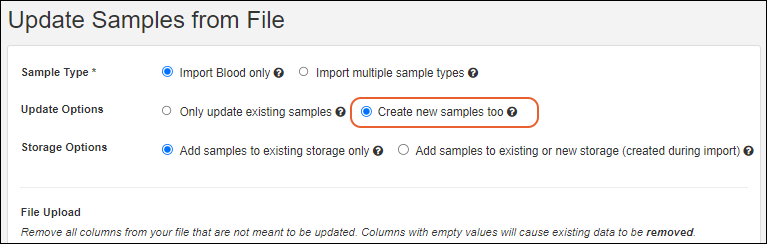

Import Samples from File

Sample ID Naming

Aliquot Naming Patterns

ID and Name Settings

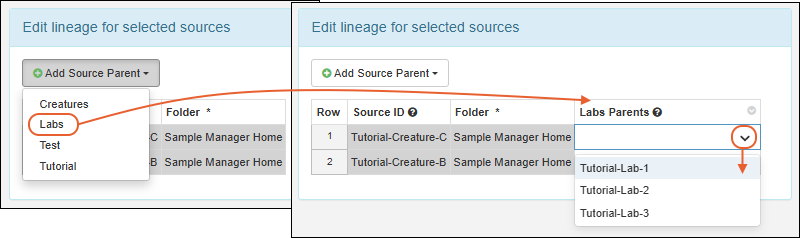

Sample Lineage / Parentage

Aliquots, Derivatives, and Sample Pooling

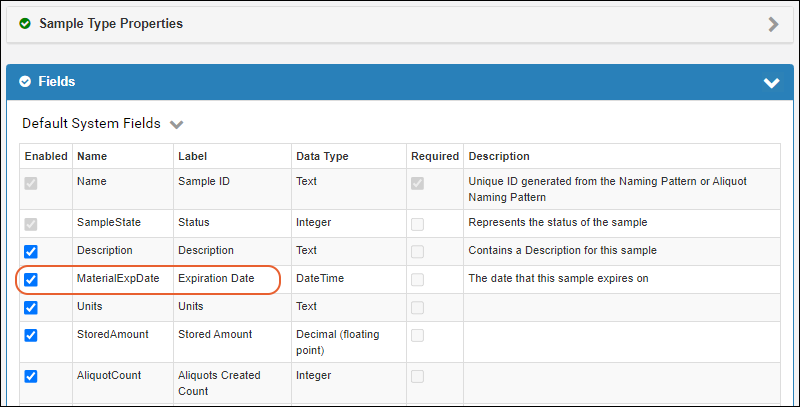

Sample Expiration

Sample Timeline

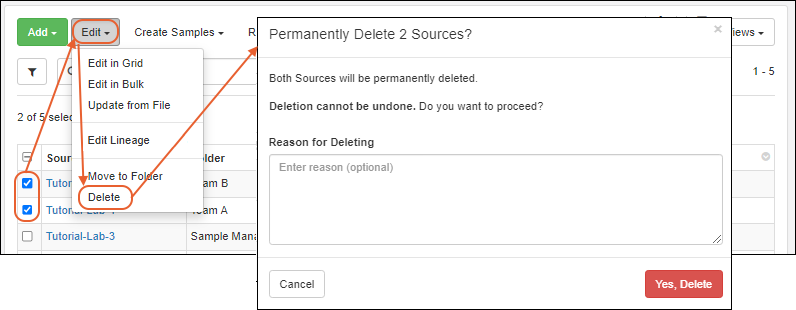

Manage Samples

Manage Sample Status

Edit Selected Samples

Sample Finder

Built-in Sample Reports

Sample Search

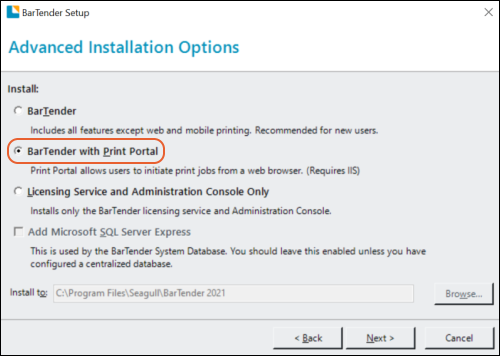

Print Labels with BarTender

BarTender Integration

Barcode Fields

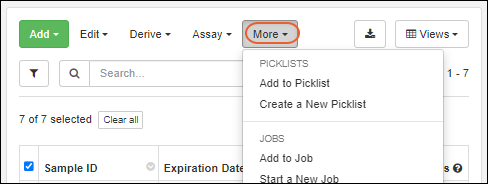

Sample Picklists

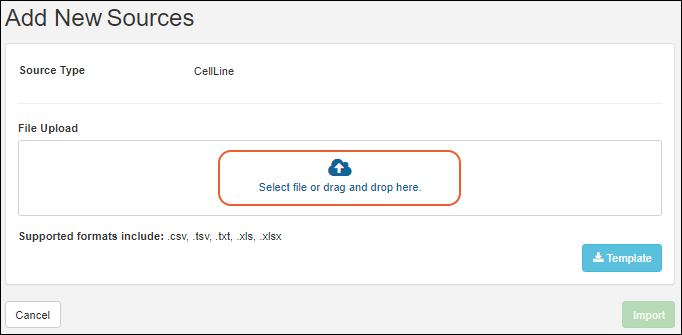

Sources

Create Sources

Associate Samples with Sources

Manage Sources

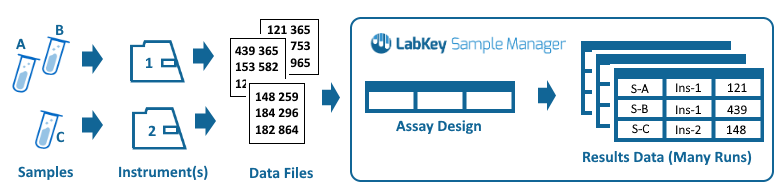

Experiment / Assay Data

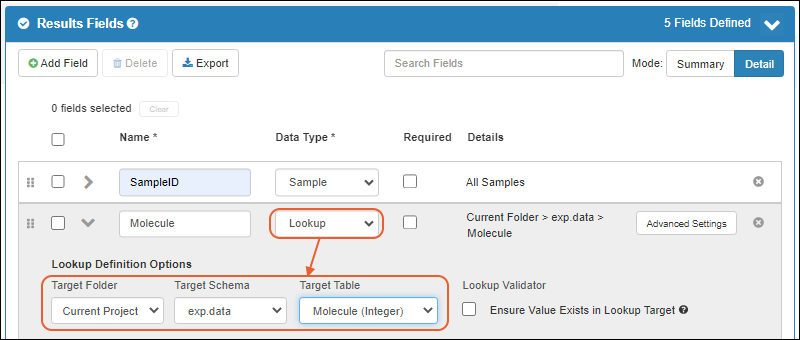

Describe Assay Data Structure

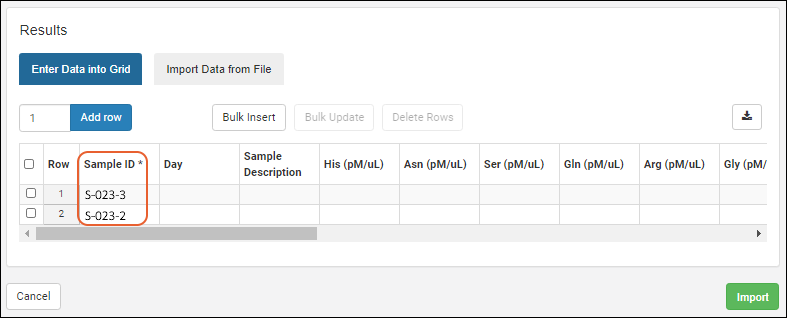

Import Assay Data

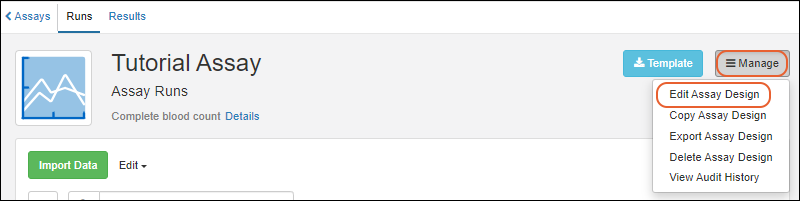

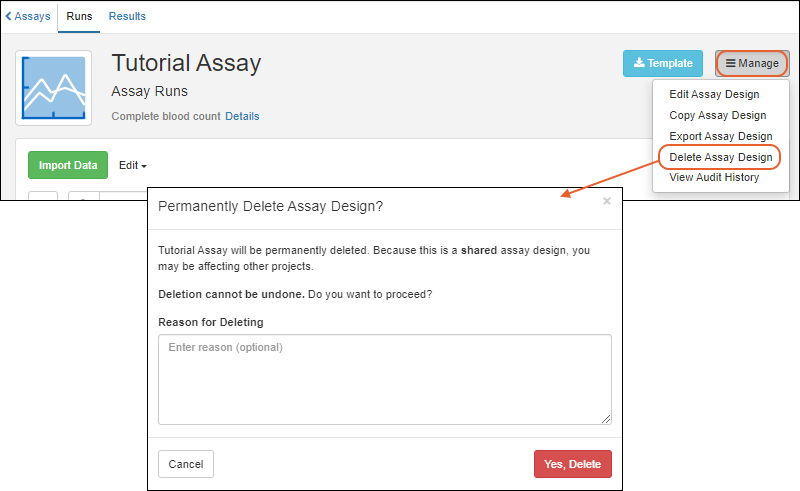

Manage Assay Designs

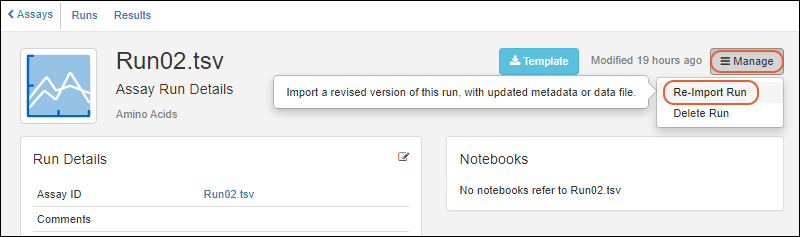

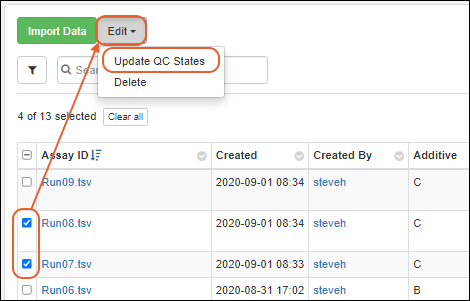

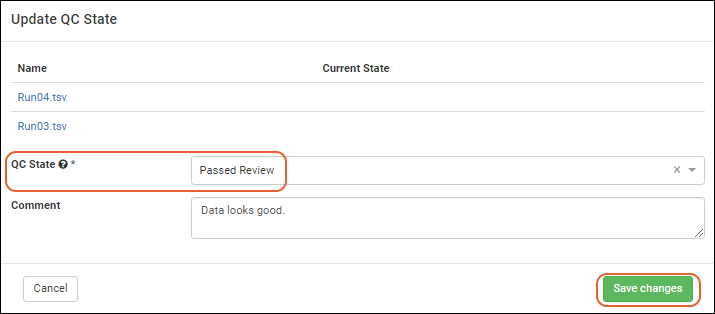

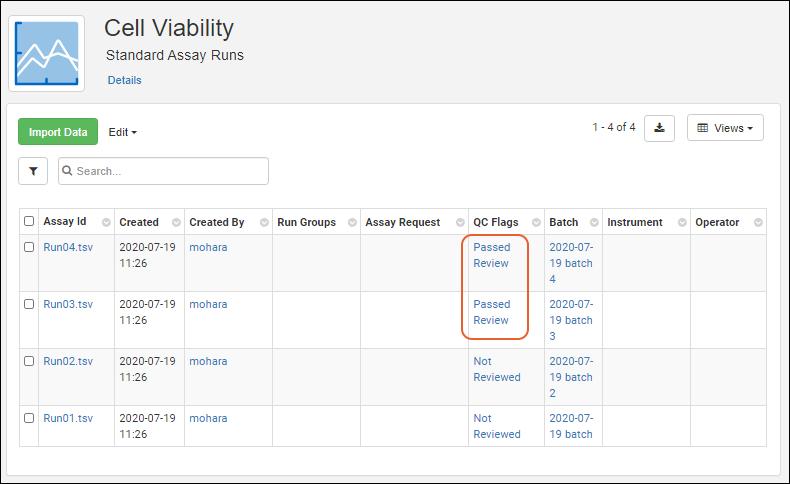

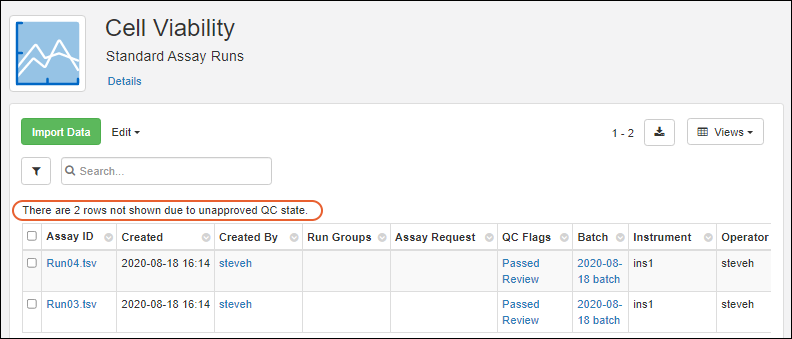

Manage Assay Data

Storage Management

Create Storage

Store Samples

Manage Storage

Manage Storage Unit Types

View Storage Details

Edit Storage Definition

Manage Storage Locations

Move Stored Samples

Manage Stored Samples

Storage Roles

Check Out and Check In

View Storage Activity

Migrate Storage Data into LabKey

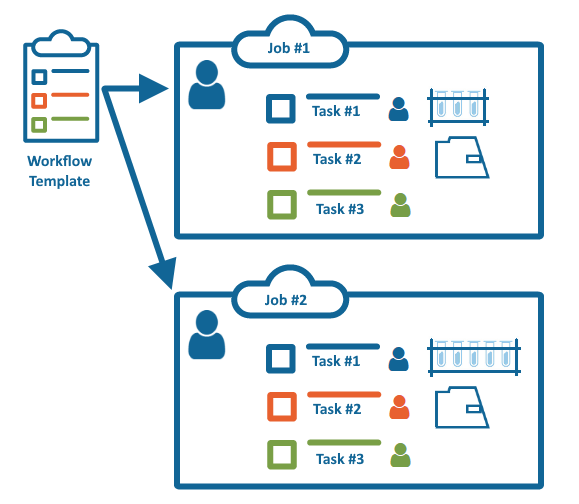

Workflow

Start a New Job

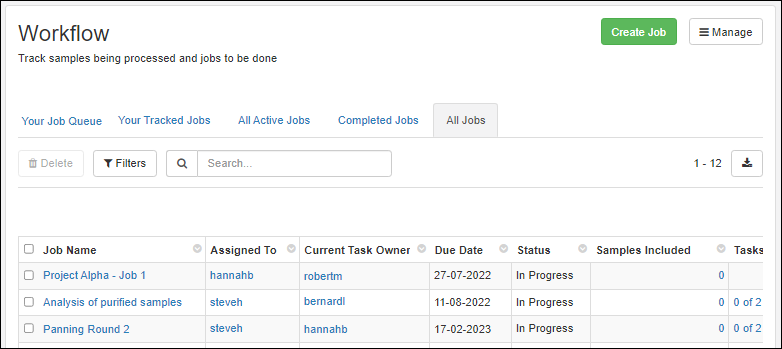

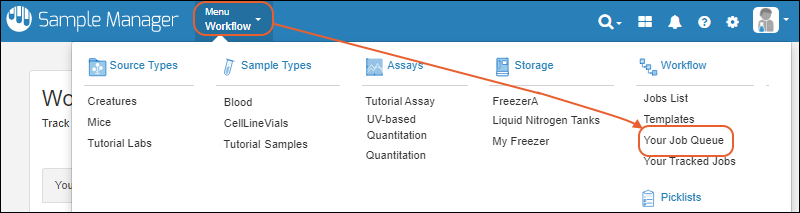

Manage Job Queue

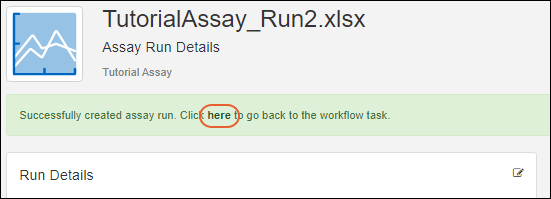

Complete Tasks

Edit Jobs and Tasks

Create a Job Template

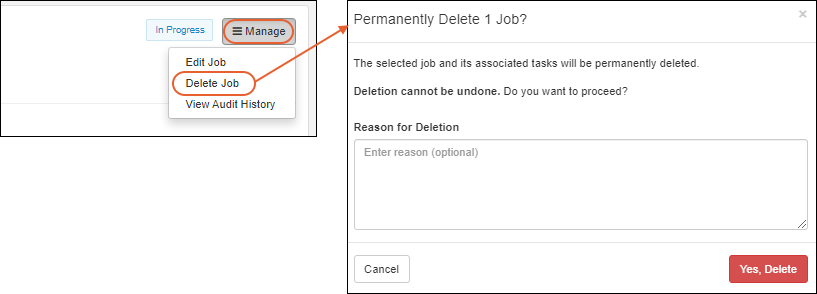

Manage Jobs and Templates

Electronic Lab Notebooks (ELN)

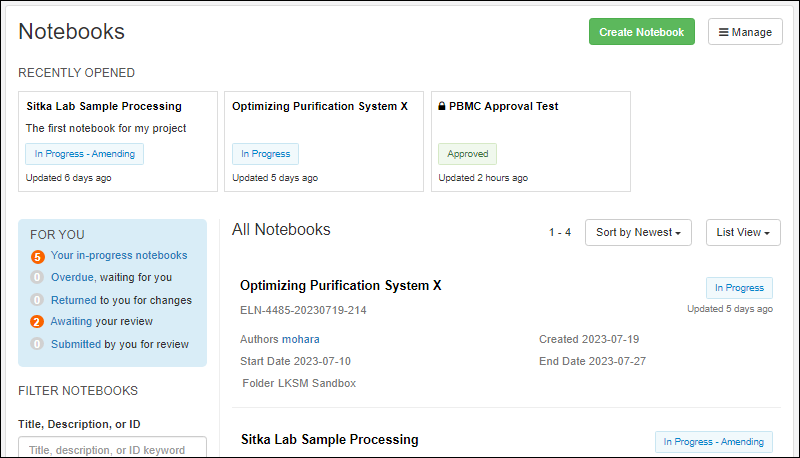

Notebook Dashboard

Author a Notebook

Notebook References

Notebook ID Generation

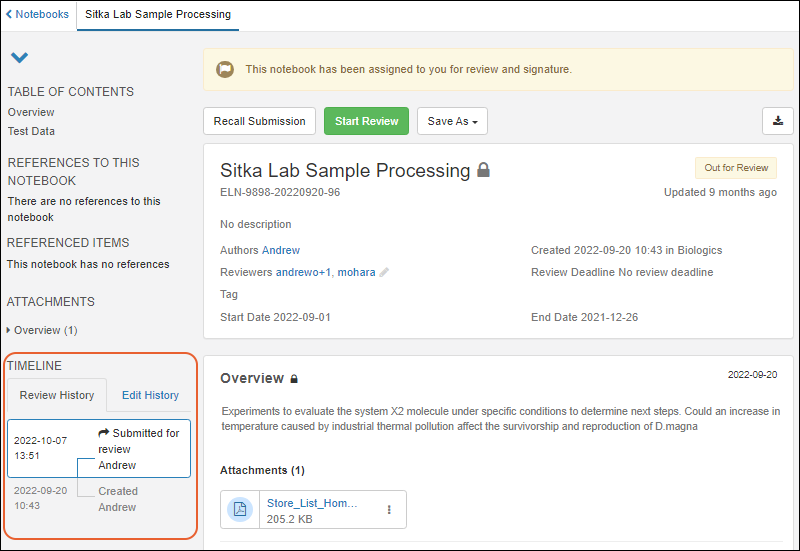

Notebook Review

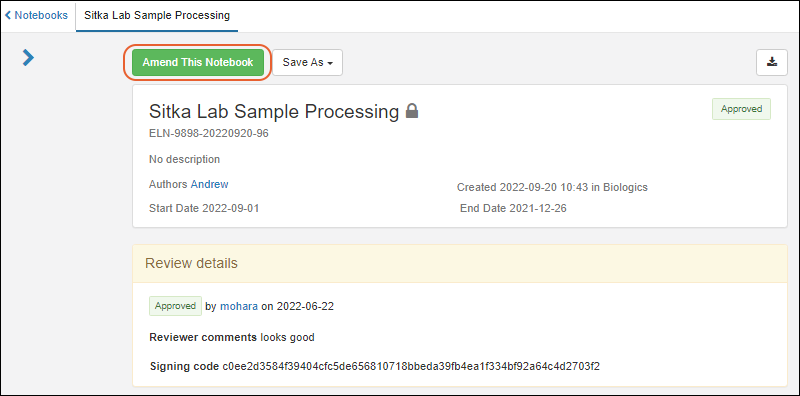

Notebook Amendments

Notebook Templates

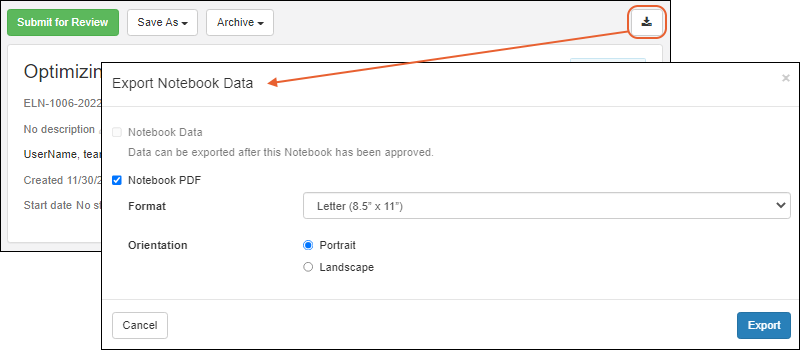

Export Notebook

Configure Puppeteer

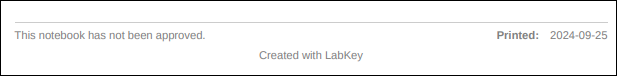

Troubleshoot ELN Images (Guide)

Troubleshooting ELN Images

ELN: Frequently Asked Questions

Data Resources

Use Sample Manager with LabKey Server

Use Sample Manager with Studies

Data Grid Basics

Data Import Guidelines

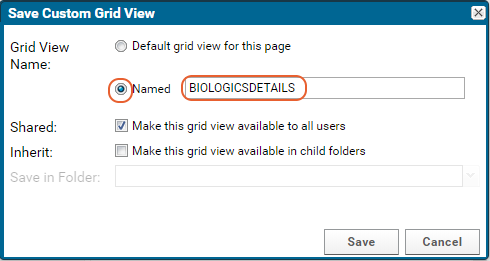

Custom Grid Views

Identifying Fields

Audit History

Search

Field Editor

Field Properties Reference

Attach Images and Other Files

URL Field Property

Date, Time, and Number Formats

String Expression Format Functions

LabKey SQL Syntax

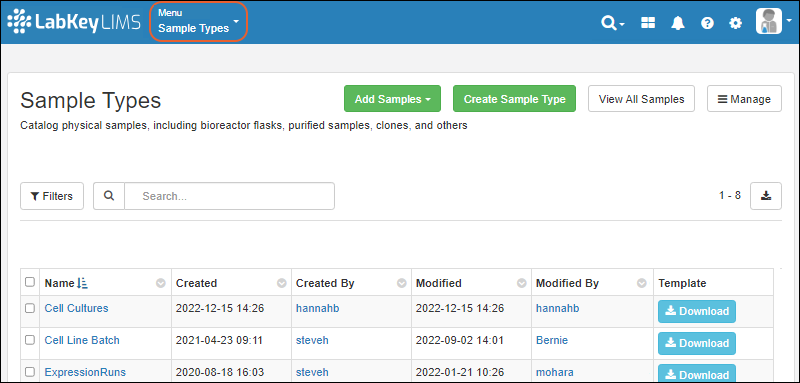

LabKey LIMS

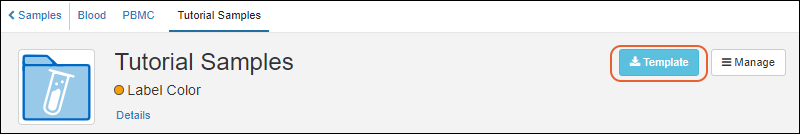

LIMS: Downloadable Templates

LIMS: Samples

Print Labels with BarTender

LIMS: Assay Data

LIMS: Charts

LIMS: Storage Management

LIMS: Workflow

Biologics LIMS

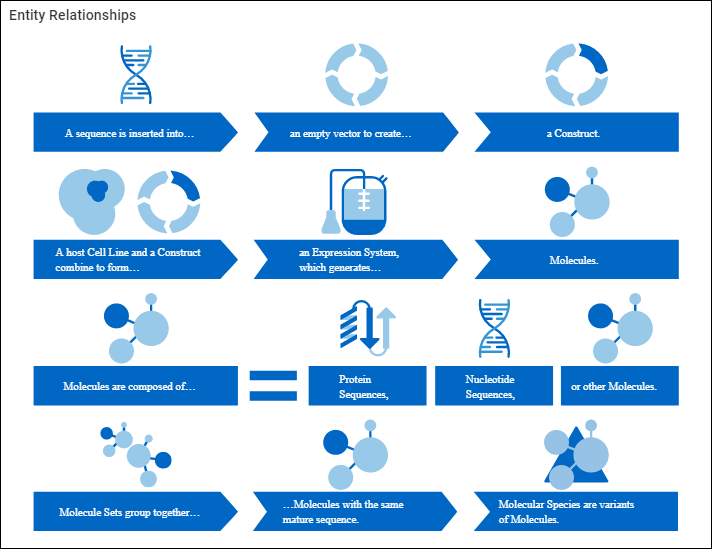

Introduction to LabKey Biologics

Release Notes: Biologics

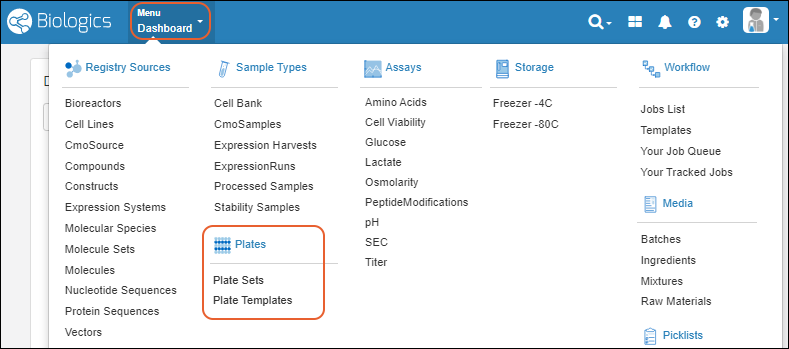

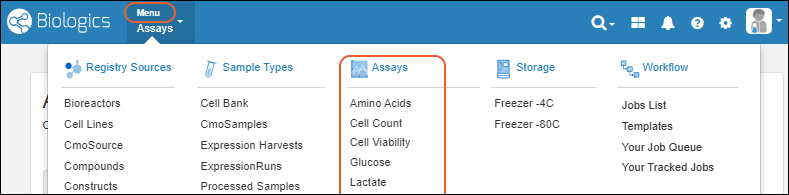

Biologics: Navigate

Biologics: Projects and Folders

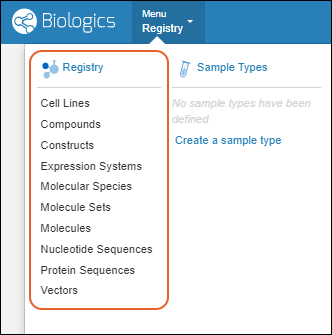

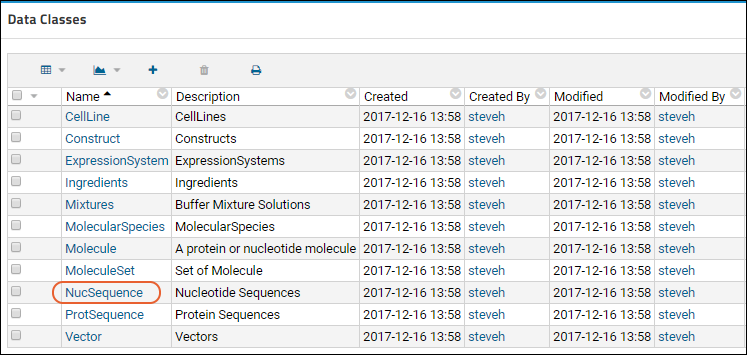

Biologics: Bioregistry

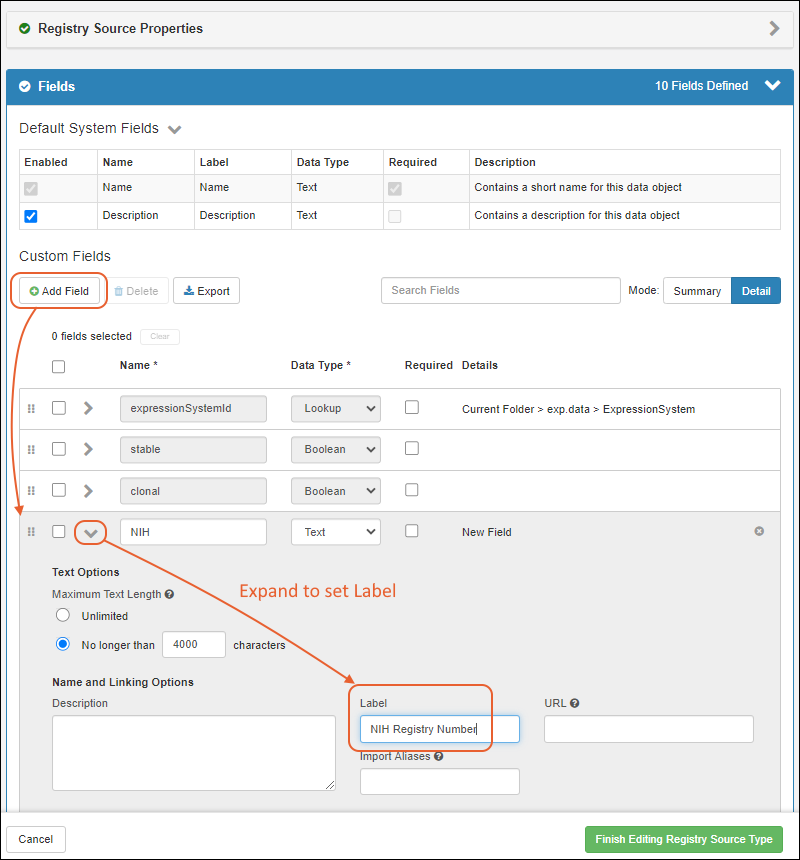

Create Registry Sources

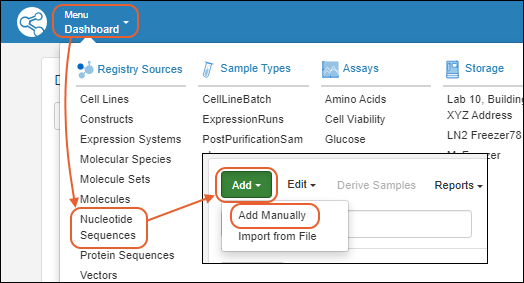

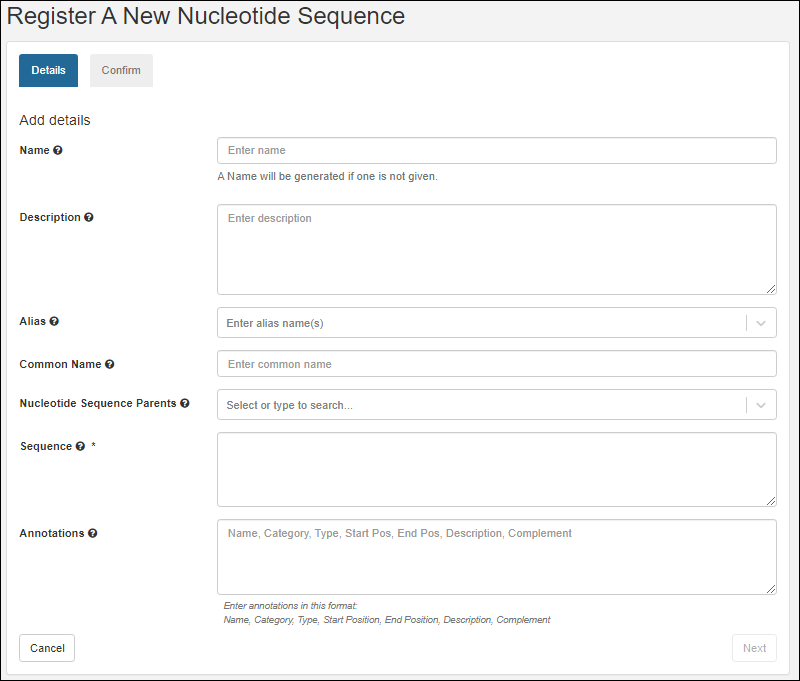

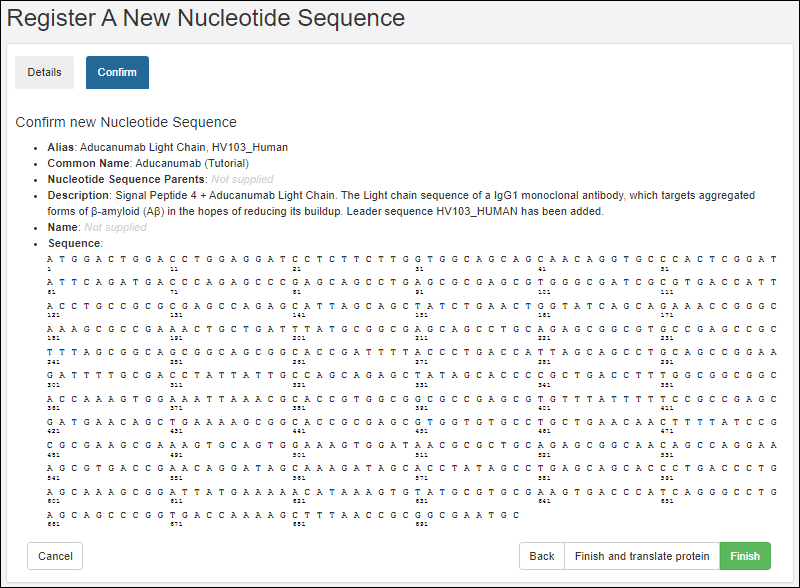

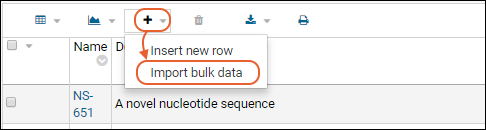

Register Nucleotide Sequences

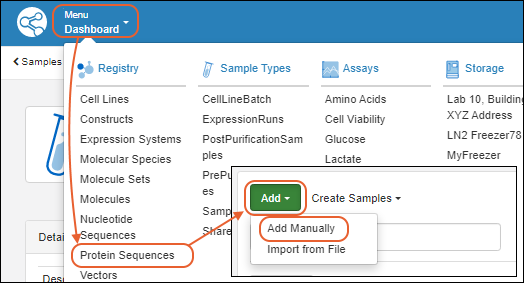

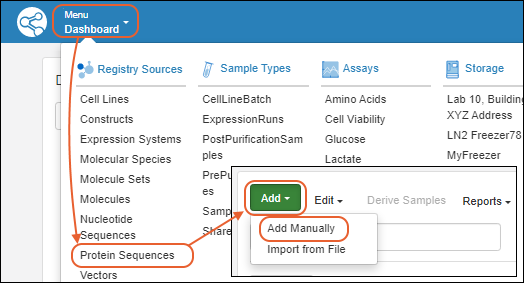

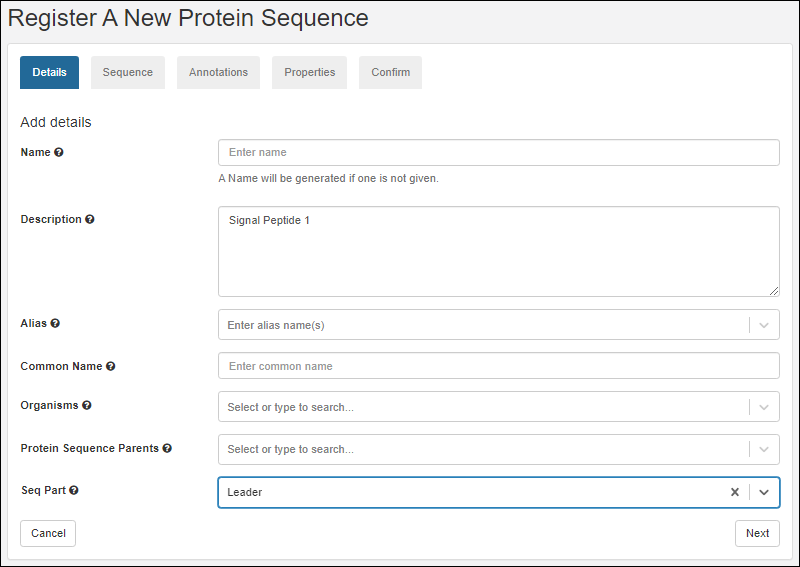

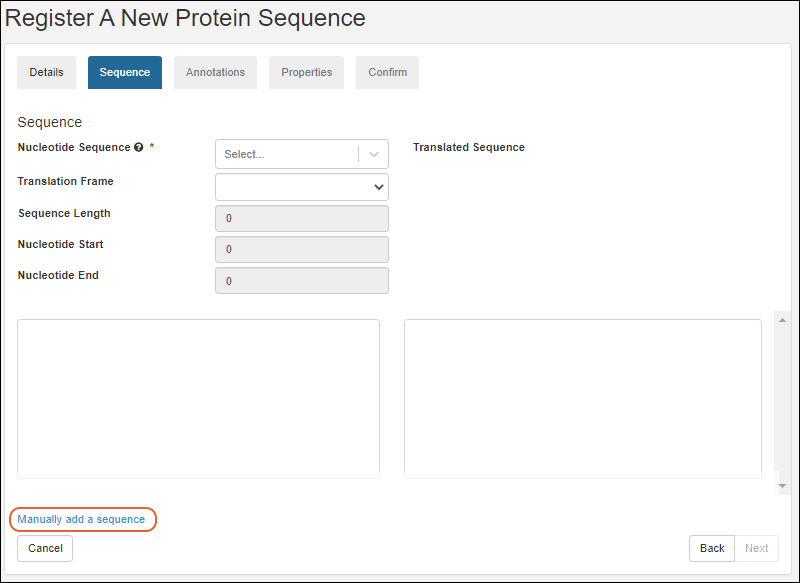

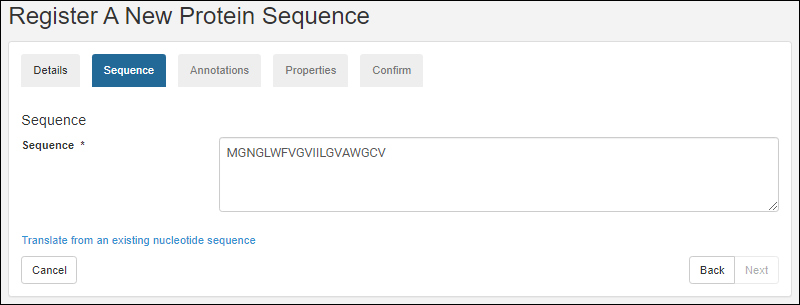

Register Protein Sequences

Register Leaders, Linkers, and Tags

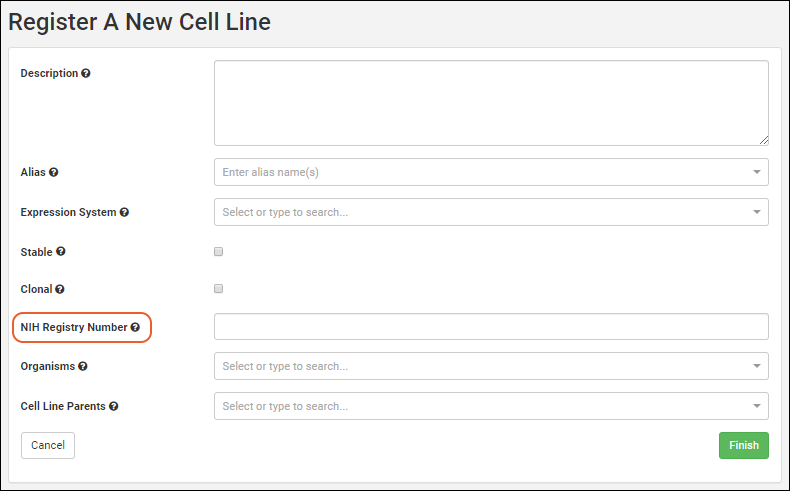

Vectors, Constructs, Cell Lines, and Expression Systems

Registry Reclassification

Biologics: Terminology

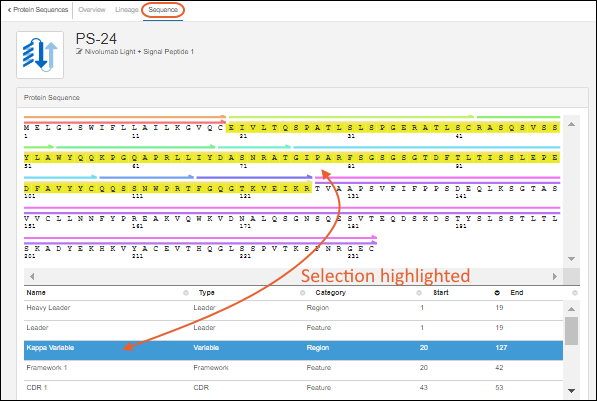

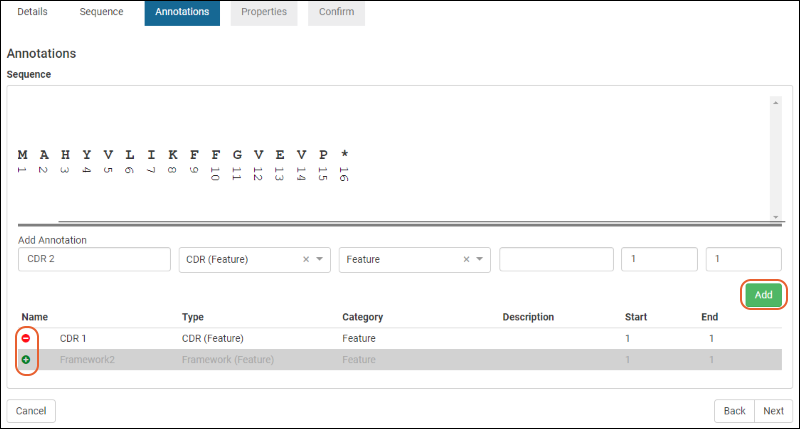

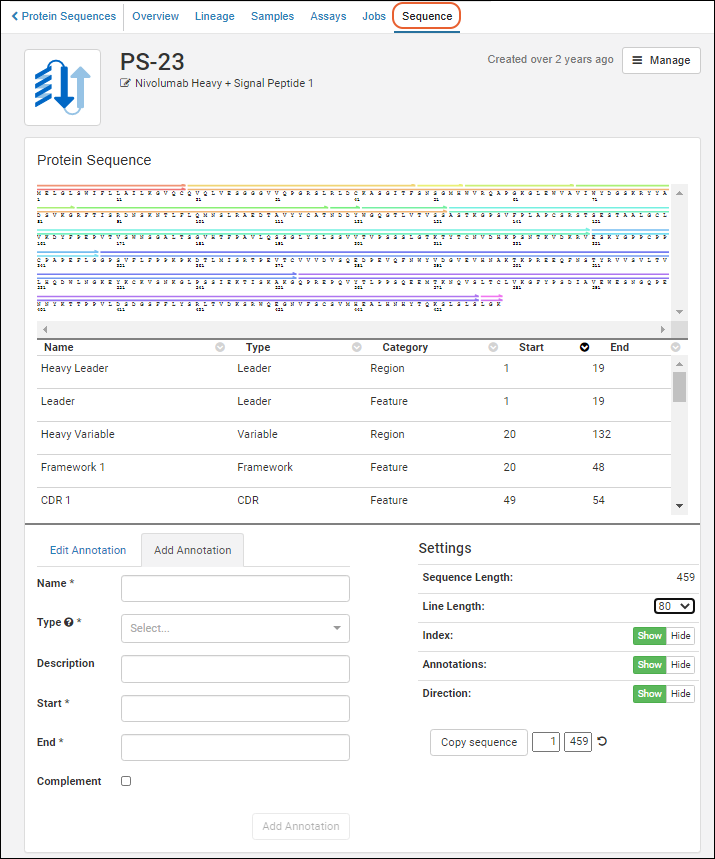

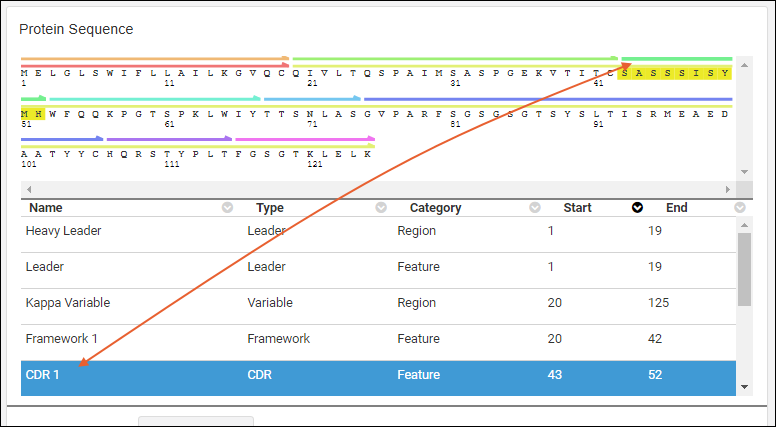

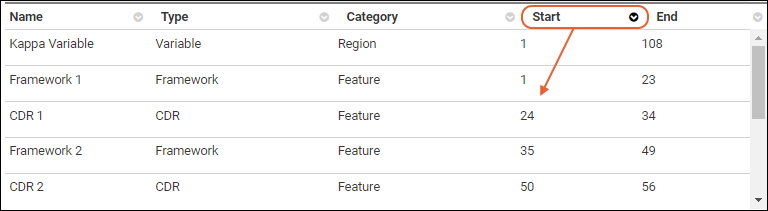

Protein Sequence Annotations

CoreAb Sequence Classification

Biologics: Chain and Structure Formats

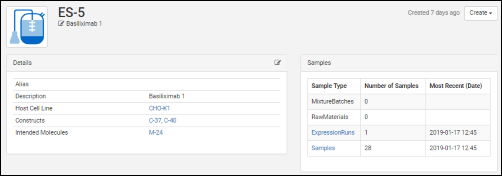

Molecules, Sets, and Molecular Species

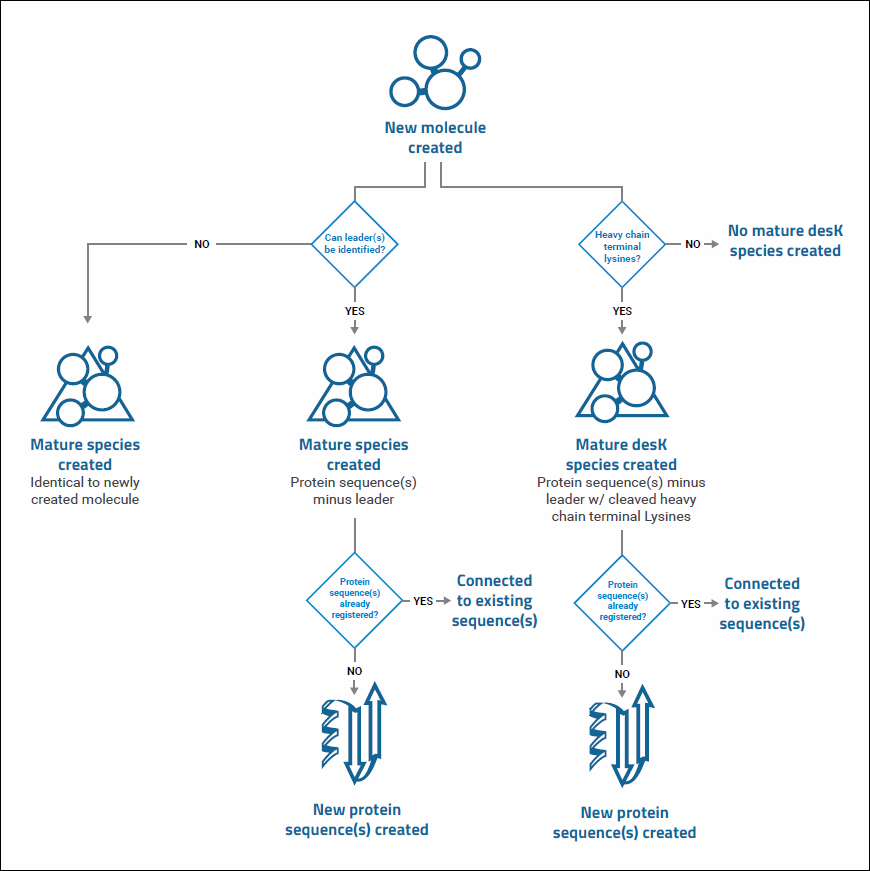

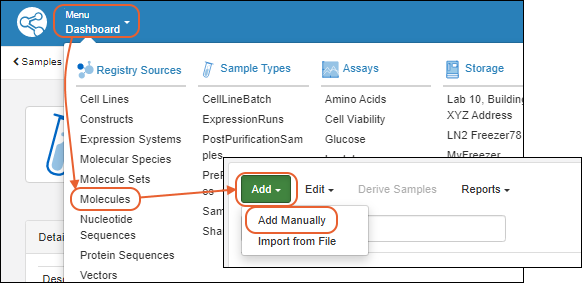

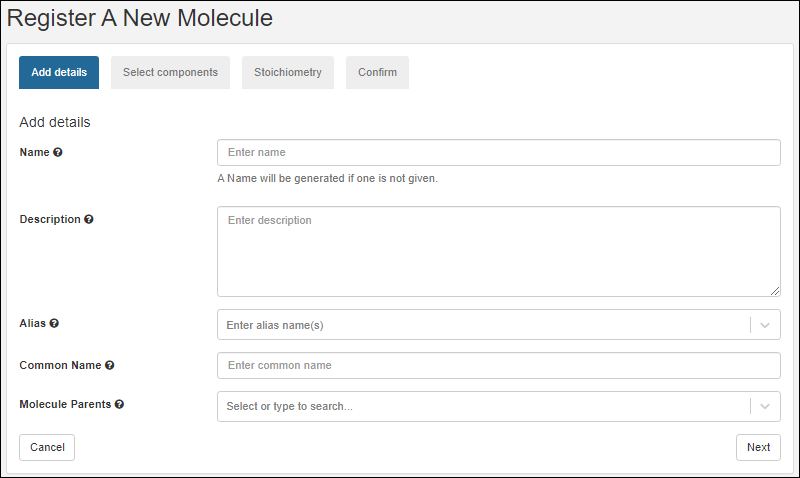

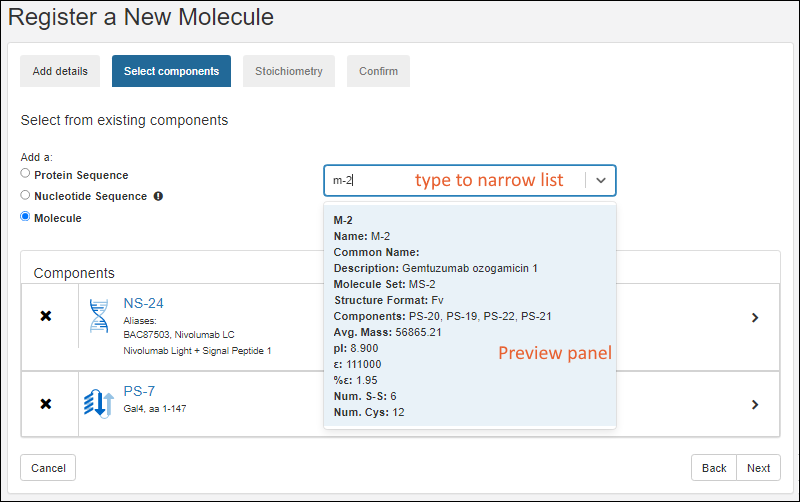

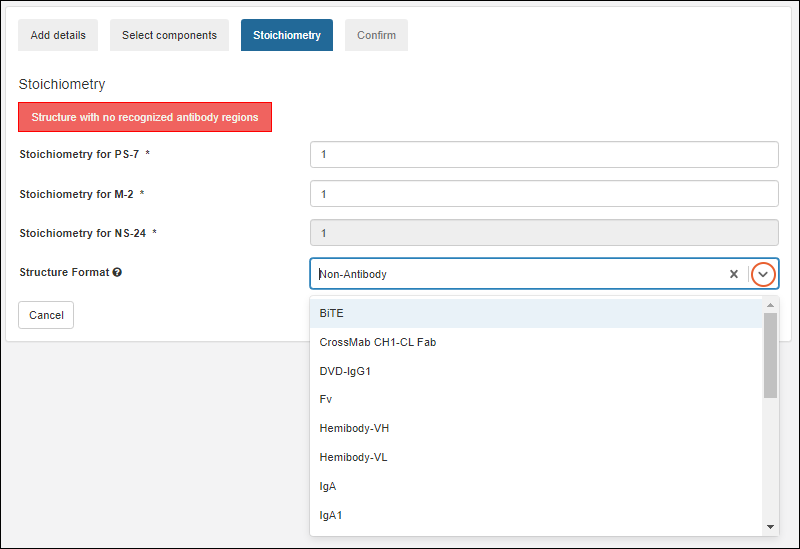

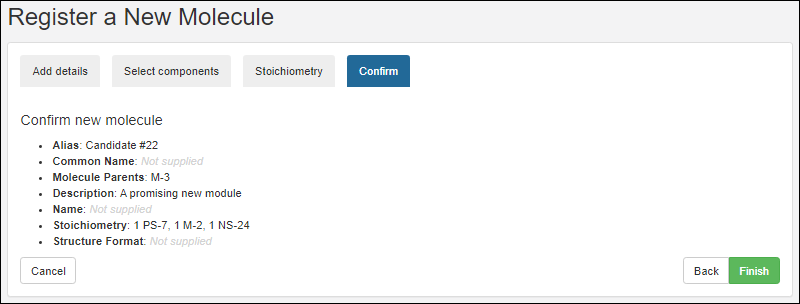

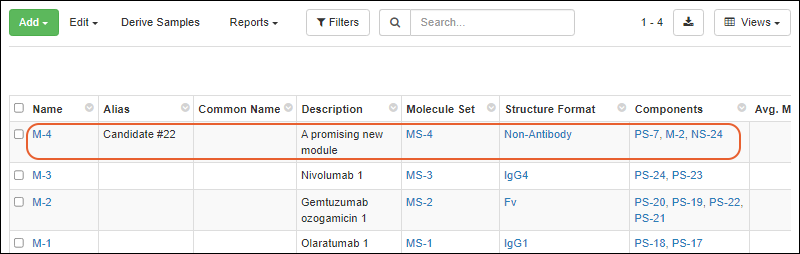

Register Molecules

Molecular Physical Property Calculator

Compounds and SMILES Lookups

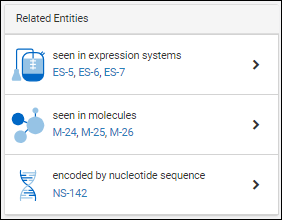

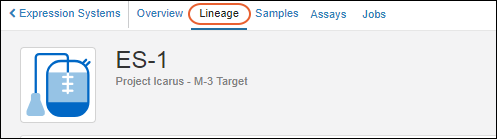

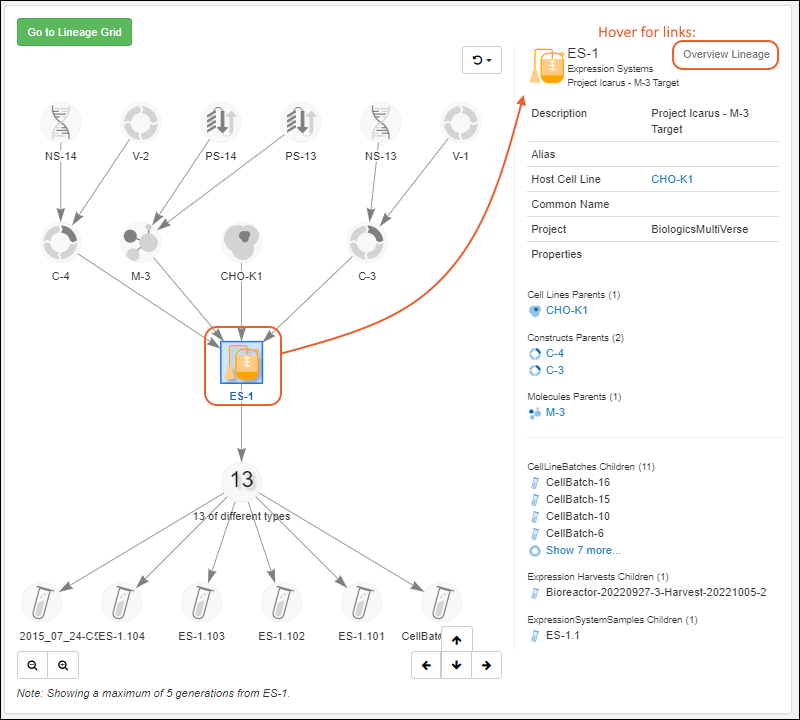

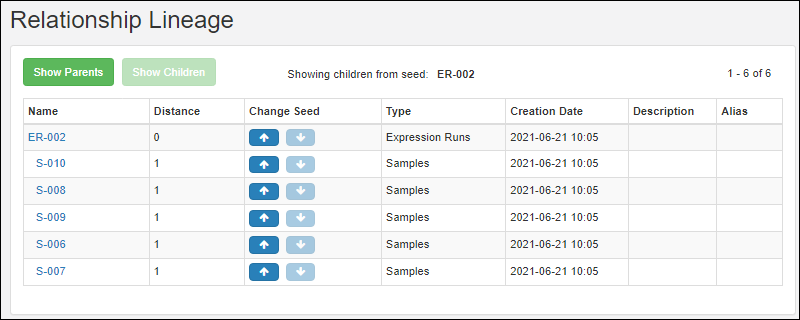

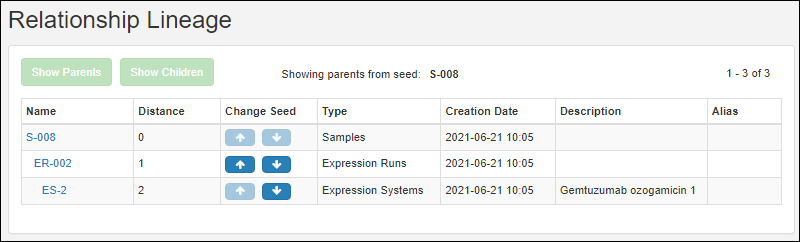

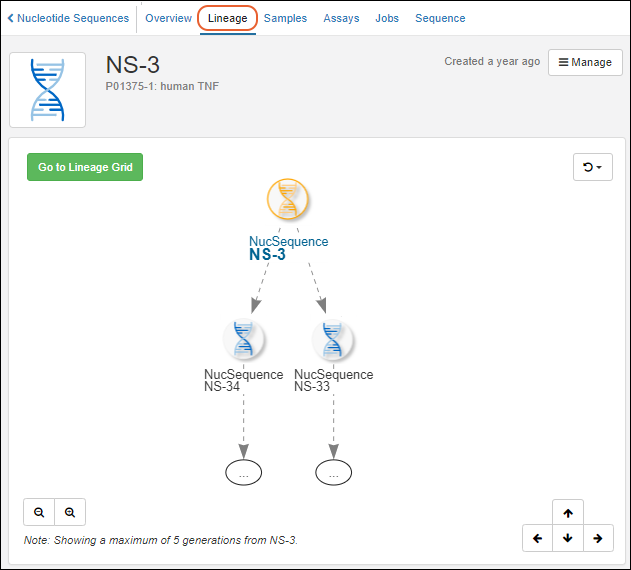

Entity Lineage

Customize the Bioregistry

Bulk Registration of Entities

Use the Registry API

Biologics: Plates

Biologics: Assay Data

Biologics: Specialty Assays

Biologics: Assay Integration

Biologics: Upload Assay Data

Biologics: Assay Batches and QC

Biologics: Media Registration

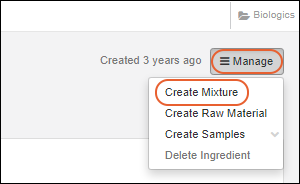

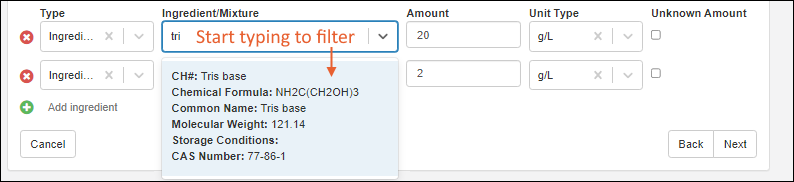

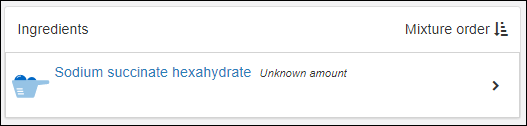

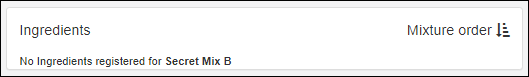

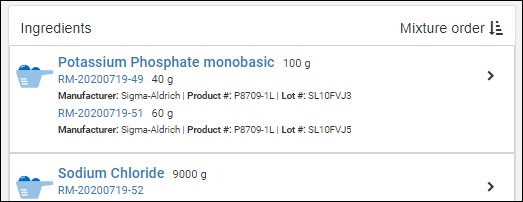

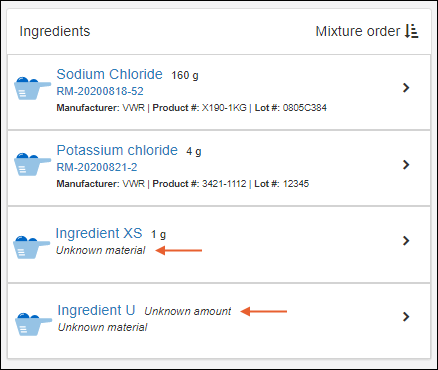

Managing Ingredients and Raw Materials

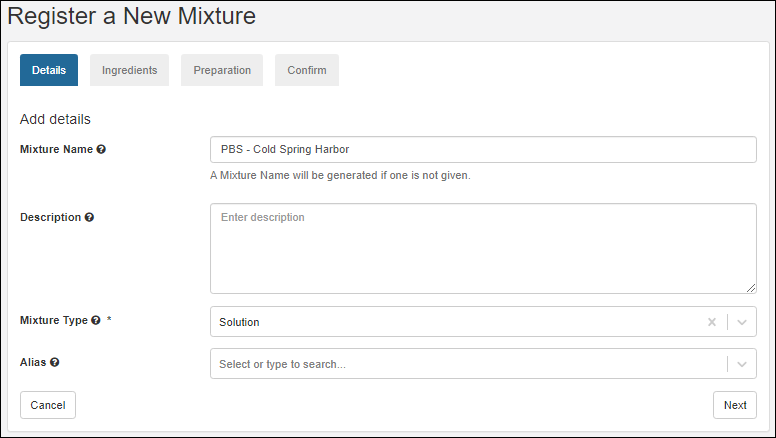

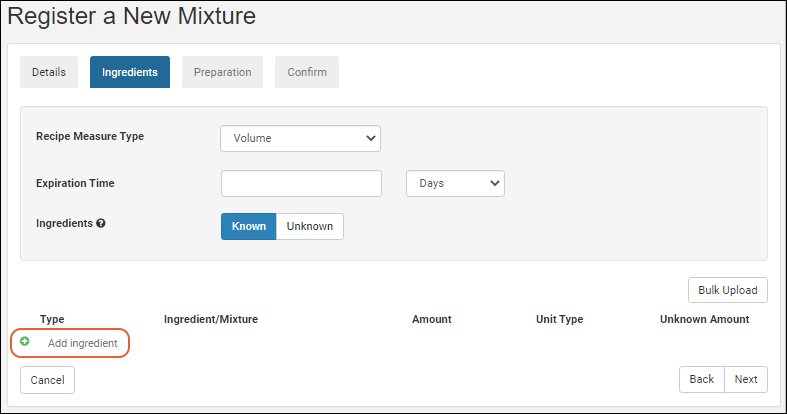

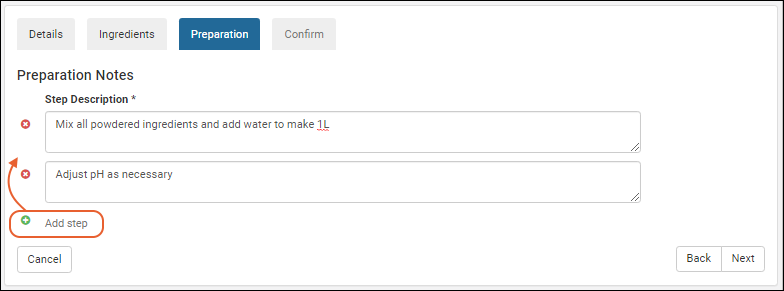

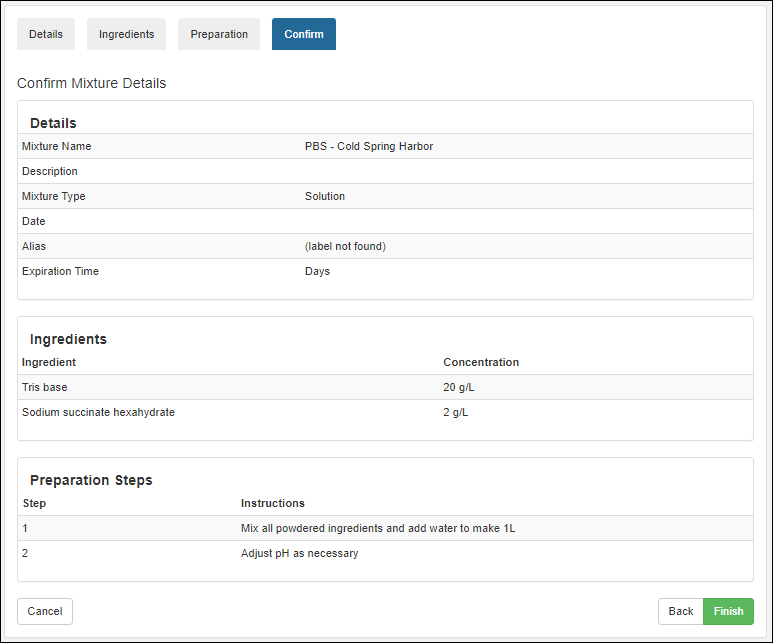

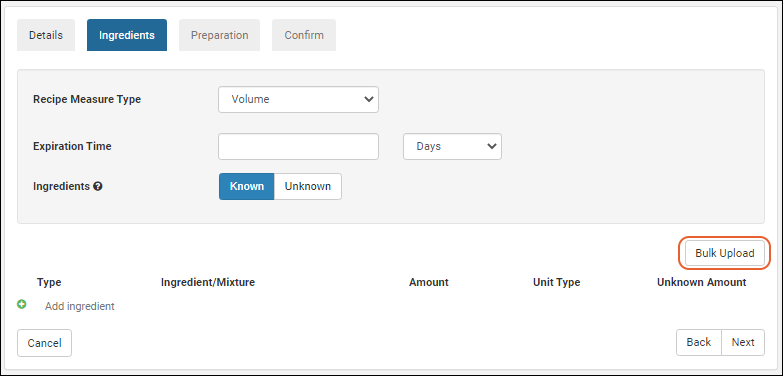

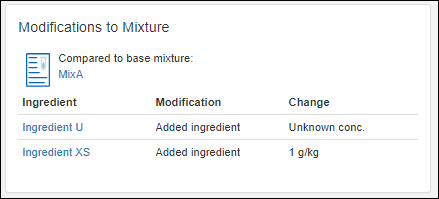

Registering Mixtures (Recipes)

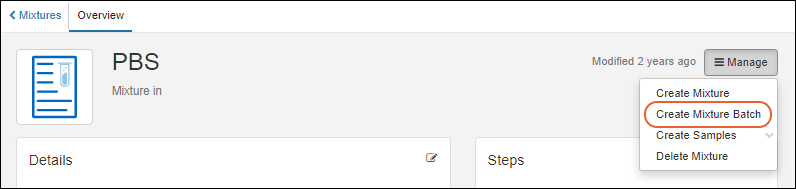

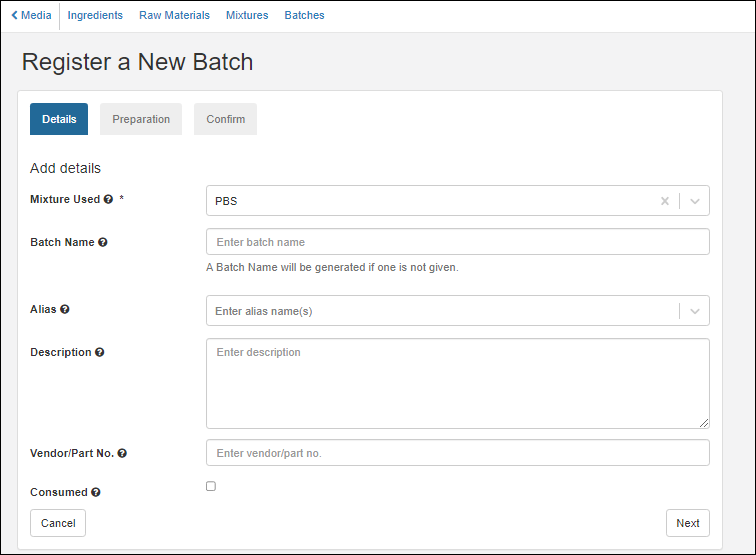

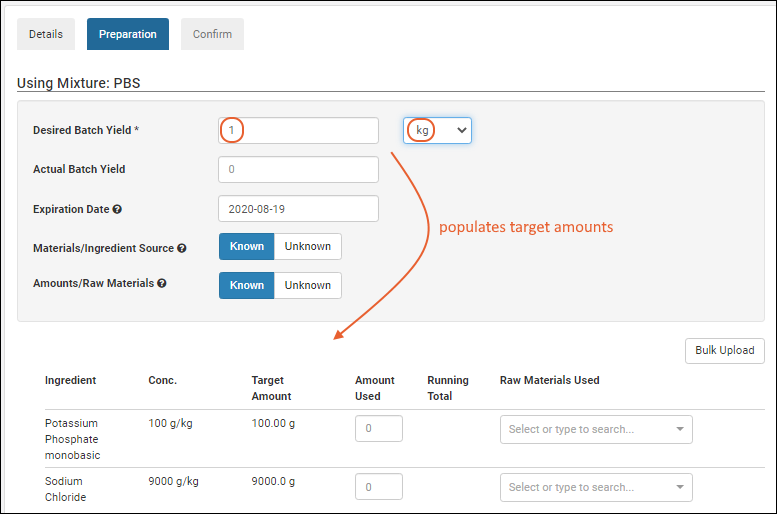

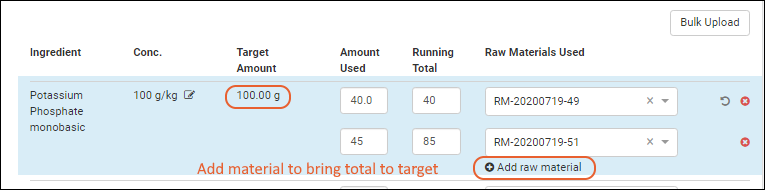

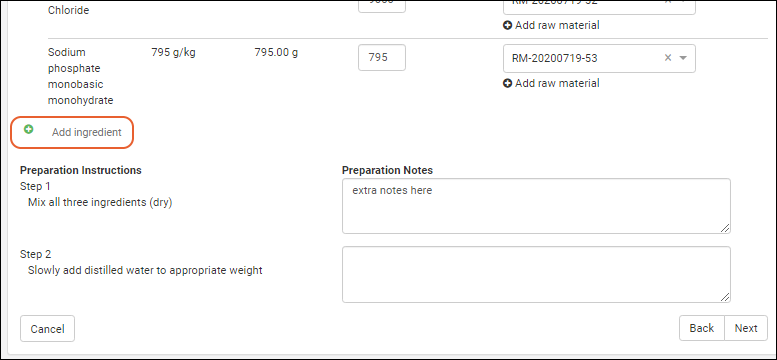

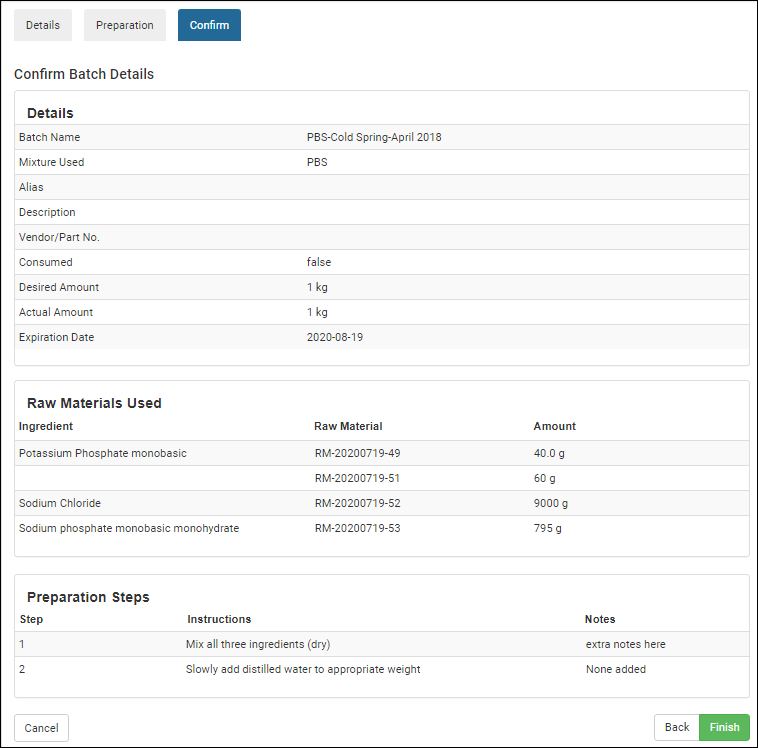

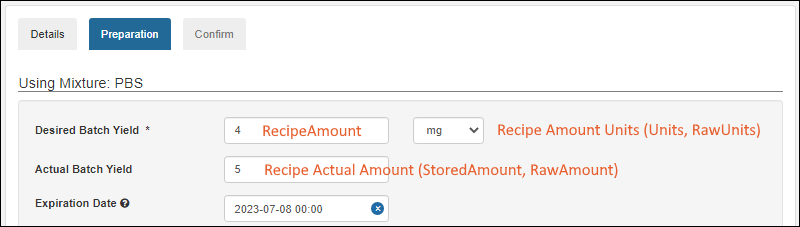

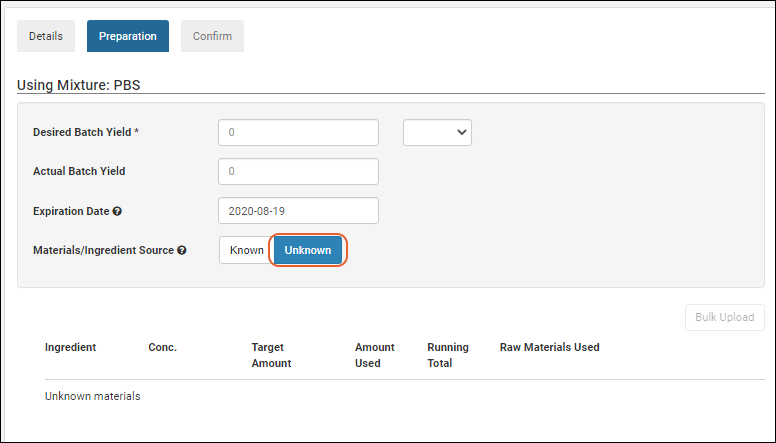

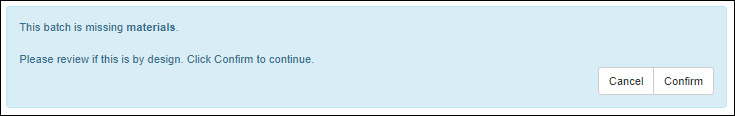

Registering Batches

Biologics Administration

Biologics: Detail Pages and Entry Forms

Biologics: Protect Sequence Fields

Manage Notebook Tags

Biologics Admin: URL Properties

Explore LabKey Biologics with a Trial

LIMS Enterprise

Release Notes: LIMS Enterprise

LIMS Suite

Sample Manager

- See how Sample Manager can level up your lab work.

- Getting started with Sample Manager

- LabKey Sample Manager - Guided Tours

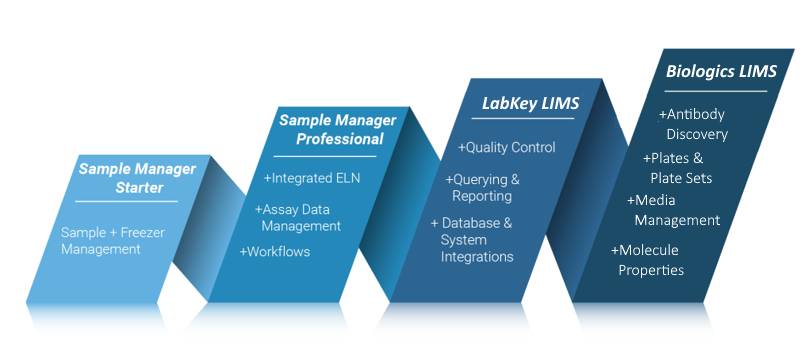

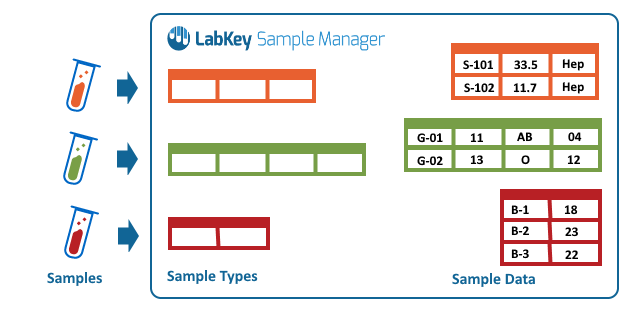

LabKey Sample Manager is one of a suite of LabKey products designed to help you track the entire lifecycle of samples in your lab, along with all associated research data. The topics in this section will help you learn to use the Starter Edition of Sample Manager. All related products share the same general interface, with more functionality added at each tier.

Sample Manager Starter Edition

The intuitive interface makes it easy to:

- Manage and track your laboratory samples with customizable types and sources.

- Use storage management tools to track locations of samples in freezers and other storage. (Learn more in the documentation.)

- Retain an audit-ready log of actions performed on every sample.

Sample Manager Professional Edition

With the Professional Edition of Sample Manager, you will have all of the Starter Edition features, plus be able to:

- Create laboratory workflows and standardize sample processing.

- Upload associated assay and experiment result data and link it to your samples.

- Record your work in electronic lab notebooks integrated directly with your data. (Learn more in the documentation.)

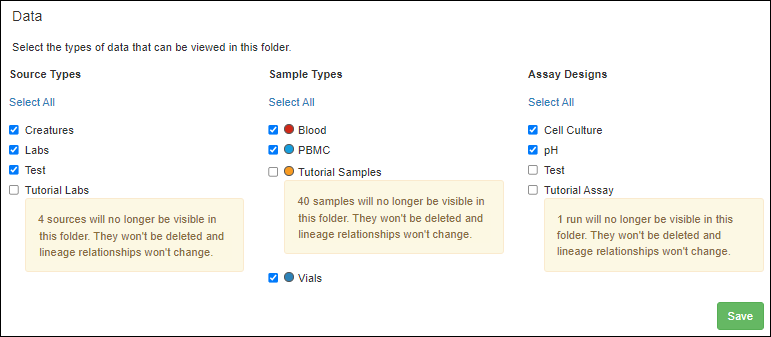

- Partition your data by folder, sharing a storage environment and other resources.

LabKey LIMS

LabKey LIMS includes all features in the Professional Edition of Sample Manager, and adds more, including but not limited to the ability to:

- Generate customizable reports with ease.

- Add automation with transformation scripts for assay data.

- Customize the downloadable templates available to users importing data.

Biologics LIMS

Biologics adds even more functionality to what is available with LabKey LIMS. Learn more here:

Topics

- Get Started with Sample Manager

- Sample Manager Dashboard

- Folder Organization

- User Accounts, Groups, and Roles

More Answers

- Release Notes: Sample Manager: What has changed in each version of Sample Manager.

- Sample Manager - FAQ

How Do I Know the Version of my Application

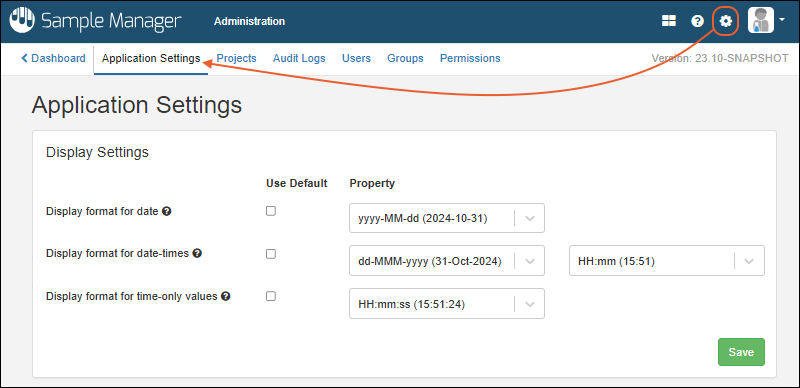

An administrator can see which version is running by selecting > Application Settings. The version is shown in the upper right of every tab in the Administration section.

Using Sample Manager with Google Translate

Note that if you are using Google Translate and run into an error similar to the following, it may be because a piece of text the application expects has not yet appeared in the displayed language when the application looks for it:

Failed to execute 'removeChild' on 'Node': The node to be removed is not a child of this node.

Try refreshing the browser or temporarily disabling Google Translate to complete the action.

Release Notes: Sample Manager

Release 26.3, March 2026

- LIMS Enterprise Edition is now available.

- Better warnings for unknown fields - when importing across sample types, we provide better feedback for unknown fields.

- Improved experience when adding samples to storage - the "Search for Samples" grid now includes the Identifying Fields for a Sample.

- The maximum number of Text Choice options has increased from 200 to 500.

- Expanded audit coverage - the audit log now captures the creation and editing of grid views.

- Exact text searches are now supported by enclosing the search text in double quotes.

Release 26.2, February 2026

- Column widths now adjust dynamically, allowing more columns to be visible at once with less horizontal scrolling. (docs)

- Configured URL links can now be opened in a new browser tab for easier comparison and multitasking. (docs)

- Entities you don't have access to in lineage views are now shown as restricted rather than being omitted, preserving full context without exposing details. (docs)

- Sample Status is available as a filter for "All Sample Types" in Sample Finder. (docs)

Release 26.1, January 2026

- Support for multiple unit types provides improved inventory and material management. (docs)

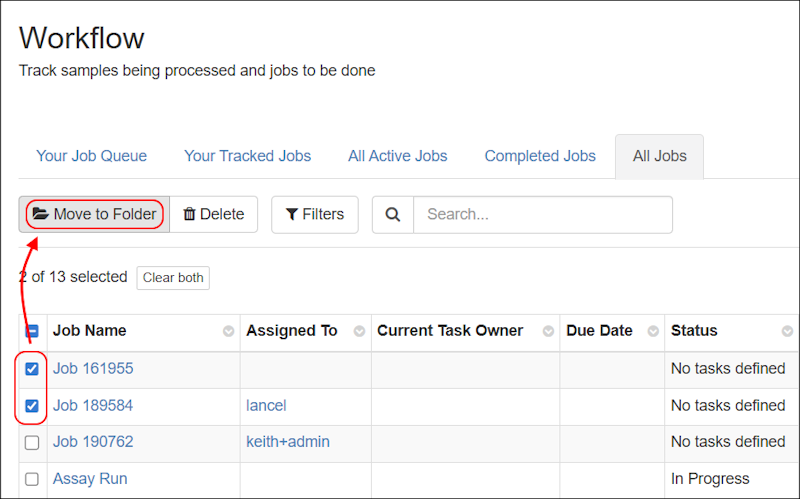

- Move workflow jobs to different folders to better reflect changes in projects or organization. (docs)

- Client APIs can query and update samples using the RowId value; using the LSID value is no longer required.

- Sample names (SampleId) can be updated via a file when RowId is provided. (docs)

Release 25.12, December 2025

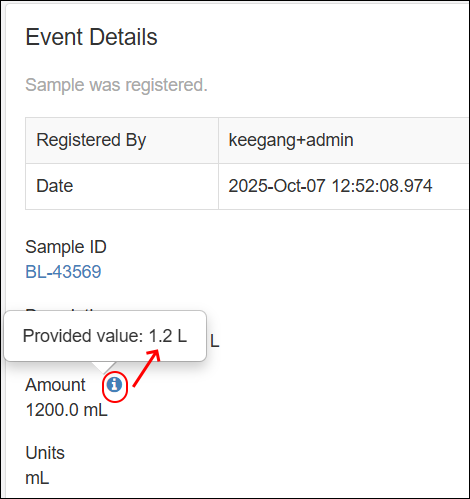

- Amount and Units Fields - Improvements have been made to ensure that the Amount and Units fields function as paired fields. SM-VAL-25.12.A (docs)

- Negative Amount Values Disallowed - Sample Manager now enforces that the Amount field cannot have a negative value. SM-VAL-25.12.B (docs)

- Identifying Fields - Identifying fields are now shown in more assay import scenarios. SM-VAL-25.12.C (docs)

Release 25.11, November 2025

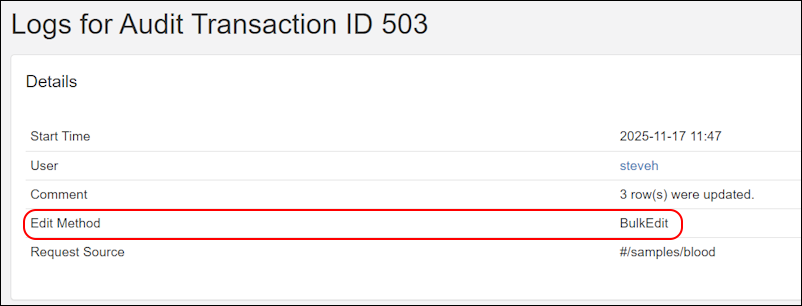

- Audit log captures additional information on the method or webpage location used to insert, update, and delete records. SM-VAL-25.11.A (docs)

- When an ELN notebook is recalled by an administrator, the author will now receive an email notification, improving visibility and timely follow-up. SM-VAL-25.11.B

- The Customize Grid View and Filter pop-up dialogs now list fields alphabetically, making it faster and more intuitive to find and select fields. SM-VAL-25.11.C

- Two-factor authentication is available and can be configured by LabKey. Contact your Account Manager to request changes. SM-VAL-25.11.D

Release 25.10, October 2025

- Amount and Units Changes - The Amount and Units fields are now enforced as a pair: both must be completed together or left empty. SM-VAL-25.10.A (docs)

- Required Fields in Workflow Jobs - Required fields in workflow jobs are now enforced during job creation, instead of during job completion. SM-VAL-25.10.B (docs)

- Identifying Fields - Administrators can now set up to 6 identifying fields. SM-VAL-25.10.C (docs)

Release 25.9, September 2025

- Improved Audit Logging Behavior - The LabKey Client APIs now respect the audit level configured for the system, improving compliance and simplifying development. When both the system and API parameters specify an auditing level, the higher, more detailed level is applied. (docs) SM-VAL-25.9.A

- Several improvements were made to overall system reliability and performance.

Release 25.8, August 2025

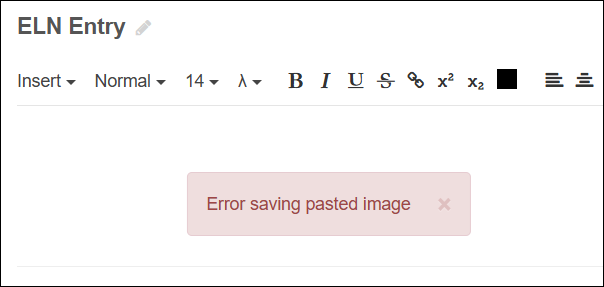

- Improved ELN Editing: ELN editors now get faster feedback when pasting images into an ELN: files pasted into an ELN now fail immediately if they can't be loaded. SM-VAL-25.7-B (docs)

- The CheckedOut date/time stamp is now an available column in sample grids. SM-VAL-25.7-D

- You can now view all audit events for a transaction in one place. SM-VAL-25.8.A (docs)

- The audit log now records original file names when duplicates are automatically renamed. SM-VAL-25.8-B (docs)

Release 25.7, July 2025

- Improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Maintenance Release 25.7.8, September 2025

- Selection order is retained when editing in a grid. SM-VAL-25.7-A

- Improved ELN Editing: ELN editors now get faster feedback when pasting images into an ELN: files pasted into an ELN now fail immediately if they can't be loaded. SM-VAL-25.7-B (docs)

- Improved import feedback: When a file import or file update for Sources includes data for attachment fields, users now see an error message explaining that attachment data cannot be provided via a file. SM-VAL-25.7-C

- The CheckedOut date/time stamp is now an available column in sample grids. SM-VAL-25.7-D (docs)

- We have addressed an issue with moving assay runs: files in runs that have multiple file fields now remain correctly associated after a move. SM-VAL-25.7-E

- We have addressed an issue with cross-sample-type import or cross-folder sample import, where the CheckedOut column was being ignored. SM-VAL-25.7-F

- We have addressed an issue with cross-sample-type import and cross-folder sample import, where Yes/No text fields were being inadvertently converted to Boolean values. SM-VAL-25.7-G

- We have addressed an issue where samples being removed from storage could not be assigned a Locked sample status type. SM-VAL-25.7-H

Release 25.6, June 2025

- Lineage details can be used in aliquot naming patterns. SM-VAL-25.6-A (docs)

- Users can enter a reason when they make changes to a Sample Type, Source Type, or Assay Design. SM-VAL-25.6-B (docs)

- Fields of type "Sample" can be set to validate that values already exist in the system. SM-VAL-25.6-C (docs)

- Several improvements were made to address overall system reliability and performance.

Release 25.5, May 2025

- Several improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Release 25.4, April 2025

- Several improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Release 25.3, March 2025

Maintenance Release 25.3.2, April 2025

- Bulk edit grids now show amounts as entered, regardless of selected units.

- Field names longer than 40 characters are now supported, though not recommended.

Release 25.3.0, March 2025

- You can now use numeric positions for boxes, plates, and tube racks (instead of xy coordinates) when that will better match your lab. SM-VAL-25.3-A (docs)

- Improved support for using special characters in column names and data. SM-VAL-25.3-B (docs)

- Our content security policy (CSP) has been updated to enforce strong settings that block serious cybersecurity threats. Administrators can add allowed external resources if needed. SM-VAL-25.3-C (docs)

Release 25.2, February 2025

- The main dashboard has been simplified. SM-VAL-25.2-A (docs)

- The last storage location is remembered on a per-sample-type basis, making it easier for users who work with different materials to return to the right locations. SM-VAL-25.2-B (docs)

- Multiple Sample IDs or Source IDs can now be edited in the editable grid. If desired, you can prevent this by disallowing user-defined names. SM-VAL-25.2-C (docs | docs)

- Adding samples to workflow jobs has been streamlined. SM-VAL-25.2-D (docs)

- Workflow jobs, tasks, and notification subscriptions can be assigned by group as well as by individual user. SM-VAL-25.2-E (docs)

Release 25.1, January 2025

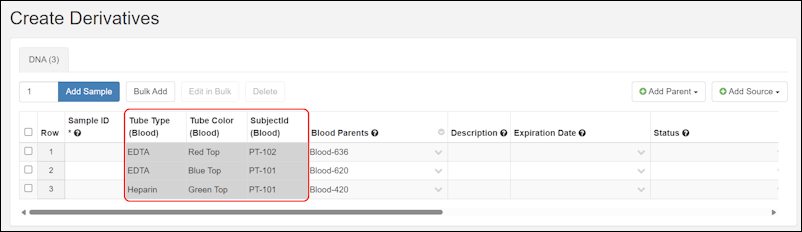

- Creating or deriving samples of multiple types at once can now be done with a streamlined interface. SM-VAL-25.1-A (docs)

- The process of adding lineage details when creating and deriving samples and sources is more consistent. SM-VAL-25.1-B (docs)

- Special characters are not allowed in the names of structures like Sample Types, Source Types, and Assay Designs. SM-VAL-25.1-C (docs)

- When importing new samples and sources from a file, first navigate to the folder where you want to create them. SM-VAL-25.1-D (docs)

Release 24.12, December 2024

- The main dashboard shows "Locations Recently Added To" for easy access to recent storage locations. SM-VAL-24.12-A (docs)

- Improvements in creating and deriving samples and sources let you choose the type and number to create up front. SM-VAL-24.12-B (docs)

- Use identifying fields when editing samples in grids and viewing tooltips during sample creation and derivation. SM-VAL-24.12-C (docs)

- Importing assay data from a workflow job will now only choose relevant sample IDs. SM-VAL-24.12-D (docs)

Release 24.11, November 2024

- Customize which Identifying Fields are shown when users choose samples or sources from dropdowns. SM-VAL-24.11-B (docs)

- Date, time, and datetime fields are now limited to a set of common display patterns, making it easier for users to choose the desired format. SM-VAL-24.11-C (docs)

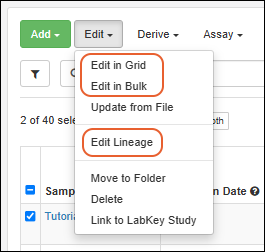

- A simplified interface for editing lineage and storage information replaces the previous additional tabs on editable grids. SM-VAL-24.11-D (docs)

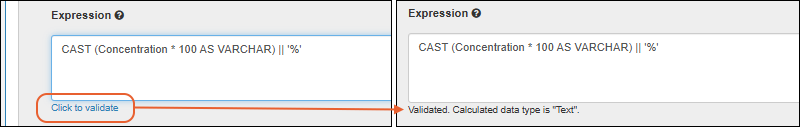

- Include "Calculation" fields in your sample, source, and assay definitions; these fields can perform calculations on any combination of system and user-defined fields. SM-VAL-24.11-A (docs)

- Folders that are no longer in use can be archived to hide them from view. SM-VAL-24.11-E (docs)

- An administrator may amend any notebook. SM-VAL-24.11-F (docs)

Release 24.10, October 2024

- Lineage relationships can now be marked as required when creating or updating samples or sources. SM-VAL-24.10-A (docs)

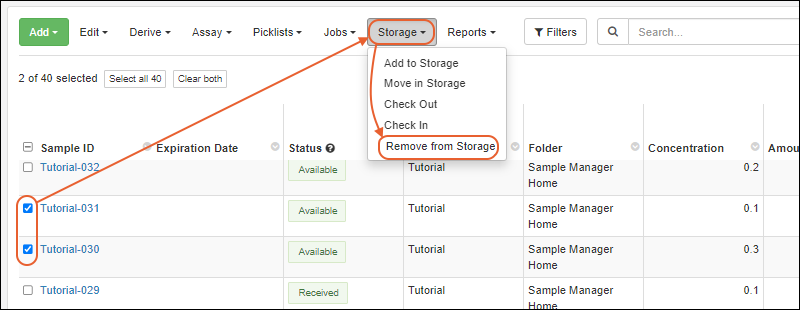

- The term "Remove" is now used instead of "Discard" when a sample is taken out of a storage system. SM-VAL-24.10-B (docs)

- The term "Folder" is now used instead of "Project" to describe a subcontainer or partition of data within the application. All data scoping, user access, and configuration options are unchanged. SM-VAL-24.10-C (docs)

Release 24.9, September 2024

- More easily create storage during sample import by adding labels on terminal storage units. SM-VAL-24.9-A (docs)

- Make naming samples easier with the ability to name your samples based on source or sample ancestors regardless of hierarchy. SM-VAL-24.9-B (docs)

- When adding samples to storage, you can use the Sample Creation Order, i.e. the "reverse" of the default grid order. SM-VAL-24.9-C (docs | docs)

- Resolved an issue with editing samples with mixed parent sample or source IDs. In certain scenarios where projects were in use, lineage was unintentionally removed when samples were edited in the grid and their sample or source parents had IDs that mixed numeric and non-numeric values. SM-VAL-24.7.5-J

Release 24.8, August 2024

- More easily get your sample templates to BarTender by exporting the "BarTender Template". SM-VAL-24.8-A (docs)

- Editable grids now validate entered values as you go, rather than waiting for save to check all entries. SM-VAL-24.8-B (docs)

- Resolved an issue with moving boxes and deleting storage hierarchy. In certain scenarios, boxes containing samples were unintentionally deleted when their previous location was deleted while they were being moved. SM-VAL-24.7.3-H

Release 24.7, July 2024

Maintenance Release 24.7.5, September 2024

- Resolved an issue with editing samples with mixed parent sample or source IDs. In certain scenarios where projects were in use, lineage was unintentionally removed when samples were edited in the grid and their sample or source parents had IDs that mixed numeric and non-numeric values. SM-VAL-24.7.5-J

Maintenance Release 24.7.3, August 2024

- Resolved an issue with moving boxes and deleting storage hierarchy. In certain scenarios, boxes containing samples were unintentionally deleted when their previous location was deleted while they were being moved. SM-VAL-24.7.3-H

Release 24.7.0, July 2024

- The storage dashboard now lists recently used freezers first, and loads the first ten by default, making navigating large storage systems easier for users. SM-VAL-24.7-A (docs)

- New roles, Sample Type Designer and Source (Data Class) Designer, improve the ability to customize the actions available to individual users. SM-VAL-24.7-B (docs)

- Fields with a description (shown in a tooltip) now display an icon to alert users that more information is available. SM-VAL-24.7-C (docs)

- Lineage across multiple Sample Types can be viewed using the "Ancestor" node on the "All Samples" tab of the grid. SM-VAL-24.7-D (docs)

- Editable grids support locking column headers and sample identifier details, making it easier to tell which cell is being edited. SM-VAL-24.7-F (docs)

- Exporting data from a grid while data is being edited is no longer supported. SM-VAL-24.7-E (docs)

- Workflow jobs cannot be deleted if they are referenced from a notebook. SM-VAL-24.7-G (docs)

Release 24.6, June 2024

- An "Unavailable File" indicator makes it easier to identify files that weren't uploaded. SM-VAL-24.6-A (docs)

- The process of adding samples to storage has been streamlined to make it clearer that the preview step is only showing current contents. SM-VAL-24.6-B (docs)

- Customize the certification language used during ELN signing to better align with your institution's requirements. SM-VAL-24.6-C (docs)

- Workflow templates can now be copied to make it easier to generate similar templates. SM-VAL-24.6-D (docs)

Release 24.5, May 2024

- Assign custom colors to sample statuses to more easily distinguish them at a glance. SM-VAL-24.5-A (docs)

- Easily download and print a box view of your samples to share or assist in using offline locations like some freezer "farms". SM-VAL-24.5-B (docs)

- Use any user-defined Sample properties in the Sample Finder. SM-VAL-24.5-C (docs)

- The Review Timeline panel makes it easier to understand notebook review history and changes, including recalls, returns for changes, and more. SM-VAL-24.5-D (docs)

- Administrators can require a reason when a notebook is recalled. SM-VAL-24.5-E (docs)

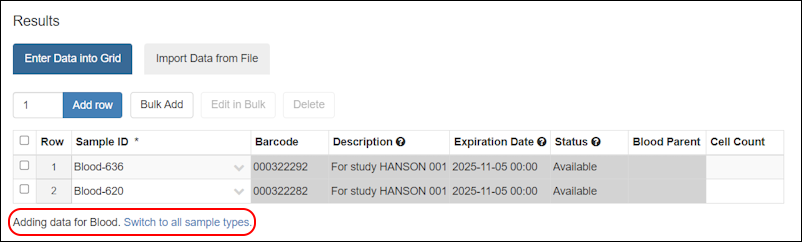

- Add samples to any project without first having to navigate there. SM-VAL-24.5-F (docs)

- Edit samples across multiple projects, provided you have the appropriate permissions. SM-VAL-24.5-G (docs)

Release 24.4, April 2024

- Maintenance release 24.4.1 addresses an issue with uploading files during bulk editing of Sample data. SM-VAL-24.4.1-K

- Users can better comply with regulations by entering a Reason for Update when editing sample, source or assay data. SM-VAL-24.4-A (docs)

- More quickly find the location you need when adding samples to storage (or moving them) by searching for storage units by name or label. SM-VAL-24.4-B (docs)

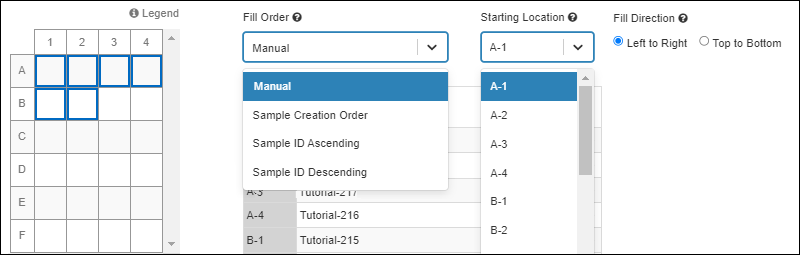

- Choose either an automatic (specifying box starting position and Sample ID order) or manual fill when adding samples to storage or moving them. SM-VAL-24.4-C (docs)

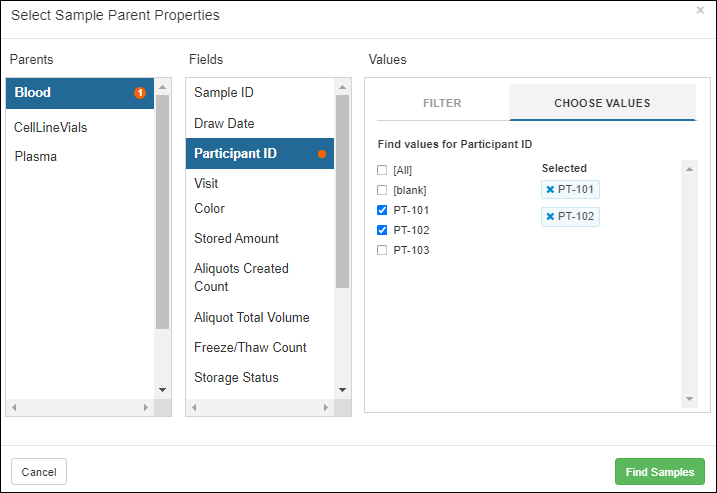

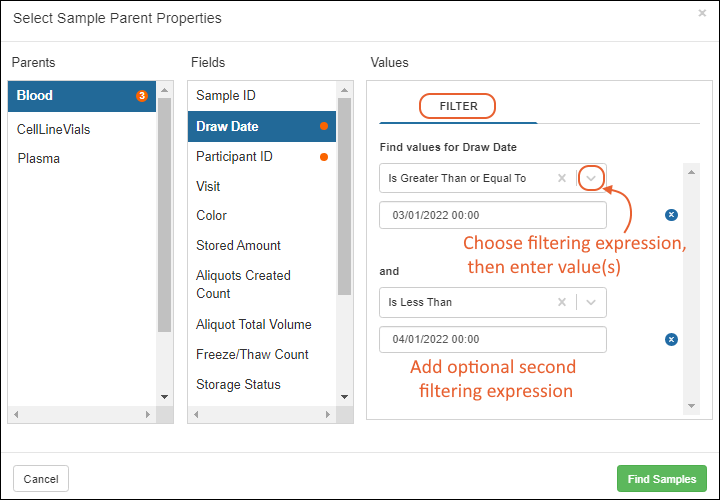

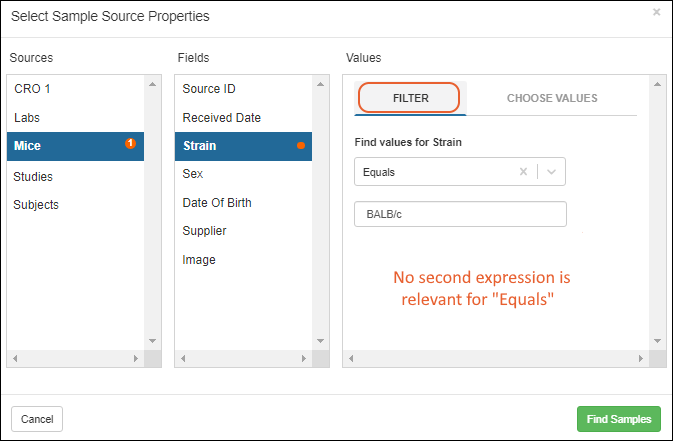

- More easily find samples in Sample Finder by searching with user-defined fields in sample parent and source properties. SM-VAL-24.4-D (docs)

- Lineage graphs have been updated to reflect "generations" in horizontal alignment, rather than always showing the "terminal" level aligned at the bottom. SM-VAL-24.4-E (docs)

- Hovering over a column label will show the underlying column name. SM-VAL-24.4-F (docs)

- Up to 20 levels of lineage can be displayed using the grid view customizer. SM-VAL-24.4-G (docs)

- Administrators of the Professional Edition can set the application to require users to provide reasons for updates as well as other actions like deletions. SM-VAL-24.4-H (docs)

- Moving entities between projects is easier now that you can select entities from multiple projects at once and move them to a new project. SM-VAL-24.4-J (docs)

Sample Manager User Conference - March 28, 2024

Watch the session recordings to hear what's new in Sample Manager, best practices for using key features, and other users sharing how Sample Manager is used in their labs.

Release 24.3, March 2024

Maintenance Release 24.3.5, May 2024

- Resolved an issue with importing across Sample Types in certain time zones. Date, datetime, and time fields were sometimes inconsistently translated.

- Resolved an issue with uploading files during bulk editing of Sample data.

Release 24.3.0, March 2024

- Storing or moving samples to a single box now allows you to choose the order in which to add them as well as the starting position within the box. (docs)

- When discarding a sample, by default the status will change to "Consumed". Users can adjust this as needed. (docs)

- While browsing into and out of storage locations, the last path into the hierarchy will be retained so that the user returns to where they were previously. (docs)

- The storage location string can be copied; pasting outside the application will include the slash separators, making it easier to populate a spreadsheet for import. (docs)

- The icons and are now used for expanding and collapsing sections, replacing and . (docs)

- Assay run fields can now use the datatypes "Date" or "Time". (docs)

- The ":withCounter" naming pattern modifier is now case-insensitive. (docs)

- Workflow templates can be edited before any jobs are created from them, including updating the tasks and attachments associated with them. (docs)

Release 24.2, February 2024

- In Sample Finder, use the "Equals All Of" filter to search for Samples that share up to 10 common Sample Parents or Sources. (docs)

- Include fields of type "Date" or "Time" in Samples, Sources, and Assay Results. (docs)

- Users can now sort and filter on the Storage Location columns in Sample grids. (docs)

- Sample types can be selectively hidden from the Insights panel on the dashboard, helping you focus on the samples that matter most. (docs)

- The Sample details page now clarifies that the "Source" information shown there is only one generation of Source Parents. For full lineage details, see the "Lineage" tab. (docs)

- Up to 10 levels of lineage can be displayed using the grid view customizer. (docs)

- Menu and dashboard language is more consistent about shared team resources vs. your own items. (docs)

- The application can be configured to require reasons (previously called "comments") for actions like deletion and storage changes. Otherwise providing reasons is optional. (docs)

- Developers can generate an API key for accessing client APIs from scripts and other tools. (docs)

Release 24.1, January 2024

- A banner message within the application links you directly to these release notes to help you learn what's new in the latest version. (docs)

- Search for samples by storage location. Box names and labels are now indexed to make it easier to find storage in larger systems. (docs)

- Only an administrator can delete a storage system that contains samples. Non-admin users with permission to delete storage must first remove the samples from that storage in order to be able to delete it. (docs)

- Electronic Lab Notebook enhancements:

- All notebook signing events require authentication using both username and password. Also available in version 23.11.4. (docs | docs)

- From the notebook details panel, see how many times a given item is referenced and easily open its details or find it in the notebook. (docs)

- Workflow jobs can be referenced from Electronic Lab Notebooks. (docs)

- Fixed an issue on Chromium-based browsers (e.g., Chrome, Edge) that caused jumpiness while authoring notebooks. Also available in versions 23.11.7 and 24.1.2.

Release 23.12, December 2023

- Samples can be moved to multiple storage locations at once. (docs)

- New storage units can be created when samples are added to storage. (docs)

- The table of contents for a notebook now includes headings and day markers from within document entries. (docs)

- Workflow templates can now have editable assay tasks allowing common workflow procedures to have flexibility of the actual assay data needed. (docs)

- When samples or aliquots are updated via the grid, the units will be correctly maintained at their set values. This addresses an issue in earlier releases and is also fixed in the 23.11.2 maintenance release.

Release 23.11, November 2023

- Moving samples between storage locations now uses the same intuitive interface as adding samples to new locations. (docs)

- Sample grids now include a direct menu option to "Move Samples in Storage". (docs)

- Standard assay designs can be renamed. (docs)

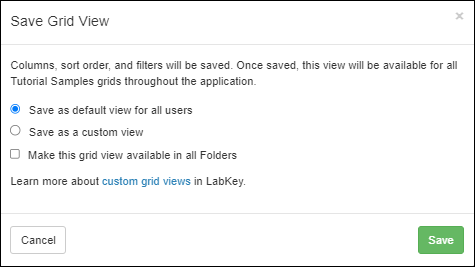

- Users can share saved custom grid views with other users. (docs)

- Authorized users no longer need to navigate to the home project to add, edit, and delete data structures including Sample Types, Registry Source Types, Assay Designs, and Storage. Changes can be made from within a subproject, but still apply to the structures in the home project. (docs)

- The interface has changed so that the 'cancel' and 'save' buttons are always visible to the user in the browser. (docs)

Release 23.10, October 2023

- Adding Samples to Storage is easier with preselection of a previously used location, and the ability to select which direction to add new samples: left to right (the default) or top to bottom. (docs)

- When Samples or Sources are created manually, any import aliases that exist will be included as parent fields by default, making it easier to set up expected lineage relationships. (docs)

- Grid settings are now persistent when you leave and return to a grid, including which filters, sorts, and paging settings you were using previously. (docs)

- Header menus have been reconfigured to make it easier to find administration, settings, and help functions throughout the application. (docs)

- Panels of details for Sample Types, Sources, Storage, etc. are now collapsed and available via hover, putting the focus on the data grid itself. (docs)

Release 23.9, September 2023

- View all samples of all types from a new dashboard button. (docs)

- When adding samples to storage, users will see more information about the target storage location including a layout preview for boxes or plates. (docs)

- Longer storage location paths will be 'summarized' for clearer display in some parts of the application. (docs)

- Naming patterns can now incorporate a sampleCount or rootSampleCount element. (docs)

Release 23.8, August 2023

- Sources can have lineage relationships, enabling the representation of more use cases. (docs)

- Two new calculated columns provide the "Available" aliquot count and amount, based on each sample's status. (docs)

- The amount of a sample can be updated easily during discard from storage. (docs)

- Customize the display of date/time values on an application-wide basis. (docs)

- The aliquot naming pattern will be shown in the UI when creating or editing a sample type. (docs)

- The allowable box size for storage units has been increased to accommodate common slide boxes with up to 50 rows. (docs)

- Options for saving a custom grid view are clearer. (docs)

Release 23.7, July 2023

- Define locations for storage systems within the app. (docs)

- Create new freezer hierarchy during sample import. (docs)

- Import & update samples across multiple sample types from a single file. (docs)

- Administrators can perform the actions of the Storage Editor role. (docs)

- Improved options for bulk populating editable grids, including better "drag-fill" behavior and multi-cell cut and paste. (docs | docs)

- Enhanced security: lineage views, the sample timeline, and ELNs no longer provide access to, or identifiers for, data in other projects. (docs)

- ELN Improvements:

  - References to other objects in an ELN now use @ instead of /. (docs)

  - Autocompletion makes finding objects to reference easier. (docs)

- Move Assay Runs and Notebooks between Projects. (docs | docs)

Release 23.6, June 2023

- See an indicator in the UI when a sample is expired. (docs)

- On Sample grids that support multiple Sample Types (such as those on Picklist, Workflow, and Source Details pages), when only one Sample Type is present, that type's tab is shown by default instead of the general "All Samples" tab. (docs)

- Track the edit history of Notebooks. (docs)

- Control which data structures and storage systems are visible in which Projects. (docs)

- Move Sources between Projects. (docs)

- Project menu includes quick links to settings and the dashboard for that Project. (docs)

Release 23.5, May 2023

- Move Samples between Projects. (docs)

- Assay Results grids can be modified to show who created and/or modified individual result rows and when. (docs)

- BarTender templates can only be defined in the home Project. (docs)

- ELN Improvements: Pagination controls are available at the bottom of the ELN dashboard. (docs)

- A new "My Tracked Jobs" option helps users follow workflow tasks of interest, even when those tasks are not assigned to them. (docs)

Release 23.4, April 2023

- Add the sample amount during sample registration to better align with laboratory processes. (docs | docs)

- StoredAmount, Units, RawAmount, and RawUnits field names are now reserved. (docs)

- Users with a significant number of samples in storage may see slower-than-usual upgrade times while these fields are migrated. Any users with existing custom fields for providing amount or units during sample registration are encouraged to migrate to the new fields prior to upgrading.

- Use the Sample Finder to find samples by sample properties, as well as by parent and source properties. (docs)

- Built-in Sample Finder reports help you track expiring samples and those with low aliquot counts. (docs)

- Text search result pages are more responsive and easier to use. (docs)

- Administrators can specify a default BarTender template. (docs)

- ELN Improvements: View archived notebooks. (docs)

- Sample Status values can only be defined in the home project. Existing unused custom status values in sub-projects will be deleted. If you were using projects and custom status values prior to this upgrade, you may need to contact us for assistance. (docs)

Release 23.3, March 2023

- Samples can have expiration dates, making it possible to track expiring inventories. (docs)

- If your samples already have expiration dates, contact your Account Manager for help migrating to the new fields.

- Administrators can see which version of Sample Manager they are running. (docs)

- ELN Improvements:

Potential Backwards Compatibility Issue: In 23.3, we added the materialExpDate field to support expiration dates for all samples. If you happen to have a custom field by that name on any Sample Type, you should rename it prior to upgrading to avoid loss of data in that field.

Release 23.2, February 2023

- Capture why data is deleted with user comments at the time of deletion. (docs | docs)

- Data update (or merge) via file has moved to the "Edit" menu of a grid. Importing from file on the "Add" menu is only for adding new data rows. (docs | docs | docs)

- Use grid customization after finding samples by ID or barcode, making it easier to work with samples of interest. (docs)

- Electronic Lab Notebooks now include a full review and signing event history in the exported PDF, with a consistent footer and entries beginning on the second page of the PDF. (docs)

- Projects in the Professional Edition of Sample Manager are more usable and flexible.

- Data structures like Sample Types, Source Types, Assay Designs, and Storage Systems must always be created in the top level home project. (docs)

Release 23.1, January 2023

- Storage management has been generalized to clearly support non-freezer types of sample storage. (docs)

- Samples will be added to storage in the order they appear in the selection grid. (docs)

- Curate multiple BarTender label templates, so that users can easily select the appropriate format when printing. (docs)

- Electronic Lab Notebook enhancements:

  - To submit a notebook for review, or to approve a notebook, the user must provide an email and password during the first signing event to verify their identity. (docs | docs)

  - Set the font size, plus other editing updates. (docs)

  - Sort Notebooks by "Last Modified". (docs)

  - Find all ELNs created from a given template. (docs)

- An updated main menu makes it easier to access resources across projects. (docs)

- Easily update Assay Run-level fields in bulk instead of one run at a time. (docs)

Release 22.12, December 2022

- The Professional Edition supports multiple Sample Manager Projects. (docs)

- Improved interface for assay design and data import. (docs | docs)

- From assay results, select sample IDs to examine derivatives of those samples in the Sample Finder. (docs)

Release 22.11, November 2022

- Add samples to multiple freezer storage locations in a single step. (docs)

- Improvements in the Storage Dashboard to show all samples in storage and recent batches added by date. (docs)

- View all assay results for samples in a tabbed grid displaying multiple sample types. (docs)

- ELN improvements to make editing and printing easier with a table of contents highlighting all notebook entries and fixed width entry layout, plus new formatting symbols and undo/redo options. (docs)

- New role available: Workflow Editor, granting the ability to create and edit workflow jobs and picklists. (docs)

- Notebook review can be assigned to a user group, supporting team workload balancing. (docs)

Release 22.10, October 2022

- Use sample ancestors in naming patterns, making it possible to create common name stems based on the history of a sample. (docs)

- Additional entry points to Sample Finder. Select a source or parent and open all related samples in the Sample Finder. (docs | docs)

- Video Tutorial: Sample Finder

- New role available: Editor without Delete. Users with this role can read, insert, and update information but cannot delete it. (docs)

- Group management allows permissions to be managed at the group level instead of always individually. (docs | docs)

- With the Professional Edition, use assay results as a filter in the Sample Finder, helping you find samples based on characteristics like cell viability. (docs)

- Assay run properties can be edited in bulk. (docs)

Release 22.9, September 2022

- Searchable, filterable, standardized user-defined fields on workflow enable teams to create structured requests for work, define important billing codes for projects and eliminate the need for untracked email communication. (docs)

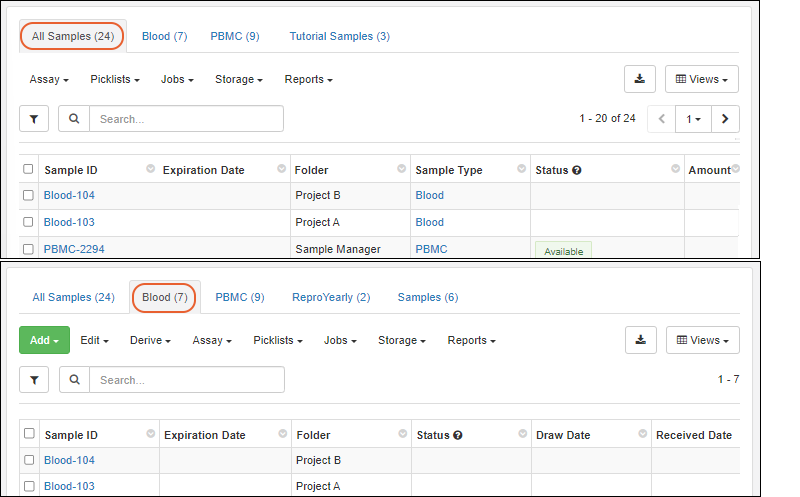

- Storage grids and sample search results now show multiple tabs for different sample types. With this improvement, you can better understand and work with samples from anywhere in the application. (docs | docs | docs)

- The leftmost column of sample data, typically the Sample ID, is always shown as you examine wide datasets, making it easy to remember what sample's data you were looking at. (docs)

- By prohibiting deletion of samples that are referenced in an ELN, Sample Manager helps you further protect the integrity of your data. (docs)

- Easily capture amendments to signed Notebooks when a discrepancy is detected to ensure the highest quality entries and data capture, tracking the events for integrity. (docs)

- When exploring a Sample of interest, you can easily find and review any associated notebooks. (docs)

Release 22.8, August 2022

- Aliquots can have fields that are not inherited from the parent sample. Administrators can control which parent sample fields are inherited and which can be set independently for the sample and aliquot. (docs)

- Drag within editable grids to quickly populate fields with matching strings or number sequences. (docs)

- When exporting a multi-tabbed grid to Excel, see sample counts and which view will be used for each tab. (docs)

Release 22.7, July 2022

- Our user-friendly ELN (Electronic Lab Notebook) is designed to help scientists efficiently document their experiments and collaborate. This data-connected ELN is seamlessly integrated with other laboratory data in the application, including lab samples, assay data and other registered data. (docs)

- Make manifest creation and reporting easier by exporting sample types across tabs into a multi-tabbed spreadsheet. (docs)

- All users can now create their own named custom view of grids for optimal viewing of the data they care about. Administrators can customize the default view for everyone. (docs)

- Create a custom view of your data by rearranging, hiding or showing columns, adding filters or sorting data. (docs)

- With saved custom views, you can view your data in multiple ways depending on what’s useful to you or needed for standardized, exportable reports and downstream analysis. (docs)

- Customized views of the audit log can be added to give additional insight. (docs)

- Export data from an 'edit in grid' panel, particularly useful in assay data imports for partially populating a data 'template'. (docs | docs)

- Newly surfaced Picklists allow individuals and teams to create sharable sample lists for easy shipping manifest creation and capturing a daily work list of samples. (docs)

- Updated main dashboard providing quick access to notebooks in the Professional Edition of Sample Manager. (docs)

- Samples can now be renamed in the case of a mistake; all changes are recorded in the audit log and sample ID uniqueness is still required. (docs)

- The column header row is 'pinned' so that it remains visible as you scroll through your data. (docs)

- Deleting samples from the system entirely when necessary is now available from more places, including the Samples tab for a Source. (docs)

Release 22.6, June 2022

- Save time looking for samples and create standard sample reports by saving your Sample Finder searches to access later. (docs)

- Support for commas in Sample and Source names. (docs)

- Administrators will see a warning when the number of users approaches the limit for your installation. (docs)

Release 22.5, May 2022

- Updated grid menus: Sample grids now help you work smarter (not harder) by highlighting actions you can perform on samples and grouping them to make them easier to discover and use. (docs)

- Revamped grid filtering and enhanced column header options for more intuitive sorting, searching and filtering. (docs)

- Sort and filter based on 'lineage metadata', bringing ancestor information (Source and Parent details) into sample grids. (docs)

- Rename Source Types and Sample Types to support flexibility as your needs evolve. Names/SampleIDs of existing samples and sources will not be changed. (docs)

- Descriptions for workflow tasks and jobs can be multi-line when you use Shift-Enter to add a newline. (docs)

Release 22.4, April 2022

- In the Sample Finder, apply multiple filtering expressions to a given column of a parent or source type. (docs)

- Download templates from more places, making it easier to import samples, sources, and assay data from files. (docs)

Release 22.3, March 2022

- Sample Finder: Find samples based on source and parent properties, giving users the flexibility to locate samples based on relationships and lineage details. (docs)

- Redesigned main dashboard featuring storage information and prioritizing what users use most. (docs)

- Updated freezer overview panel and dashboards present storage summary details. (docs)

- Available freezer capacity is shown when navigating freezer hierarchies to store, move, and manage samples. (docs | docs)

- Storage labels and descriptions give users more ways to identify their samples and storage units. (docs)

Release 22.2, February 2022

- New Storage Editor and Storage Designer roles, allowing admins to assign different users the ability to manage freezer storage and manage sample and assay definitions. (docs)

- Note that users with the "Administrator" and "Editor" roles no longer have the ability to edit storage information unless they are granted one of these new storage roles.

- Multiple permission roles can be assigned to a new user at once. (docs)

- Sample Type Insights panel summarizes storage, status, etc. for all samples of a type. (docs)

- Sample Status options are shown in a hover legend for easy reference. (docs)

- When a sample is marked as "Consumed", the user will be prompted to also change its storage status to "Discarded" (and vice versa). (docs | docs)

- User-defined barcodes in integer fields can also be included in sample definitions and search-by-barcode results. (docs)

- Search menu includes quick links to search by barcode or sample ID. (docs)

- See and set the value of the "genId" counter for naming patterns. (docs)

Release 22.1, January 2022

- A new Text Choice data type lets admins define a set of expected text values for a field. (docs)

- Naming patterns will be validated during sample type definition. (docs)

- Editable grids include visual indication when a field offers dropdown choices. (docs)

- Add freezer storage units in bulk. (docs)

- User-defined barcodes can be included in Sample Type definitions as text fields and are scanned when searching samples by barcode. (docs | docs)

- If any of your Sample Types include samples with only strings of digits as names, these could have overlapped with the "rowIDs" of other samples, producing unintended results or lineages. With this release, such ambiguities will be resolved by assuming that a sample name has been provided. (docs)

Release 21.12, December 2021

- The Sample Count by Status graph on the main dashboard now shows samples by type (in bars) and status (using color coding). Click through to a grid of the samples represented by each block. (docs)

- Grids that may display multiple Sample Types, such as picklists, workflow tasks, etc. offer tabs per sample type, plus a consolidated list of all samples. This enables actions such as bulk sample editing from mixed sample-type grids. (picklists | tasks | sources)

- Improved display of color coded sample status values. (docs)

- Include a comment when updating storage amounts or freeze/thaw counts. (docs)

- Workflow tasks involving assays will prepopulate a grid with the samples assigned to the job, simplifying assay data entry. (docs)

Release 21.11, November 2021

- Archive an assay design so that new data is disallowed, but historic data can be viewed. (docs)

- Manage sample status, including but not limited to: available, consumed, locked. (docs)

- An additional reserved field "SampleState" has been added to support this feature. If your existing Sample Types use user defined fields for recording sample status, you will want to migrate to using the new method.

- Incorporate lineage lookups into sample naming patterns. (docs)

- Assign a prefix to be included in the names of all Samples and Sources created in a given project. Removed in version 22.12.

- Prevent users from entering their own IDs/names, to maintain consistency with defined naming patterns. (docs)

Release 21.10, October 2021

- Customize the aliquot naming pattern. (docs)

- Sample names can incorporate field-based counters using :withCounter syntax. (docs)

- Record the physical location of freezers you manage, making it easier to find samples across distributed sites. (docs)

- Manage all samples and aliquots created from a source more easily. (docs)

- Comments on workflow job tasks can be formatted in markdown and multithreaded. (docs)

- Redesigned job tasks page. (docs)
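The :withCounter item above describes a counter that increments separately for each distinct value of the expression it is attached to, rather than one global sequence. The Python sketch below models that idea. The class name `PrefixCounter`, the start index, and the zero-padding option are hypothetical illustrations. They are not LabKey's implementation or its naming-pattern syntax.

```python
from collections import defaultdict


class PrefixCounter:
    """Hypothetical model of a field-based counter (not LabKey's actual code)."""

    def __init__(self, start: int = 1, width: int = 0) -> None:
        # Every prefix seen for the first time begins at `start`.
        self._next = defaultdict(lambda: start)
        # Zero-pad the counter to this many digits (0 means no padding).
        self._width = width

    def name(self, prefix: str) -> str:
        # Each distinct prefix keeps its own independent counter.
        value = self._next[prefix]
        self._next[prefix] = value + 1
        return prefix + str(value).zfill(self._width)


counter = PrefixCounter()
for prefix in ["Lot1-", "Lot1-", "Lot2-", "Lot1-"]:
    print(counter.name(prefix))
```

Because the counter is keyed on the evaluated prefix, samples from `Lot2-` start at 1 again instead of continuing the `Lot1-` sequence.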

Release 21.9, September 2021

- Edit sources and parents of samples in bulk

- View all samples (and aliquots) created from a given Source

- Lineage graphs changed to show up to 5 generations instead of 3

- Redesigned job overview page

Release 21.8, August 2021

- Aliquot views available at the parent sample level. See aggregate volume and count of aliquots and sub-aliquots.

- Find Samples using barcodes or sample IDs

- Import aliases for sample Sources and Parents are shown in the Sample Type details and included in downloaded templates.

- Improved interface for managing workflow jobs and templates.

Release 21.7, July 2021

- Sample actions can be initiated from Freezer views

- Sample actions can be initiated from Source detail views

Release 21.6, June 2021

- Create Aliquots of samples singly or in bulk

- Create and manage Picklists of samples to simplify operations on groups of samples

- Track movement of storage units within a freezer, or to another freezer

- Minor change in freezer editing behavior: the hierarchy is initially collapsed.

Release 21.5, May 2021

- Copy a Freezer definition

- Samples and Sources can include images and other file attachments

Release 21.4, April 2021

- Barcode generation for Samples

- When inferring fields to create Sources, Sample Types, and Assay Designs from a spreadsheet, any "reserved" fields present will not be shown in the inferred field list, simplifying the creation process

- When importing data, any unrecognized fields will be ignored and a banner will be shown

- On the main menu, "My Assigned Work" has been renamed "My Queue"

Release 21.3, March 2021

- A new Tube Rack type of freezer storage unit has been added

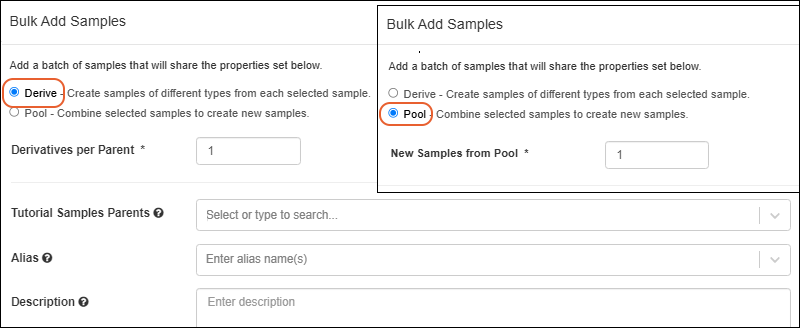

- Derive samples from one or more parent samples

- Pool multiple samples into a set of new samples

- The field editor offers a new "Summary" view

Release 21.2, February 2021

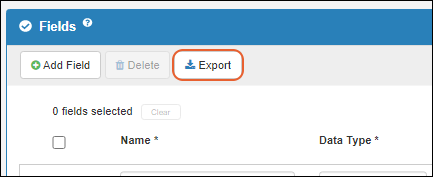

- The field editor now includes checkboxes to enable deletion of multiple fields and export of subsets of fields.

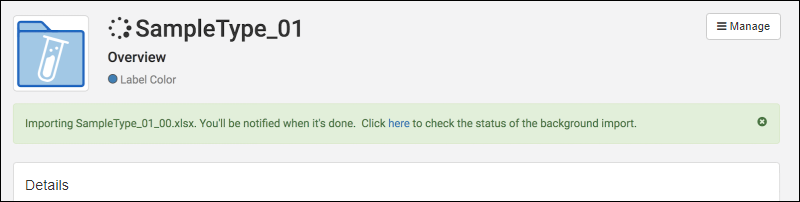

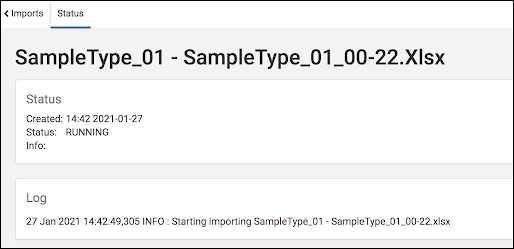

- Background Import:

  - Removal of previous size limits on data import

  - Progress reporting for asynchronous imports

  - In-app Notifications when background imports are complete

Release 21.1, January 2021

- Freezer Management

  - Match your digital storage to your physical storage

  - Store and locate samples with ease

  - Monitor usage and capacity in graphical dashboards

  - Track volume, freeze/thaw counts, and comments during check in/out events

Release 20.12, December 2020

- Detailed audit logging now shows only what has changed when data is updated.

Release 20.11, November 2020

- Samples and sources created together are batched, enabling selection of "just created" items.

Release 20.10, October 2020

Release 20.9, September 2020

- Label colors are shown in the samples section of the main dashboard.

- In anticipation of future support for Freezer Management, underlying functionality like the ability to access storage locations from the main menu has been added.

Release 20.8, August 2020

- Sample Types can have custom Label Color assignments to help users differentiate them. (docs)

- In anticipation of future support for Freezer Management, underlying functionality like the ability to see the storage location of a sample has been added. These facilities are not yet visible in the application interface.

Release 20.7, July 2020

- Improved search experience. Filter and refine search results. (docs)

Release 20.6, June 2020

- Bug fixes and small improvements

Release 20.5, May 2020

- Use Sample Timelines to track all events involving a given sample.

- Detailed audit logging has been improved for samples, under the new heading "Sample Timeline Events."

- Sample Types can be created by inferring fields from a file, or by defining fields manually. Source types offer the same convenience.

- Editing of sample parents is now available.

- The definition of Sample Types can now include "Source Alias" columns, similar to parent aliases already available.

Release 20.4, April 2020

- The creation interface for Sample Types has been merged into a single page showing both properties and fields. This makes it easier to create naming expressions that use fields in your Sample Type.

- Define Sources for your samples. The source of a sample could be an individual or a cell line or a lab. Tracking metadata about the source of samples, both biological and physical, can unlock new insights.

Release 20.3, March 2020

- Samples can be added to a workflow job during job creation. You no longer need to start a job after selecting samples of interest, but can add or update the samples directly within the job editing interface.

- Removing unnecessary fields is easier with an icon shown in the collapsed field view.

February 2020

- LabKey Sample Manager is Launched!

The symbol indicates a feature available in the Professional Edition of Sample Manager or with a Premium Edition of LabKey Server.

Release Notes: LabKey LIMS

- Generate customizable reports with ease.

- Add automation with transformation scripts for assay data.

Release 26.2, February 2026

- Column widths now adjust dynamically, allowing more columns to be visible at once with less horizontal scrolling. (docs)

- Configured URL links can now be opened in a new browser tab for easier comparison and multitasking. (docs)

- Entities you don't have access to in lineage views are now shown as restricted rather than being omitted, preserving full context without exposing details. (docs)

- Sample Status is available as a filter for "All Sample Types" in Sample Finder. (docs)

Release 26.1, January 2026

- Support for multiple unit types provides improved inventory and material management. (docs)

- Move workflow jobs to different folders to better reflect changes in projects or organization. (docs)

- Workflow tasks now support sample filters, allowing you to control which samples are included at each step. (docs)

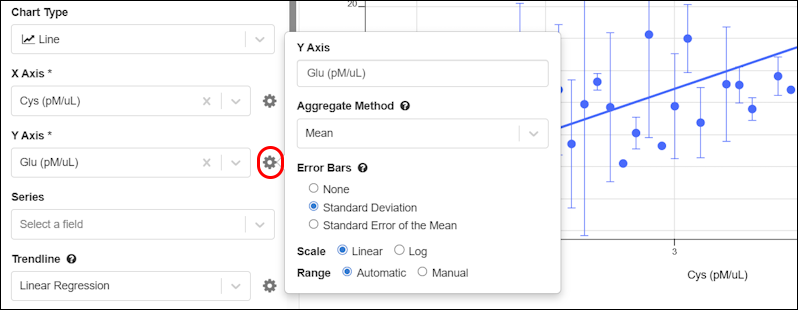

- Improved plot customization with new layout, axis, size, color, and per-series line controls. (docs)

- Client APIs can query and update samples using the RowId value; using the LSID value is no longer required.

- Sample names (SampleId) can be updated via a file, when RowId is provided.

Release 25.12, December 2025

- Amount and Units Fields - Improvements have been made to ensure that the Amounts & Units fields function as paired fields. (docs)

- Negative Amount Values Disallowed - Sample Manager now enforces that the Amounts field cannot have a negative value. (docs)

- Identifying Fields - Identifying fields are now shown in more assay import scenarios. (docs)
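Taken together, the paired Amount/Units rule (introduced in 25.10) and the non-negative rule (25.12) amount to a simple validation check. The Python sketch below is illustrative only. The function name `validate_amount`, the error messages, and the treatment of blank units as empty are assumptions, not Sample Manager's actual validation code.

```python
from typing import List, Optional


def validate_amount(amount: Optional[float], units: Optional[str]) -> List[str]:
    """Hypothetical check mirroring the paired-field and non-negative rules."""
    errors: List[str] = []
    has_amount = amount is not None
    # Assumption: a blank or whitespace-only units string counts as empty.
    has_units = units is not None and units.strip() != ""
    # Amount and Units must be provided together or both left empty.
    if has_amount != has_units:
        errors.append("Amount and Units must both be provided or both be empty.")
    # Negative amounts are rejected.
    if has_amount and amount < 0:
        errors.append("Amount cannot be negative.")
    return errors


print(validate_amount(2.5, "mL"))
print(validate_amount(-1, None))
```

Collecting every error in one pass lets a single row report both problems at once (a missing unit and a negative amount) instead of failing on the first one.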

Release 25.11, November 2025

- Audit log captures the method used to insert, update, and delete records. (docs)

- When an ELN notebook is recalled by an administrator, the author will now receive an email notification, improving visibility and timely follow-up.

- The Customize Grid View and Filter pop-up dialogs now list fields alphabetically, making it faster and more intuitive to find and select fields.

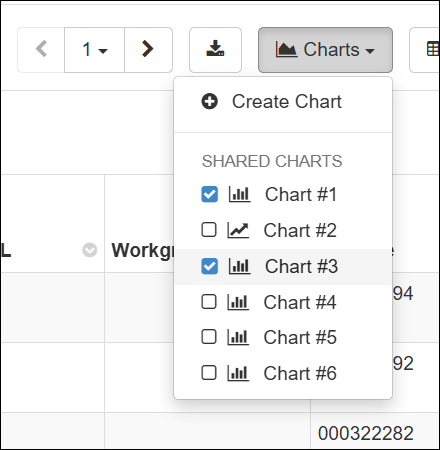

- Error bars are available on Bar and Line charts. (docs)

- Multiple charts can be displayed above data grids. Select up to 5 charts to display. (docs)

Release 25.10, October 2025

- Amounts and Units Changes - Amount and Unit fields are now enforced as a pair: both must be completed together or left empty. (docs)

- Required Fields in Workflow Jobs - Required fields in workflow jobs are now enforced during job creation, instead of during job completion. (docs)

- Identifying Fields - Administrators can now set up to 6 identifying fields. (docs)

Release 25.9, September 2025

- Improved Audit Logging Behavior - The LabKey Client APIs will now respect the audit level configured by the system, improving compliance and simplifying development. When both the system and API parameters specify an auditing level, the higher, more detailed level is applied. (docs)

- Several improvements were made to overall system reliability and performance.
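The audit-level rule in 25.9 works like this: the API call falls back to the system setting, and when both specify a level, the more detailed one wins. The Python sketch below captures that rule. The three level names and their numeric ordering are assumptions for illustration, and `effective_audit_level` is a hypothetical helper, not part of the LabKey Client APIs.

```python
from enum import IntEnum
from typing import Optional


class AuditLevel(IntEnum):
    # Assumed levels, ordered from least to most detailed.
    NONE = 0
    SUMMARY = 1
    DETAILED = 2


def effective_audit_level(system: AuditLevel, requested: Optional[AuditLevel]) -> AuditLevel:
    """Hypothetical resolution of system vs. API-requested audit levels."""
    # No level passed by the API call: the system setting applies.
    if requested is None:
        return system
    # Both specified: the higher (more detailed) level is applied.
    return max(system, requested)


print(effective_audit_level(AuditLevel.DETAILED, AuditLevel.NONE).name)
```

Using an ordered `IntEnum` makes "more detailed" a plain comparison, so `max` selects the stricter setting. An API caller can therefore raise the audit level but never lower it below the system configuration.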

Release 25.8, August 2025

- Improved ELN Editing: ELN editors now get faster feedback when pasting images into an ELN: files pasted into an ELN now fail immediately if they can't be loaded. SM-VAL-25.7-B (docs)

- The CheckedOut date/time stamp is now an available column in sample grids. SM-VAL-25.7-D

- You can now view all audit events for a transaction in one place. SM-VAL-25.8-A (docs)

- The audit log now records original file names when duplicates are automatically renamed. SM-VAL-25.8-B (docs)

Release 25.7, July 2025

- Improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Release 25.7.8, September 2025

- Selection order is retained when editing in a grid. SM-VAL-25.7-A

- Improved ELN Editing: ELN editors now get faster feedback when pasting images into an ELN: files pasted into an ELN now fail immediately if they can't be loaded. SM-VAL-25.7-B (docs)

- Improved import feedback: When attachment fields are supplied with data in a file import or file update for Sources, users will be provided an error message that Attachment data cannot be provided via a file. SM-VAL-25.7-C

- The CheckedOut date/time stamp is now an available column in sample grids. SM-VAL-25.7-D (docs)

- We have addressed an issue with moving assay runs. Assay runs that have multiple file fields now keep their files correctly associated after a move. SM-VAL-25.7-E

- We have addressed an issue with cross-sample-type import or cross-folder sample import, where the CheckedOut column was being ignored. SM-VAL-25.7-F

- We have addressed an issue with cross-sample-type import and cross-folder sample import, where Yes/No text fields were being inadvertently converted to Boolean values. SM-VAL-25.7-G

- We have addressed an issue where samples being removed from storage could not be assigned a Locked sample status type. SM-VAL-25.7-H

Release 25.6, June 2025

- Lineage details can be used in aliquot naming patterns. SM-VAL-25.6-A (docs)

- Users can enter a reason when they make changes to a Sample Type, Source Type, or Assay Design. SM-VAL-25.6-B (docs)

- Fields of type "Sample" can be set to validate that values already exist in the system. SM-VAL-25.6-C (docs)

- Several improvements were made to address overall system reliability and performance.

Release 25.5, May 2025

- Several improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Release 25.4, April 2025

- Several improvements were made to address overall system reliability and performance.

- Continued investment in automated testing and internal quality checks to support ongoing feature development.

Release 25.3, March 2025

Maintenance Release 25.3.2, April 2025

- Bulk edit grids now show amounts as entered, regardless of selected units.

- Field names longer than 40 characters are now supported, though not recommended.

Release 25.3.0, March 2025

- You can now use numeric positions for boxes, plates, and tube racks (instead of xy coordinates) when that will better match your lab. SM-VAL-25.3-A (docs)

- Improved support for using special characters in column names and data. SM-VAL-25.3-B (docs)

- Our content security policy (CSP) has been updated to enforce strong settings that block serious cybersecurity threats. Administrators can add allowed external resources if needed. SM-VAL-25.3-C (docs)

Release 25.2, February 2025

- Assay transform scripts can be configured to run when data is imported, updated, or both. (docs)

- Use conditional formatting to selectively highlight field values in grids. (docs)

Release 25.1, January 2025

- A trendline option has been added to the chart builder for Line charts. (docs)

- Customize the downloadable template file for Samples, Sources, and Assay Designs. (docs)

Release 24.11, November 2024

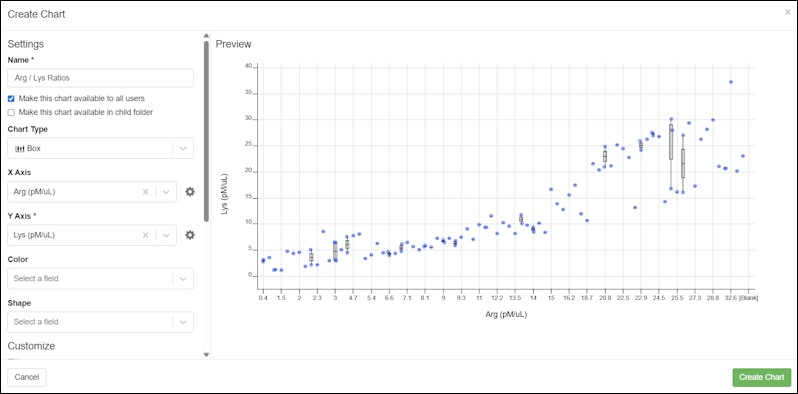

- The Chart Builder can be used from within the application to add and edit charts on grids. (docs)

Release 24.10, October 2024

Release Notes: Biologics LIMS

Biologics LIMS includes all features available in LabKey LIMS, plus:

- Bioregistry and classification engine

- Media management

- Molecule property calculator

- Plate and plate set support

- Antibody screening and characterization

Release 26.2, February 2026

- GenBank import improved: nearly all information in GenBank files is captured on import, including the original file.

- Improved Molecule creation: Select protein sequences to kick off the molecule creation process.

- Column widths now adjust dynamically, allowing more columns to be visible at once with less horizontal scrolling. (docs)

- Configured URL links can now be opened in a new browser tab for easier comparison and multitasking. (docs)

- Entities you don't have access to in lineage views are now shown as restricted rather than being omitted, preserving full context without exposing details. (docs)

- Sample Status is available as a filter for "All Sample Types" in Sample Finder. (docs)

Release 26.1, January 2026

- Support for multiple unit types provides improved inventory and material management. (docs)

- Move workflow jobs to different folders to better reflect changes in projects or organization. (docs)

- Workflow tasks now support sample filters, allowing you to control which samples are included at each step. (docs)

- Improved plot customization with new layout, axis, size, color, and per-series line controls. (docs)

- Client APIs can query and update samples using the RowId value; using the LSID value is no longer required.

- Sample names (SampleId) can be updated via a file, when RowId is provided.

Release 25.12, December 2025

- Amount and Units Fields - Improvements have been made to ensure that the Amounts & Units fields function as paired fields. (docs)

- Negative Amount Values Disallowed - Sample Manager now enforces that the Amounts field cannot have a negative value. (docs)

- Identifying Fields - Identifying fields are now shown in more assay import scenarios. (docs)

Release 25.11, November 2025

- Audit log captures the method used to insert, update, and delete records. (docs)

- When an ELN notebook is recalled by an administrator, the author will now receive an email notification, improving visibility and timely follow-up.

- The Customize Grid View and Filter pop-up dialogs now list fields alphabetically, making it faster and more intuitive to find and select fields.

- Error bars are available on Bar and Line charts. (docs)

- Multiple charts can be displayed above data grids. Select up to 5 charts to display. (docs)

Release 25.3, March 2025

- Rapidly find the plates and experiments in which samples have been used, and vice versa.

- Automatically generate analytics, such as regressions and statistics, to accelerate your work.

Release 25.2, February 2025

- Support for advanced plate layouts using dilutions.

- Use the "Replicate Group" column to denote a plate well as a replicate instead of setting the well's type to "Replicate".

- Replicate wells have a type of "Sample", and their "Replicate Group" column must be filled in.

- Add Samples to an existing Plate Set.

- Navigate from a plate set to any notebooks that reference it.

- Editing outlier exclusions reruns any transform scripts that are configured to run on update.

Release 25.1, January 2025

- Users can now specify hit selection filter criteria on Assay fields. When a run is imported or edited, the hit selections for the assay results are recomputed and automatically applied based on these criteria.

- Navigate from a sample to the plate(s) it has appeared on.

- Perform many types of linear regression analysis and chart them.

- Exclude outlier plate-based assay data points and have that reflected in calculations and charts.

Release 24.12, December 2024

- Plate sets can be referenced from an Electronic Lab Notebook.

Release 24.11, November 2024

Major antibody discovery and characterization updates, including:

- Campaign modeling with plate set hierarchy support.

- Plan plates more easily with graphical plate design and templating.

- Automate routine analyses from raw data collected.

- Perform hit selection from multiple, integrated results across plates and data types.

- Generate instructions for liquid handlers and other instruments.

- Automatically integrate multi-plate results including interplate replicate aggregation.

- Dive deeper into plated materials to understand their characteristics and relationships from plates.

Release 24.10, October 2024

- Charts are now included in LabKey LIMS, making them a feature set "inherited" from other product tiers. (docs)

Release 24.7, July 2024

- A new menu has been added for exporting a chart from a grid. (docs)

Release 23.12, December 2023

- The Molecule physical property calculator offers additional selection options and improved accuracy and ease of use. (docs)

Release 23.11, November 2023

Release 23.9, September 2023

- Charts, when available, are now rendered above grids instead of within a popup window. (docs)

Release 23.4, April 2023

- Molecular Physical Property Calculator is available for confirming and updating Molecule variations. (docs)

- Lineage relationships among custom registry sources can be represented. (docs)

- Users of the Enterprise Edition can track amounts and units for raw materials and mixture batches. (docs | docs)

Release 23.3, March 2023

- Potential Backwards Compatibility Issue: In 23.3, we added the materialExpDate field to support expiration dates for all samples. If you happen to have a custom field by that name, you should rename it prior to upgrading to avoid loss of data in that field.

- Note that the built-in "expirationDate" field on Raw Materials and Batches will be renamed "MaterialExpDate". This change will be transparent to users because the renamed fields will still be labeled "Expiration Date".
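If you manage many sample types, the pre-upgrade check for a conflicting materialExpDate field can be scripted. The sketch below is a hypothetical helper, not a LabKey API: it assumes you have already gathered each sample type's custom field names into a Python dictionary (how you obtain them is outside its scope), and it compares names case-insensitively as a cautious assumption.

```python
# Hypothetical pre-upgrade check: flag custom fields that would collide with the
# built-in materialExpDate field added in release 23.3.
RESERVED_FIELD = "materialExpDate"


def find_conflicting_fields(fields_by_type: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return custom field names matching materialExpDate, grouped by sample type.

    The comparison ignores case; this is an assumption made to err on the safe side.
    """
    conflicts: dict[str, list[str]] = {}
    for type_name, field_names in fields_by_type.items():
        clashes = [name for name in field_names if name.casefold() == RESERVED_FIELD.casefold()]
        if clashes:
            conflicts[type_name] = clashes
    return conflicts


# Example with made-up sample types and field names.
custom_fields = {
    "Blood": ["Volume", "MaterialExpDate"],
    "Tissue": ["Weight", "Organ"],
}
print(find_conflicting_fields(custom_fields))
```

Any sample type the helper reports would need its field renamed before upgrading, as the note above recommends.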

Release 23.2, February 2023

- Protein Sequences can be reclassified and reannotated in cases where the original classification was incorrect or the system has evolved. (docs)

- Lookup views allow you to customize what users will see when selecting a value for a lookup field. (docs)

- Users of the Enterprise Edition may want to use this feature to enhance details shown to users in the "Raw Materials Used" dropdown for creating media batches. (docs)

Release 23.1, January 2023

- Heatmap and card views of the bioregistry, sample types, and assays have been removed.

- The term "Registry Source Types" is now used for categories of entities in the Bioregistry. (docs)

Release 22.12, December 2022

- Projects were added to the Professional Edition of Sample Manager, making this a common feature shared with other tiers.

Release 22.11, November 2022

- Improvements in the interface for managing Projects. (docs)

- New documentation:

Release 22.10, October 2022

- Improved interface for creating and managing Projects in Biologics. (docs)

Release 22.9, September 2022

- When exploring Media of interest, you can easily find and review any associated Notebooks from a panel on the Overview tab. (docs)

Release 22.8, August 2022

- Search for data across projects in Biologics. (docs)

Release 22.7, July 2022

- Biologics subfolders are now called 'Projects'; the ability to categorize notebooks now uses the term 'tags' instead of 'projects'. (docs | docs)

Release 22.6, June 2022

- New Compound Bioregistry type supports Simplified Molecular Input Line Entry System (SMILES) strings, their associated 2D structures, and calculated physical properties. (docs)

- Define and edit Bioregistry entity lineage. (docs)

- Bioregistry entities include a "Common Name" field. (docs)

Release 22.3, March 2022

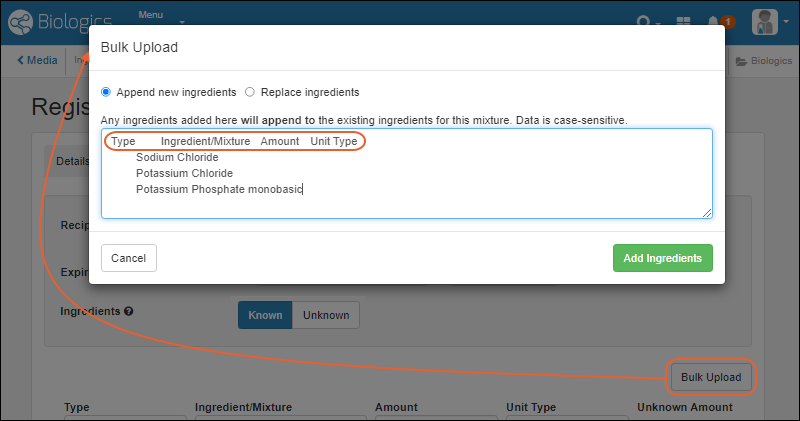

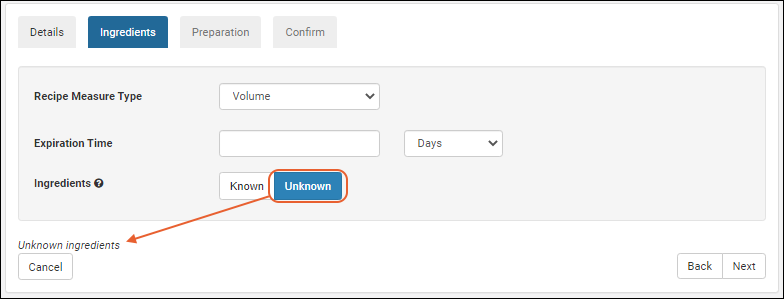

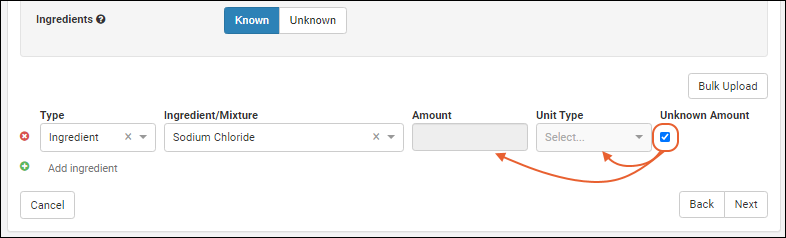

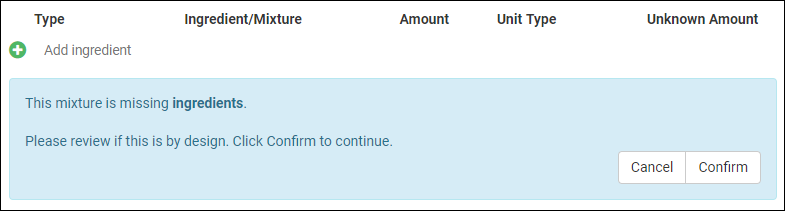

- Mixture import improvement: choose between replacing or appending ingredients added in bulk. (docs)

Release 21.12, December 2021

Release 21.11, November 2021

- Apply a container-specific prefix to all bioregistry entity and sample naming patterns. (docs)

Release 21.10, October 2021

- Customize the definitions of data classes (both bioregistry and media types) within the application. (docs)

Release 21.9, September 2021

- Customize the names of entities in the bioregistry. (docs)

Release 21.7, July 2021

- The previous "Experiment" mechanism has been removed. Use workflow jobs instead.

Release 21.5, May 2021

- Nucleotide and Protein Sequence values can be hidden from users who have access to read other data in the system. (docs)

Release 21.4, April 2021

- Specialty Assays can now be defined and integrated, in addition to Standard Assays. (docs)

- Creation of Raw Materials in the application uses an interface consistent with other sample type creation. (docs)

Release 21.3, March 2021

- Biologics LIMS begins using the same user interface as Sample Manager.

- Release notes for this and other versions prior to this change can be found in the documentation archives.

Release 17.1, March 2017

Sample Manager & LIMS Validated

Overview

Software validation is the process of ensuring that a software system meets its intended use and performs reliably within its operational environment. For regulated industries, validation provides documented evidence that the system consistently produces results that meet predetermined specifications and compliance requirements. Validation of Sample Manager/LabKey LIMS is an optional add-on to Sample Manager Professional Edition or LabKey LIMS and is performed by our third-party partner, CompliancePath. The Validation Pack is updated with each ESR release of Sample Manager/LabKey LIMS and includes Installation Qualification (IQ) and Operational Qualification (OQ) documentation.

This page will be updated with the most recent version of the release schedule.

25.11.8 Sample Manager/LabKey LIMS Validated Release Schedule

Please refer to this schedule for the 25.11.8 validated release of Sample Manager/LabKey LIMS.

- Week of January 19: Upgrade test environments to the early access release of 25.11.8

- Jan 26: LKSM Validation Pack released (originally scheduled for Feb 6)

- Feb 18: Target release date for LIMS Validation Pack (previously scheduled for Feb 13)

- Upgrade of production environments to 25.11.8

- Asia/Oceania - Feb 10 5:30-7:00am AEDT

- Africa/Europe - Feb 09 6:30-8:00pm GMT

- Americas - Feb 09 6:30-8:00pm EST
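Because the three production upgrade windows are stated in different time zones, lining them up in UTC can make them easier to compare. The sketch below assumes the year is 2026 (inferred from the page's update date) and uses the fixed offsets in effect on those dates (AEDT = UTC+11, GMT = UTC+0, EST = UTC-5) rather than a time zone database.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets in effect in February (assumption: the schedule refers to 2026).
AEDT = timezone(timedelta(hours=11), "AEDT")
GMT = timezone(timedelta(0), "GMT")
EST = timezone(timedelta(hours=-5), "EST")

# Production upgrade windows as listed in the schedule, in their local time zones.
WINDOWS = {
    "Asia/Oceania": (datetime(2026, 2, 10, 5, 30, tzinfo=AEDT), datetime(2026, 2, 10, 7, 0, tzinfo=AEDT)),
    "Africa/Europe": (datetime(2026, 2, 9, 18, 30, tzinfo=GMT), datetime(2026, 2, 9, 20, 0, tzinfo=GMT)),
    "Americas": (datetime(2026, 2, 9, 18, 30, tzinfo=EST), datetime(2026, 2, 9, 20, 0, tzinfo=EST)),
}


def to_utc(windows):
    """Convert each (start, end) window to UTC so regions can be compared directly."""
    return {
        region: (start.astimezone(timezone.utc), end.astimezone(timezone.utc))
        for region, (start, end) in windows.items()
    }


for region, (start, end) in to_utc(WINDOWS).items():
    print(f"{region:14} {start:%Y-%m-%d %H:%M} to {end:%Y-%m-%d %H:%M} UTC")
```

Under these assumptions, the Asia/Oceania and Africa/Europe windows fall at the same UTC time (18:30 to 20:00 on Feb 9), while the Americas window runs from 23:30 on Feb 9 to 01:00 on Feb 10 UTC.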

If you have any concerns, please contact us at help@labkey.com.

Last updated: 2026-02-05

Sample Manager & LabKey LIMS Software Validation & Release Schedule - General

This is the generalized release schedule for LabKey Sample Manager & LIMS validation.

Phase 1: Validation Planning and Initial Updates

Release: YY.MM.00

Goal: Align on validation scope and begin Validation Pack updates.

- Week 0: Kickoff: LabKey + CompliancePath meet to discuss approach, timelines, and scope.

- Week 1: LabKey upgrades CompliancePath server (pre-work for validation updates).

- Weeks 1-4: CompliancePath begins updating Validation Pack (URS, Risk Assessment, OQ updates).

Phase 2: Customer PQ Preparation & Staging Environment Updates

Releases: YY.MM.02 – YY.MM.06

Goal: Prepare customers for PQ by upgrading test environments and communicating the schedule.

- Weeks 2-6: LabKey sends communications to customers:

- Release notes for YY.MM.02–YY.MM.06

- Validation timeline & expectations

- Staging upgrade windows

- Rough plans for production upgrades

- Weeks 2-6: LabKey upgrades customer testing/staging environments.

Phase 3: Validation Pack Release & Production Upgrades

Release: YY.MM.08

Goal: Deliver final validation materials and complete production rollout.

- Week 8: LabKey upgrades CompliancePath and customer testing environments to YY.MM.08.

- Weeks 9-10: CompliancePath executes OQ testing and finalizes the Validation Pack updates.

- Week 10: CompliancePath releases the completed Validation Pack to LabKey and customers.

- Week 11: LabKey upgrades customer production environments to YY.MM.08.
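Because every step in the generalized schedule is expressed as a week offset from the Week 0 kickoff, projected dates follow from simple date arithmetic. The sketch below is a hypothetical planning helper, not a LabKey or CompliancePath tool; the kickoff date in the example is made up, and each milestone is mapped to the first day of its week.

```python
from datetime import date, timedelta

# Week offsets taken from the generalized schedule (Week 0 = kickoff).
MILESTONES = [
    (0, "Kickoff: LabKey + CompliancePath align on approach, timelines, and scope"),
    (1, "LabKey upgrades CompliancePath server"),
    (2, "Customer communications and staging upgrades begin (through week 6)"),
    (8, "CompliancePath and customer testing environments upgraded to YY.MM.08"),
    (9, "CompliancePath begins OQ testing (weeks 9-10)"),
    (10, "Completed Validation Pack released"),
    (11, "Customer production environments upgraded to YY.MM.08"),
]


def milestone_dates(kickoff: date) -> list[tuple[date, str]]:
    """Return the start date of each milestone's week, counted from the kickoff date."""
    return [(kickoff + timedelta(weeks=week), label) for week, label in MILESTONES]


# Example with a hypothetical kickoff on Monday, January 5, 2026.
for when, label in milestone_dates(date(2026, 1, 5)):
    print(when.isoformat(), label)
```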

Get Started with Sample Manager

Welcome to LabKey Sample Manager

The resources linked here will help you get started using LabKey Sample Manager for sample tracking. First, complete the steps in the Exploring Sample Manager guide to learn to add sample information, define lineage, and understand the processes you will follow to find and use your data.

Getting Started, Step by Step

More Video Overviews and Demos

Each will open in a new tab.

- Upgrade your lab from spreadsheets to Sample Manager

- Spring cleaning with Sample Manager

- See how Sample Manager can level-up your lab work

- Getting started with Sample Manager

Configure Freezers and Other Storage

Matching your physical storage to virtual storage locations in the app makes it easy to know where to find the samples you need.

Add Sample Inventories

Getting your sample information loaded is the heart of using Sample Manager. Define the structure of the data that describes each "type" of sample in your system. Once the types are defined, samples can be created within the application or imported from a spreadsheet.

- Create a Sample Type

- Add Samples

- Import Samples from File

- Import Samples with Storage Locations

- Import Samples with Lineage

Associate Sources

If your samples have physical or biological sources that you want to track, you can learn about adding them and associating them with samples in these topics:

Define Assays (Premium Feature)

The data you obtain from running instrument tests on your samples is uploaded to LabKey Sample Manager as assay data. These topics will guide you in designing assays and uploading your data.

Outline Workflow (Premium Feature)

Your laboratory workflow can be managed by creating workflow jobs for the sequences of tasks your team performs. Add your users, set permissions, and organize your jobs and templates following these topics:

Use Electronic Lab Notebooks (Premium Feature)

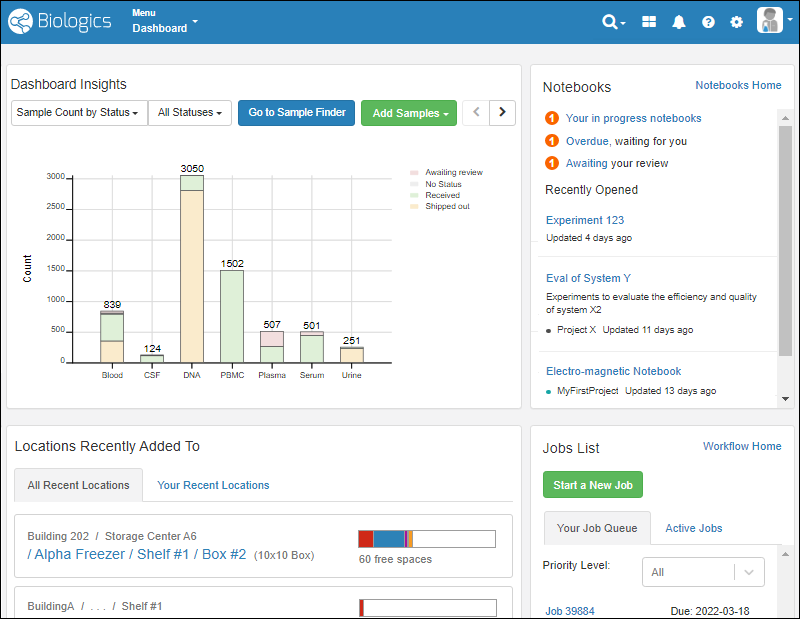

Collaborate and record your work in data-connected Electronic Lab Notebooks. Use templates to create many similar notebooks, and manage individual notebooks through a review and signing process.

Learn more in this section:

Sample Manager Dashboard

- Release Announcement Banner

- Dashboard Insights

- Sample Finder

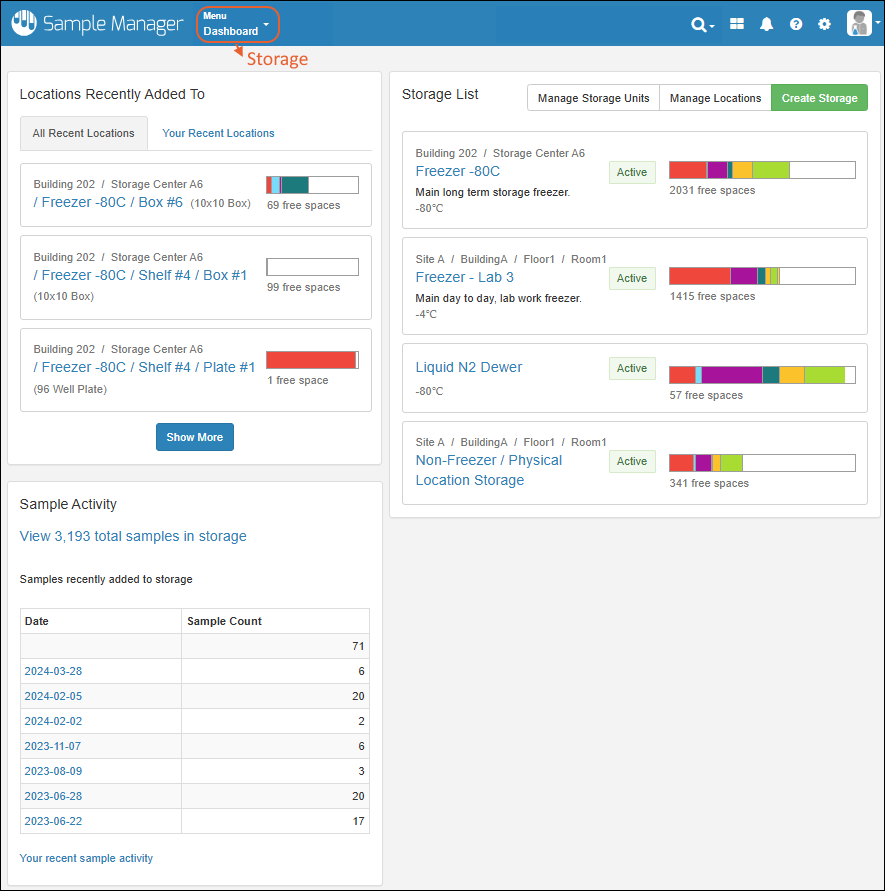

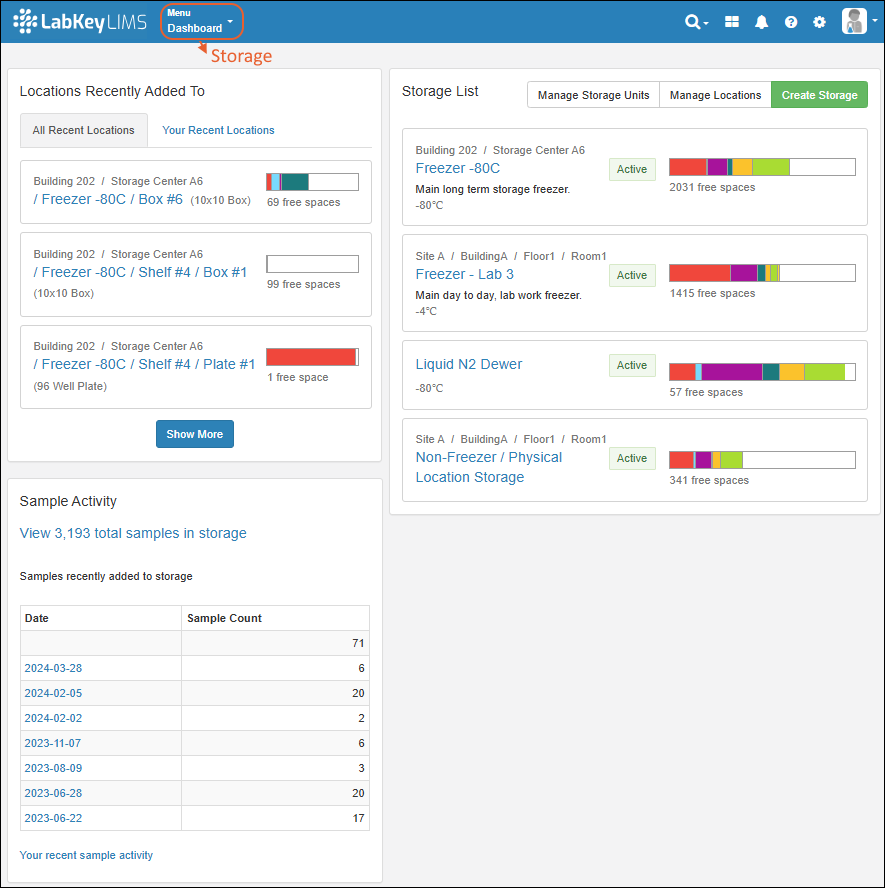

- Locations Recently Added To

- Main Menu

- Header Menus

- Notebooks (available in the Professional Edition of Sample Manager)

- Jobs List (available in the Professional Edition of Sample Manager)

Release Announcement Banner

At the top of the dashboard, you'll see a banner announcing the latest release. Click "See what's new" to open the release notes.

Dashboard Insights

See the current status of the system, with several display options. By default, you see the total count of samples of each Sample Type, shaded by the label color you assign.

- Sample Count by Status (Default)

- Sample Count by Sample Type

- Assay Run Count by Assay (Professional Edition Feature)

- Sample Count by Status offers:

- All Statuses

- With a Status

- No Status

- Sample Count by Sample Type and Assay Run Count by Assay let you choose the timeframe over which samples or assay runs are counted. Options:

- All

- In the Last Year

- In the Last Month

- In the Last Week

- Today
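The status and timeframe options listed above act as filters on what gets counted. As a rough illustration only, the hypothetical sketch below models them over an in-memory list of samples. The `Sample` class, its fields, and the exact lookback windows (365, 30, and 7 days, and the current date for "Today") are assumptions, not a description of how Sample Manager computes its counts.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Sample:
    sample_type: str
    status: Optional[str]  # None when no status has been assigned
    created: date


# Hypothetical lookback windows; the application's actual cutoffs may differ.
TIMEFRAMES = {
    "All": None,
    "In the Last Year": timedelta(days=365),
    "In the Last Month": timedelta(days=30),
    "In the Last Week": timedelta(days=7),
    "Today": timedelta(days=0),
}


def count_by_type(samples: list[Sample], timeframe: str, today: date) -> Counter:
    """Count samples per Sample Type, keeping only those created within the timeframe."""
    window = TIMEFRAMES[timeframe]
    return Counter(
        s.sample_type for s in samples if window is None or s.created >= today - window
    )


def count_by_status(samples: list[Sample], mode: str) -> Counter:
    """Count samples per status for "All Statuses", "With a Status", or "No Status"."""
    if mode == "With a Status":
        selected = [s for s in samples if s.status is not None]
    elif mode == "No Status":
        selected = [s for s in samples if s.status is None]
    elif mode == "All Statuses":
        selected = list(samples)
    else:
        raise ValueError(f"unknown status option: {mode}")
    return Counter(s.status or "No Status" for s in selected)


# Example with made-up samples.
samples = [
    Sample("Blood", "Available", date(2026, 3, 1)),
    Sample("Blood", None, date(2025, 1, 1)),
    Sample("Tissue", "Consumed", date(2026, 3, 19)),
]
today = date(2026, 3, 19)
print(count_by_type(samples, "In the Last Month", today))
print(count_by_status(samples, "With a Status"))
```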

Exclude Sample Types from Dashboard Insights

If desired, an administrator can exclude selected Sample Types from the Dashboard Insights panel. For example, if your repository includes a "static" set of inventory items that never changes, you may want to hide those types so the Insights panel is more useful for the types in active use.

Select the Administration Settings menu > Application Settings and scroll down to the Dashboard section. Note that if you are using Folders in Sample Manager, this option is on the Folders tab. In the Dashboard section, uncheck any Sample Types you want to exclude from the Dashboard Insights panel for this folder.

Sample Finder

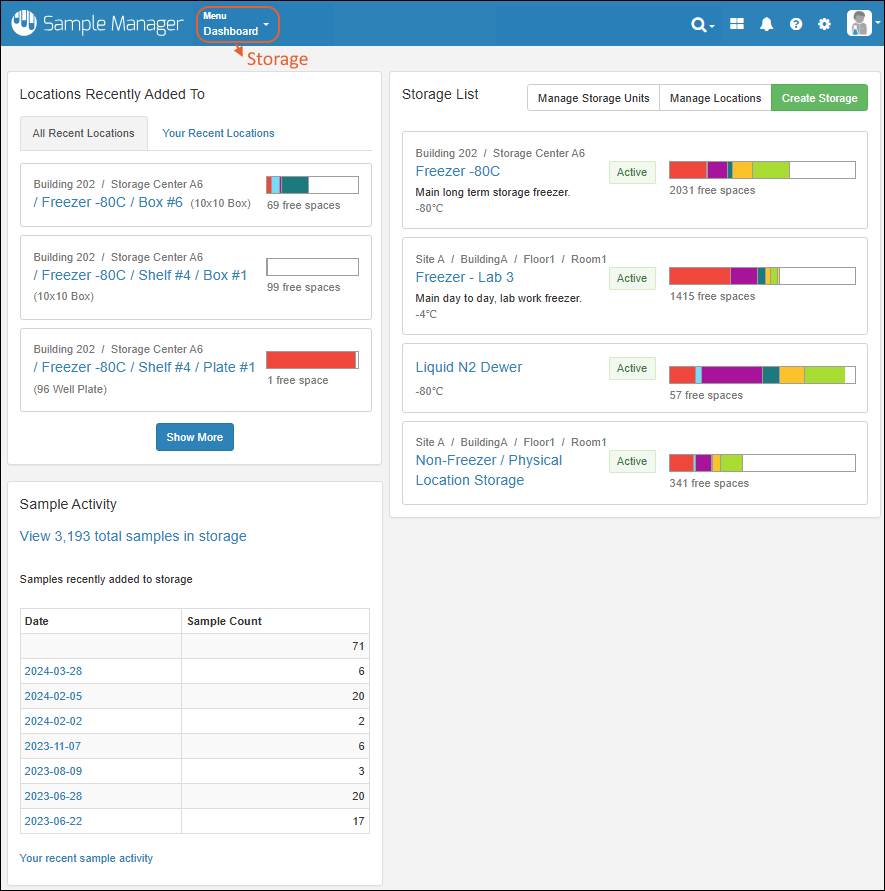

Click Go to Sample Finder in the center of the dashboard to search for samples by properties of their parents and sources. Learn more in this topic:

Locations Recently Added To

Storage is shown on the dashboard in a Locations Recently Added To widget.

- The "All Recent Locations" tab is the default, showing storage locations that have had samples added to them most recently.

- You can also choose "Your Recent Locations" to see where you last added samples, giving you a quick way to jump back to where you were.

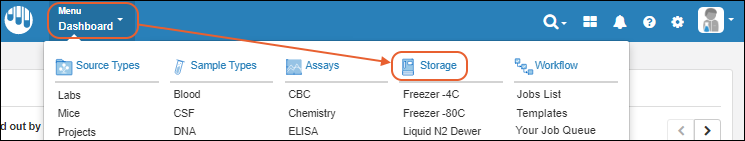

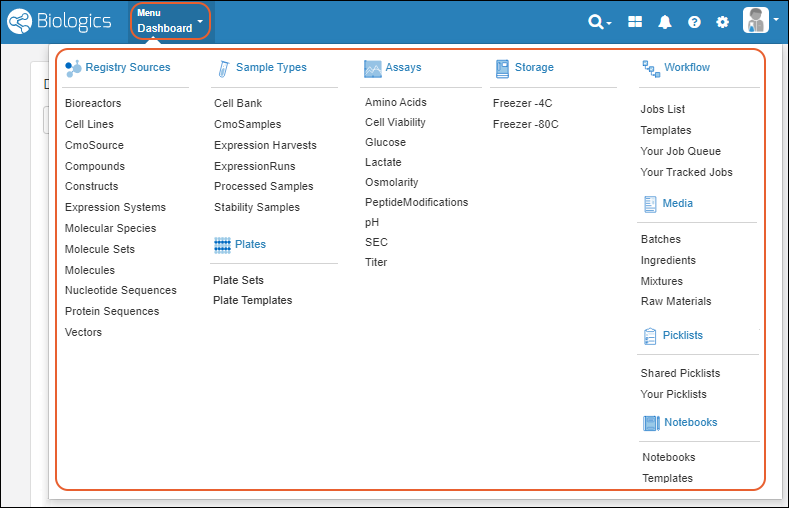

Main Menu

Throughout the application, you can access a main menu providing quick links to everything in the system.

- Source Types

- Sample Types

- Storage

- Picklists

- Assays (available in the Professional Edition of Sample Manager)

- Workflow (available in the Professional Edition of Sample Manager)

- Notebooks (available in the Professional Edition of Sample Manager)

Header Menus

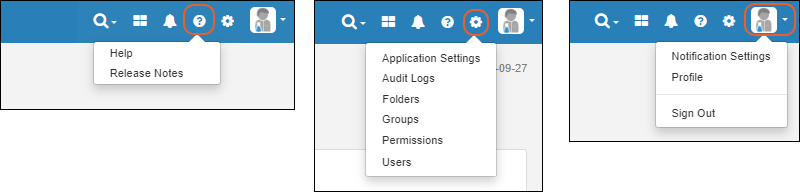

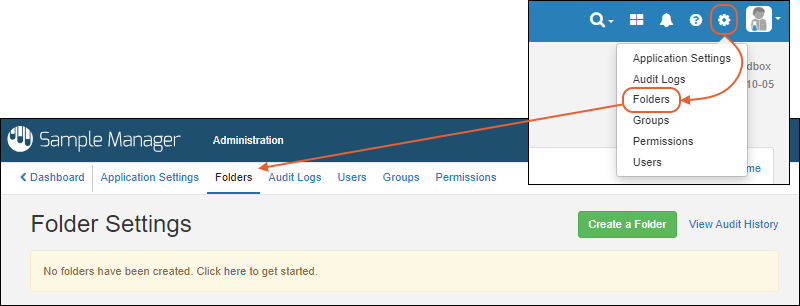

In the upper right, menus provide quick access to resources and actions.

- Search

- Product Selection: Shown when using a Premium Edition of LabKey Server.

- Notifications

- Documentation

- Help: Opens the documentation home page for the application.

- Release Notes

- Administration Settings

- Application Settings: Includes Display Settings, Notebook Settings, BarTender Configuration, ID/Name Settings, and Manage Sample Statuses.

- Audit Logs