This topic explains how to best prepare your data for import so you can meet any requirements set up by the target data structure.

LabKey Server provides a variety of different data structures for different uses: Assay Designs for capturing instrument data, Datasets for integrating heterogeneous clinical data, Lists for general tabular data, etc. Some of these data structures place strong constraints on the nature of the data to be imported, for example Datasets make uniqueness constraints on the data; other data structures, such as Lists, make few assumptions about incoming data.

Design Choices: Column Names and Field Types

Choose Column Data Types

When deciding how to import your data, consider the data type of columns to match your current and future needs. Considerations include:

- Available Types: Review the list at the top of this topic.

- Type Conversions are possible after data is entered, but only within certain compatibilities. See the table of available type changes here.

- Number type notes:

- Integer: A 4-byte signed integer that can hold values ranging -2,147,483,648 to +2,147,483,647.

- Decimal (Floating Point): An 8-byte double precision floating point number that can hold very large and very small values. Values can range approximately 1E-307 to 1E+308 with a precision of at least 15 digits. As with most standard floating point representations, some values cannot be converted exactly and are stored as approximations. It is often helpful to set on Decimal fields a display format that specifies a fixed or maximum number of decimal places to avoid displaying approximate values.

General Advice: Avoid Mixed Data Types in a Column

LabKey tables (Lists, Datasets, etc.) are implemented as

database tables. So your data should be prepared for insertion into a database. Most importantly, each column should conform to a database data type, such as Text, Integer, Decimal, etc. Mixed data in a column will be rejected when you try to upload it.

WrongThe following table mixes Boolean and String data in a single column.

| ParticipantId | Preexisting Condition |

|---|

| P-100 | True, Edema |

| P-200 | False |

| P-300 | True, Anemia |

Right Split out the mixed data into separate columns

| ParticipantId | Preexisting Condition | Condition Name |

|---|

| P-100 | True | Edema |

| P-200 | False | |

| P-300 | True | Anemia |

General Advice: Avoid Special Characters in Column Names

Column names should avoid special characters such as !, @, #, $, etc. We also recommend using underscores instead of spaces, newlines, and any other "separator" characters. Column names should contain only letters, numbers, spaces, and underscores; and should begin only with a letter or underscore.

WrongThe following table has special characters in the column names.

| Participant # | Preexisting Condition? |

|---|

| P-100 | True |

| P-200 | False |

| P-300 | True |

RightThe following table removes the special characters and replaces spaces with underscores.

| Participant_Number | Preexisting_Condition |

|---|

| P-100 | True |

| P-200 | False |

| P-300 | True |

You can provide a separate

Label for a column name that includes special characters, or include a

Description for a longer hovertext tooltip if you need to provide additional information on column headers. Learn more in this topic:

Data Column Aliasing

Use data column aliasing to work with non-conforming data, meaning the provided data has different columns names or different value ids for the same underlying thing. Examples include:

- A lab provides assay data which uses different participant ids than those used in your study. Using different participant ids is often desirable and intentional, as it provides a layer of PHI protection for the lab and the study.

- Excel files have different column names for the same data, for example some files have the column "Immune Rating" and other have the column "Immune Score". You can define an arbitrary number of these import aliases to map to the same column in LabKey.

- The source files have a variety of names for the same visit id, for example, "M1", "Milestone #1", and "Visit 1".

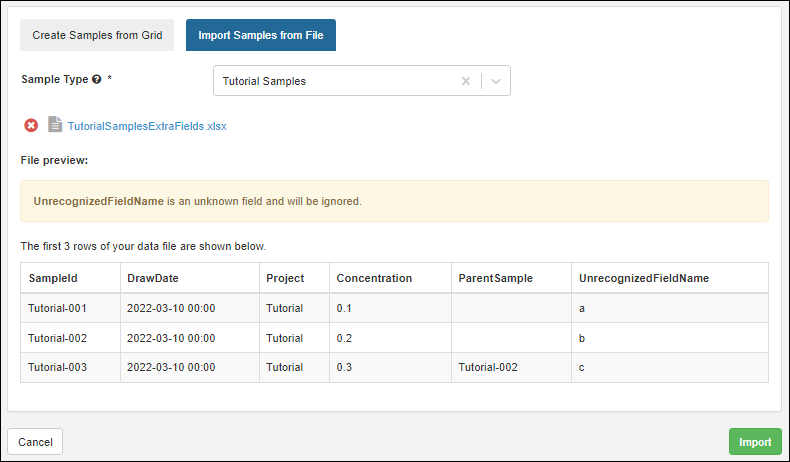

Import to Unrecognized Fields

When importing data, if there are unrecognized fields in your spreadsheet, meaning fields that are not included in the data structure definition, they will be ignored. In some situations, such as when importing sample data, you will see a warning banner explaining that this is happening:

Data Format Considerations

Handling Backslash Characters

If you have a TSV or CSV file that contains backslashes in any text field, the upload will likely ignore the backslash and either substitute it for another value or ignore it completely. This occurs because the server treats backslashes as escape characters, for example, \n will insert a new line, \t will insert a tab, etc.

To import text fields that contain backslashes, wrap the values in quotes, either by manual edit or by changing the save settings in your editor. For example:

should be:

Note that this does not apply to importing Excel files. Excel imports are handled differently, and text data within the cell is processed such that text is in quotes.

Clinical Dataset Details

Datasets are intended to capture measurements events on some subject, like a blood pressure measurement or a viral count at some point it time. So datasets are required to have two columns:

- a subject id

- a time point (either in the form of a date or a number)

Also, a subject cannot have two different blood pressure readings at a given point in time, so datasets reflect this fact by having uniqueness constraints: each record in a dataset must have a unique combination of subject id plus a time point.

WrongThe following dataset has duplicate subject id / timepoint combinations.

| ParticipantId | Date | SystolicBloodPressure |

|---|

| P-100 | 1/1/2000 | 120 |

| P-100 | 1/1/2000 | 105 |

| P-100 | 2/2/2000 | 110 |

| P-200 | 1/1/2000 | 90 |

| P-200 | 2/2/2000 | 95 |

RightThe following table removes the duplicate row.

| ParticipantId | Date | SystolicBloodPressure |

|---|

| P-100 | 1/1/2000 | 120 |

| P-100 | 2/2/2000 | 110 |

| P-200 | 1/1/2000 | 90 |

| P-200 | 2/2/2000 | 95 |

Demographic Dataset Details

Demographic datasets have all of the constraints of clinical datasets, plus one more: a given subject identifier cannot appear twice in a demographic dataset.

WrongThe following demographic dataset has a duplicate subject id.

| ParticipantId | Date | Gender |

|---|

| P-100 | 1/1/2000 | M |

| P-100 | 1/1/2000 | M |

| P-200 | 1/1/2000 | F |

| P-300 | 1/1/2000 | F |

| P-400 | 1/1/2000 | M |

RightThe following table removes the duplicate row.

| ParticipantId | Date | Gender |

|---|

| P-100 | 1/1/2000 | M |

| P-200 | 1/1/2000 | F |

| P-300 | 1/1/2000 | F |

| P-400 | 1/1/2000 | M |

Date Parsing Considerations

Whether to parse user-entered or imported date values as Month-Day-Year (as typical in the U.S.) or Day-Month-Year (as typical outside the U.S.) is set at the

site level. For example, 11/7/2020 is either July 11 or November 7; a value like 11/15/2020 is only valid Month-Day-Year (US parsing).

If you attempt to import date values with the "wrong" format, they will be interpreted as strings, so you will see errors similar to ""Could not convert value '11/15/20' (String) for Timestamp field 'FieldName'."

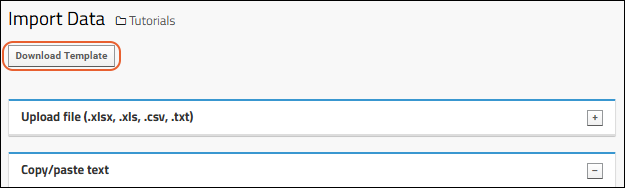

Use Import Templates

For the most reliable method of importing data, first obtain a template for the data you are importing. Most data structures will include a

Download Template button when you select any bulk import method, such as importing from a file.

Use the downloaded template as a basis for your import file. It will include all possible columns and will exclude unnecessary ones. You may not need to populate every column of the template when you import data.

Premium Feature AvailableSubscribers to Sample Manager, LabKey LIMS, and Biologics LIMS have access to download templates in even more places. Learn more in this Sample Manager documentation topic:

Learn more about Sample Manager here

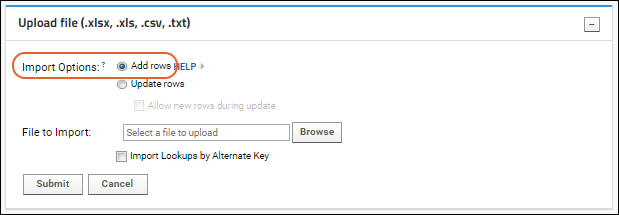

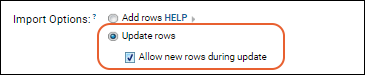

Import Options: Add, Update and Merge Data

When bulk importing data (via

> Import bulk data) the default

Import Option is

Add rows, for adding new rows only. If you include data for existing rows, the import will fail.

To

update data for existing rows, select the option to

Update rows. Note that update is not supported for Lists with auto-incrementing integer keys or Datasets with system-managed third keys.

If you want to

merge existing data updates with new rows, select

Update rows, then check the box to

Allow new rows during update. Note that merge is not supported for Lists with auto-incrementing integer keys or Datasets with system-managed third keys.

Learn more about updating and merging data in these topics:

Data Import Previews

In some contexts, such as

creating a list definition and populating it at the same time and

importing samples from file into Sample Manager or LabKey Biologics, you will see a few lines "previewing" the data you are importing.

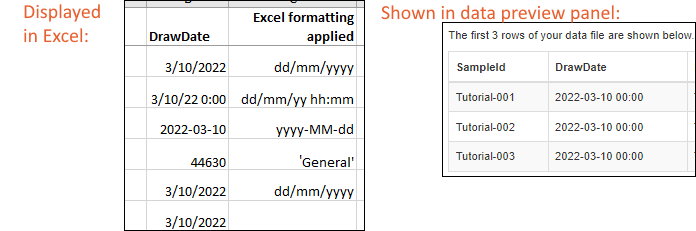

These data previews shown in the user interface do not apply field formatting from either the source spreadsheet or the destination data structure.

In particular, when you are importing Date and DateTime fields, they are always previewed in ISO format (yyyy-MM-dd hh:mm) regardless of source or destination formatting. The Excel format setting is used to infer that this is a date column, but is not carried into the previewer or the imported data.

For example, if you are looking at a spreadsheet in Excel, you may see the value with specific date formatting applied, but this is not how Excel actually stores the date value in the field. In the image below, the same 'DrawDate' value is shown with different Excel formatting applied. Date values are a numeric offset from a built-in start date. To see the underlying value stored, you can view the cell in Excel with 'General' formatting applied (as shown in the fourth line of the image below), though note that 'General' formatted cells will not be interpreted as date fields by LabKey. LabKey uses any 'Date' formatting to determine that the field is of type 'DateTime', but then all date values are shown in ISO format in the data previewer, i.e. here "2022-03-10 00:00".

After import,

display format settings on LabKey Server will be used to display the value as you intend.

OOXML Documents Not Supported

Excel files saved in the "Strict Open XML Spreadsheet" format will generate an .xlsx file, however this format is not supported by POI, the library LabKey uses for parsing .xlsx files. When you attempt to import this format into LabKey, you will see the error:

There was a problem loading the data file. Unable to open file as an Excel document. "Strict Open XML Spreadsheet" versions of .xlsx files are not supported.

As an alternative, open such files in Excel and save as the ordinary "Excel Workbook" format.

Learn more about this lack of support in

this Apache issue.

Related Topics