The Document Abstraction (or Annotation) Workflow supports the movement and tracking of documents through the following general process. All steps are optional for any given document and each part of the workflow may be configured to suit your specific needs:

Workflow

Different types of documents (for example, Pathology Reports and Cytogenetics Reports) can be processed through the same workflow, task list and assignment process, each using abstraction algorithms specific to the type of document. The assignment process itself can also be customized based on the type of disease discussed in the document.

Roles and Tasks

- NLP/Abstraction Administrator:

  - Configure terminology and set module properties.

  - Review the list of documents ready for abstraction.

  - Assign roles and tasks to others.

  - Manage project groups corresponding to the expected disease groups and document types.

  - Create document processing configurations.

- Abstractor:

  - Choose a document to abstract from your individual list of assignments.

  - Abstract the document. You can save and return to continue work in progress if needed.

  - Submit the abstraction for review, or for approval if no reviewer is assigned.

- Reviewer:

  - Review the list of documents ready for review.

  - Review abstraction results; compare results from multiple abstractors if provided.

  - Mark the document as ready to progress to the next stage: either approve or reject.

  - Review and potentially edit previously approved abstraction results.

Documents to be abstracted may contain protected health information (PHI). Protection of PHI is strictly managed by LabKey Server. With the addition of the nlp_premium, compliance, and complianceActivities modules, all access to documents, task lists, and other resources containing PHI can be gated by permissions and made subject to approval of terms of use specific to the user's intended activity. Further, all access that is granted, including viewing, abstracting, and reviewing, can be logged for audit or other review.

All sample screenshots and information shown in this documentation are fictitious.

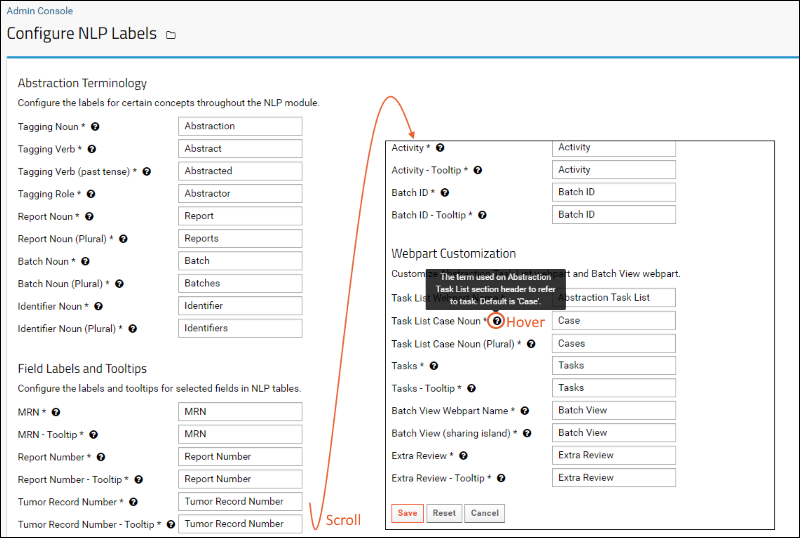

Configuring Terminology

The terminology used in the abstraction user interface can be customized to suit the specific words used in the implementation. Even the word "Abstraction" can be replaced with something your users are used to, such as "Annotation" or "Extraction." Changes to NLP terminology apply to your entire site.

An Abstraction Administrator can configure the terminology used as follows:

- Select (Admin) > Site > Admin Console.

- Under Premium Features, click NLP Labels.

- The default labels for concepts, fields, tooltips, and web part customization are listed.

- There may be additional customizable terms shown, depending on your implementation.

- Hover over any icon for more information about any term.

- Enter new terminology as needed, then click Save.

- To revert all terms to the original defaults, click Reset.

Terms you can customize:

- The concept of tagging or extracting tabular data from free text documents (examples: abstraction, annotation) and the name for the user(s) who do this work.

- The subject identifier (examples: MRN, Patient ID).

- The item being abstracted (examples: document, case, report).

- The document type, source, identifier, and activity.

The new terms you configure will be used in web parts, data regions, and UIs; in some cases admins will still see the "default" names, such as when adding new web parts.

To facilitate helping your users, you can also customize the tooltips that will be shown for the fields to use your local terminology.

Abstraction Workflow

Documents are first uploaded, then assigned, then pass through the many options in the abstraction workflow until completion.

The document itself passes through a series of states within the process:

- Ready for assignment: when automatic abstraction is complete, when automatic assignment was not completed, or when a reviewer requests re-abstraction

- Ready for manual abstraction: once an abstractor is assigned

- Ready for review: when abstraction is complete, if a reviewer is assigned

- (optional) Ready for reprocessing: if requested by the reviewer

- Approved

- If Additional Review is requested, an approved abstraction result is sent for secondary review to a new reviewer.

Passage of a document through these stages can be managed by a BPMN (Business Process Model and Notation) workflow engine. LabKey Server uses an Activiti Workflow to automatically advance the document to the correct state upon completion of the prior state. Users assigned as abstractors and reviewers can see lists of tasks assigned to them and mark them as completed when done.
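
To make the state progression above concrete, here is a minimal Python sketch of the document states as a small state machine. It is an illustration only, not LabKey's Activiti process definition: the event names (such as `abstractor_assigned`) are hypothetical, and the exact set of transitions is one reading of the state list above.

```python
from enum import Enum


class DocState(Enum):
    READY_FOR_ASSIGNMENT = "Ready for assignment"
    READY_FOR_MANUAL_ABSTRACTION = "Ready for manual abstraction"
    READY_FOR_REVIEW = "Ready for review"
    READY_FOR_REPROCESSING = "Ready for reprocessing"
    APPROVED = "Approved"


# Hypothetical event names (assumptions for illustration).
# Maps (current state, event) to the next state.
TRANSITIONS = {
    (DocState.READY_FOR_ASSIGNMENT, "abstractor_assigned"):
        DocState.READY_FOR_MANUAL_ABSTRACTION,
    (DocState.READY_FOR_MANUAL_ABSTRACTION, "submitted_with_reviewer"):
        DocState.READY_FOR_REVIEW,
    (DocState.READY_FOR_MANUAL_ABSTRACTION, "submitted_without_reviewer"):
        DocState.APPROVED,
    (DocState.READY_FOR_REVIEW, "approved"):
        DocState.APPROVED,
    (DocState.READY_FOR_REVIEW, "reabstraction_requested"):
        DocState.READY_FOR_ASSIGNMENT,
    (DocState.READY_FOR_REVIEW, "reprocessing_requested"):
        DocState.READY_FOR_REPROCESSING,
    (DocState.APPROVED, "additional_review_requested"):
        DocState.READY_FOR_REVIEW,
}


def advance(state, event):
    """Return the next state, or raise ValueError if the event is not allowed."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(
            f"event {event!r} is not allowed in state {state.value!r}"
        ) from None
```

For example, `advance(DocState.READY_FOR_REVIEW, "approved")` returns `DocState.APPROVED`, while an event that does not apply to the current state raises `ValueError` rather than silently moving the document.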

Assignment

Following the upload of the document and any provided metadata or automatic abstraction results, many documents will also be assigned for manual abstraction. The manual abstractor begins with any information garnered automatically and validates, corrects, and adds additional information to the abstracted results.

The assignment of documents to individual abstractors may be done automatically or manually by an administrator. An administrator can also choose to bypass the abstraction step by unassigning the manual abstractor, immediately forwarding the document to the review phase.

The Abstraction Task List web part is typically included in the dashboard for any NLP project, and shows each viewing user a tailored view of the particular tasks they are to complete. Typically a user will have only one type of task to perform, but if they play different roles, such as for different document types, they will see multiple lists.
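
The idea of a per-user task list can be sketched in a few lines of Python. This is not the web part's implementation; the `Task` fields and the `task_lists_for` helper are hypothetical names chosen for illustration. It only shows how a user holding more than one role ends up with more than one list.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    # All field names here are assumptions made for this sketch.
    document_id: str
    document_type: str
    role: str        # for example "abstractor" or "reviewer"
    assignee: str


def task_lists_for(user, tasks):
    """Group the tasks assigned to `user` by role, one list per role."""
    lists = defaultdict(list)
    for task in tasks:
        if task.assignee == user:
            lists[task.role].append(task)
    return dict(lists)
```

A user who abstracts one document type and reviews another would get two keys back, one per role; a user with a single role gets a single list.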

Abstraction

The assigned user completes a manual document abstraction following the steps outlined here:

Review

Once abstraction is complete, the document is "ready for review" (if a reviewer is assigned) and the task moves to the assigned reviewer. If the administrator chooses to bypass the review step, they can leave the reviewer task unassigned for that document.

Reviewers select their tasks from their personalized task list, but can also see other cases on the All Tasks list. In addition to reviewing new abstractions, they can review and potentially reject previously approved abstraction results. Abstraction administrators may also perform this second level review. A rejected document is returned for additional steps as described in the table here.

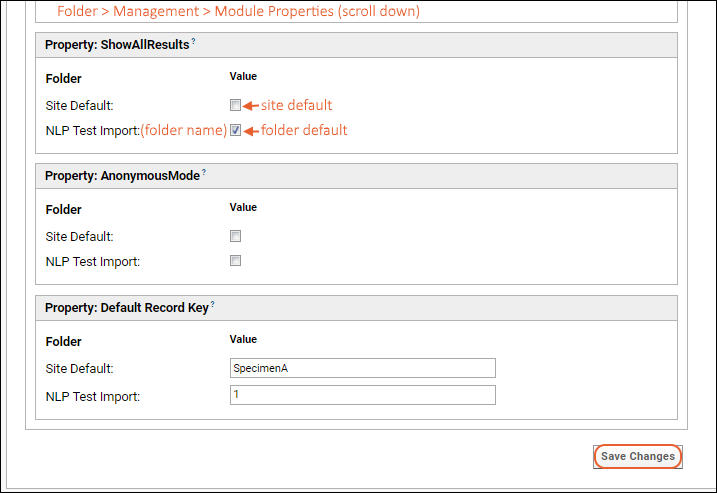

Setting Module Properties

Module properties are provided for customizing the behavior of the abstraction workflow process, particularly the experience for abstraction reviewers. Module properties, also used in configuring NLP engines, can have both a site default setting and an overriding project level setting as needed.

- ShowAllResults: Check the box to show all sets of selected values to reviewers. When unchecked, only the first set of results is shown.

- AnonymousMode: Check to show reviewers the column of abstracted information without the userID of the individual abstractor. When unchecked, the name of the abstractor is shown.

- Default Record Key: The default grouping name to use in the UI for signaling which record (e.g. specimen or finding) the field value relates to. Provide the name your users will expect to give to subtables. Only applicable when the groupings level is at 'recordkey'. If no value is specified, the default is "SpecimenA" but other values like "1" might be used instead.

- To set module properties, select (Admin) > Folder > Management and click the Module Properties tab.

- Scroll down to see and set the properties relevant to the abstraction review process.
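
The interaction between site defaults, project-level overrides, and the reviewer-facing properties can be illustrated with a short Python sketch. It does not reflect how LabKey Server stores or resolves module properties: the helper names are hypothetical, the default values for ShowAllResults and AnonymousMode are assumed, and the "Abstractor N" placeholder header is invented for the example. Only the "SpecimenA" default comes from the description above.

```python
from collections import ChainMap

# Assumed site-level defaults, used for illustration only.
SITE_DEFAULTS = {
    "ShowAllResults": False,
    "AnonymousMode": False,
    "Default Record Key": "SpecimenA",
}


def effective_properties(site_defaults, project_overrides):
    """Project-level settings take precedence over site defaults."""
    return dict(ChainMap(project_overrides, site_defaults))


def reviewer_columns(results, props):
    """Build (header, values) columns for a reviewer.

    `results` is a list of (abstractor_name, values) pairs in display order.
    """
    # ShowAllResults off: only the first set of results is shown.
    shown = results if props["ShowAllResults"] else results[:1]
    columns = []
    for index, (abstractor, values) in enumerate(shown, start=1):
        # AnonymousMode on: hide who produced each set of results.
        header = f"Abstractor {index}" if props["AnonymousMode"] else abstractor
        columns.append((header, values))
    return columns
```

With a project override of `{"AnonymousMode": True}` and everything else left at the assumed site defaults, a reviewer would see a single, unattributed column of results.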

Developer Note: Retrieving Approved Data via API

The client API can be used to retrieve information about imported documents and results. However, the task status is not stored directly; it is calculated at render time when the status is displayed. When querying for the "status" of a document, such as "Ready For Review" or "Approved," the reportId must be provided in addition to the taskKey. For example, a query like the following will return the expected calculated status value:

SELECT reportId, taskKey FROM Report WHERE ReportId = [remainder of the query]
