You can include a "polling schedule" to check the source database for new data and automatically run the ETL process when new data is found. Either specify a time interval, or use a full cron expression to schedule the frequency with which your ETL should run. When choosing a schedule for running ETLs, consider the timing of other processes, like automated backups, which could cause conflicts with running your ETL.

Once an ETL contains a <schedule> element, the running of the ETL on that schedule can be enabled and disabled. An ETL containing a schedule may also be run on demand when needed.

Define Schedule for an ETL

The details of the schedule and whether the ETL runs on that schedule are defined independently.

In the XML definition of the ETL, you can include a schedule expression following one of the

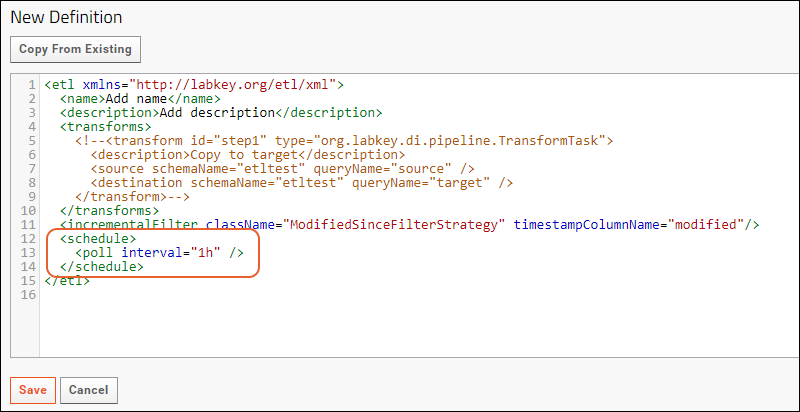

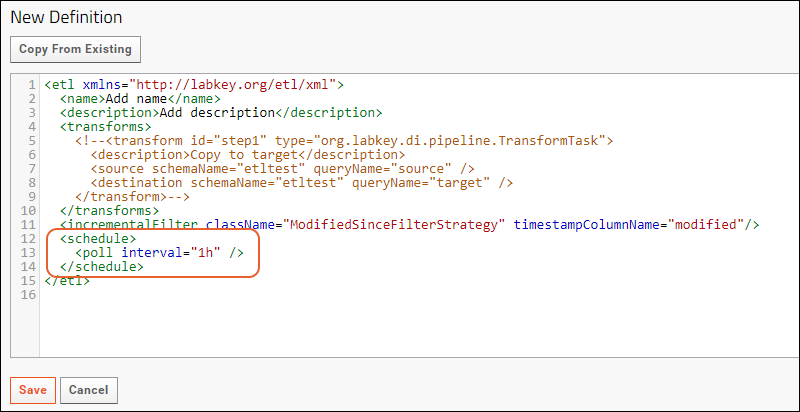

patterns listed below. If you are defining your ETL in a module, include the <schedule> element in the XML file. If you are defining your ETL in the UI, use this process:

- Select > Folder > Management. Click the ETLs tab.

- Click (Insert new row).

- The placeholder ETL shows where the <schedule> element is placed (and includes a "1h" default schedule).

- Customize the ETL definition in the XML panel and click Save.
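As a rough illustration of where the schedule sits, the sketch below shows a complete ETL definition with a one-hour polling schedule. The namespace, ETL name, transform id, and the schema and query names are assumptions made for illustration; prefer the values from the placeholder ETL or from your own module over copying these.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Illustrative sketch only: the name, transform id, and schema/query names are hypothetical. -->
<etl xmlns="http://labkey.org/etl/xml">
    <name>Example scheduled ETL</name>
    <description>Copies rows from a source query to a target table.</description>
    <transforms>
        <transform id="step1">
            <source schemaName="exampleSource" queryName="SourceQuery"/>
            <destination schemaName="exampleTarget" queryName="TargetTable"/>
        </transform>
    </transforms>
    <!-- The schedule element defines when the ETL runs once scheduling is enabled. -->
    <schedule>
        <poll interval="1h"/> <!-- run every hour -->
    </schedule>
</etl>
```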

Schedule Syntax Examples

You can specify a time interval (poll), or use a full cron expression to schedule the frequency or timing of running your ETL. Cron expressions consist of six or seven space-separated strings for the seconds, minutes, hours, day-of-month, month, day-of-week, and optional year, in that order. The wildcard '*' indicates every valid value. The character '?' is used in the day-of-month or day-of-week field to mean 'no specific value', i.e., when the other of the two fields is used to define the days on which to run the job.
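To make the field order concrete, here is a small annotated sketch of a six-field expression with no year. The weekday schedule is an assumed example, not one taken from the list below, and it relies on standard Quartz range syntax (MON-FRI).

```xml
<!--
  Field:       seconds  minutes  hours  day-of-month  month  day-of-week
  Expression:  0        0        6      ?             *      MON-FRI
-->
<schedule><cron expression="0 0 6 ? * MON-FRI"/></schedule> <!-- run at 6:00 am, Monday through Friday -->
```

Because the days are chosen in the day-of-week field, the day-of-month field is set to '?'.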

To assist you, use a builder for the Quartz cron format. One is available here:

https://www.freeformatter.com/cron-expression-generator-quartz.html.

More detail about cron syntax is available on the Quartz site:

The following examples illustrate the flexibility you can gain from these options. It is good practice to include a plain text comment clarifying the behavior of the schedule you set, particularly for cron expressions.

Interval - 1 Hour

...

<schedule><poll interval="1h"></poll></schedule> <!-- run every hour -->

...

Interval - 5 Minutes

...

<schedule><poll interval="5m" /></schedule> <!-- run every 5 minutes -->

...

Cron - Every hour on the hour

...

<schedule><cron expression="0 0 * ? * *" /></schedule> <!-- run every hour on the hour -->

...

Cron - Daily at Midnight

...

<schedule><cron expression="0 0 0 * * ?" /></schedule> <!-- run at midnight every day -->

...

Cron - Daily at 10:15am

...

<schedule><cron expression="0 15 10 ? * *"/></schedule> <!-- run at 10:15 every day -->

...

Cron - Every Tuesday and Thursday at 3:30 pm

...

<schedule><cron expression="0 30 15 ? * TUE,THU *"/></schedule> <!-- run on Tuesdays and Thursdays at 3:30 pm -->

...

Enable/Disable Running an ETL on a Schedule

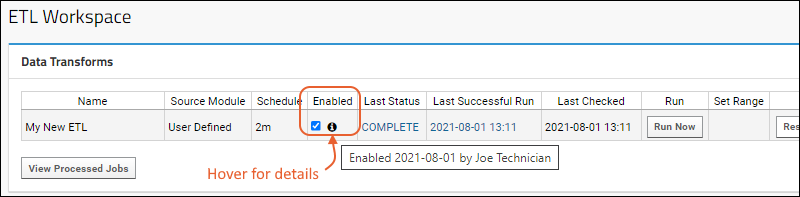

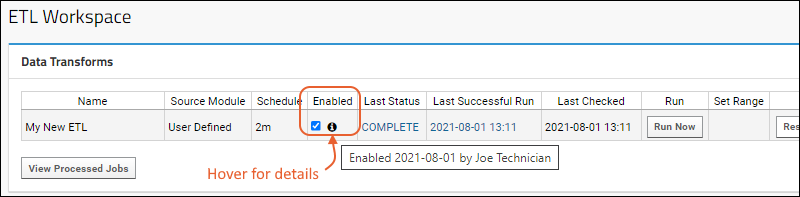

To enable the running of an ETL on its defined schedule, use the checkbox in the user interface, available in the Data Transforms web part or by accessing the module directly:

- Select > Go To Module > Data Integration.

- Check the box in the Enabled column to run that ETL on the schedule defined for it.

- The ETL will now run on schedule as the user who checked the box. To change this ownership later, uncheck and recheck the checkbox as the new user. Learn more in this topic.

- When unchecked (the default), the ETL will not be run on its schedule.

- You can still manually run ETLs whose schedules are not enabled.

- You can also disable scheduled ETLs by editing the definition to remove the <schedule> element entirely.

Learn more about enabling and disabling ETLs in this topic:

ETL: User Interface

Scheduling and Sequencing

If ETLs must run in a particular order, it is recommended to put them as multiple steps in another ETL to ensure the order of execution. This can be done in either of the following ways:

It can be tempting to use scheduling to make ETLs run in a certain sequence. However, if checking the source database involves a long-running query, or if many ETLs are scheduled for the same time, there can be a delay between the scheduled time and the time the ETL job is placed in the pipeline queue, depending on how quickly the database responds. As a result, closely scheduled ETLs may execute in an order that does not match their scheduled order.
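As a minimal sketch of the multi-step approach, the definition below places two dependent operations in one ETL as consecutive transform steps, so only one schedule is needed. The namespace, ETL name, transform ids, and all schema and query names are hypothetical and shown only to illustrate the structure.

```xml
<?xml version="1.0" encoding="UTF-8"?>
<!-- Illustrative sketch only: all names below are hypothetical. -->
<etl xmlns="http://labkey.org/etl/xml">
    <name>Example ordered ETL</name>
    <transforms>
        <!-- Steps are listed in the order they should run. -->
        <transform id="step1">
            <source schemaName="exampleSource" queryName="RawData"/>
            <destination schemaName="exampleStaging" queryName="StagedData"/>
        </transform>
        <!-- The second step reads what the first step wrote. -->
        <transform id="step2">
            <source schemaName="exampleStaging" queryName="StagedData"/>
            <destination schemaName="exampleTarget" queryName="FinalData"/>
        </transform>
    </transforms>
    <schedule><poll interval="1h"/></schedule> <!-- one schedule for the whole sequence -->
</etl>
```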

Troubleshoot Unsupported or Invalid Schedule Configuration

An ETL schedule must be both valid and supported by the server. For example, you CANNOT include two cron expressions separated by a comma, as in this attempt to run on both the second and fourth Mondays:

<cron expression="0 30 15 ? * MON#2, 0 30 15 ? * MON#4"/>

If an invalid schedule is configured, the ETL will be unable to run. You will see an error message when saving, but pay close attention: the ETL is still saved even with a bad schedule configuration.

If the schedule is not corrected before the next server restart, the server itself may fail to restart with exception error messages similar to:

org.labkey.api.util.ConfigurationException: Based on configured schedule, the given trigger 'org.labkey.di.pipeline.ETLManager.222/{DataIntegration}/User_Defined_EtlDefId_36' will never fire.

...

org.quartz.SchedulerException: Based on configured schedule, the given trigger 'org.labkey.di.pipeline.ETLManager.222/{DataIntegration}/User_Defined_EtlDefId_36' will never fire.

Use the details in the log to locate and fix the schedule for the ETL.

Related Topics