This topic covers logging and error handling options for ETLs.

Log Files

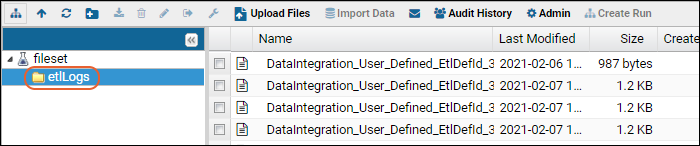

Messages and errors from an ETL job are written to a log file named for that job and saved in the File Repository, available via the menu path Go To Module > FileContent. In the File Repository, individual log files are located in the folder named etlLogs.

The absolute paths to these logs follow the pattern below. (ETLs defined in the user interface have an ETLNAME of "DataIntegration_User_Defined_EtlDefID_#".)

<LABKEY_HOME>/files/PROJECT/FOLDER_PATH/@files/etlLogs/ETLNAME_DATE.etl.log

for example:

C:\labkey\labkey\files\MyProject\MyFolder\@files\etlLogs\myetl_2015-07-06_15-04-27.etl.log
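The path pattern above can be sketched in Python, for example when a script needs to locate or sort log files outside the server. This is a minimal sketch, not part of LabKey: the function names etl_log_path and parse_etl_log_name are hypothetical, and the timestamp format is an assumption inferred from the single example file name shown above.

```python
from datetime import datetime
from pathlib import PurePosixPath, PureWindowsPath

# Assumption: inferred from the example "myetl_2015-07-06_15-04-27.etl.log".
LOG_TIMESTAMP_FORMAT = "%Y-%m-%d_%H-%M-%S"
LOG_SUFFIX = ".etl.log"
TIMESTAMP_LENGTH = 19  # length of "2015-07-06_15-04-27"


def etl_log_path(labkey_home, project, folder_path, etl_name, started, windows=False):
    """Build a log path following
    <LABKEY_HOME>/files/PROJECT/FOLDER_PATH/@files/etlLogs/ETLNAME_DATE.etl.log
    """
    path_cls = PureWindowsPath if windows else PurePosixPath
    file_name = f"{etl_name}_{started.strftime(LOG_TIMESTAMP_FORMAT)}{LOG_SUFFIX}"
    return path_cls(labkey_home, "files", project, folder_path,
                    "@files", "etlLogs", file_name)


def parse_etl_log_name(file_name):
    """Split 'ETLNAME_DATE.etl.log' into (etl_name, datetime).

    The timestamp is read from the right, so ETL names that contain
    underscores (such as the user-defined names) are handled.
    """
    if not file_name.endswith(LOG_SUFFIX):
        raise ValueError(f"not an ETL log file name: {file_name!r}")
    stem = file_name[:-len(LOG_SUFFIX)]
    if len(stem) < TIMESTAMP_LENGTH + 2 or stem[-(TIMESTAMP_LENGTH + 1)] != "_":
        raise ValueError(f"unexpected ETL log file name: {file_name!r}")
    etl_name = stem[:-(TIMESTAMP_LENGTH + 1)]
    started = datetime.strptime(stem[-TIMESTAMP_LENGTH:], LOG_TIMESTAMP_FORMAT)
    return etl_name, started


if __name__ == "__main__":
    path = etl_log_path(r"C:\labkey\labkey", "MyProject", "MyFolder",
                        "myetl", datetime(2015, 7, 6, 15, 4, 27), windows=True)
    print(path)
    print(parse_etl_log_name(path.name))
```

Pure path classes are used so the sketch only formats strings and never touches the file system; verify the result against the File Path value the server reports for a real job.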

Attempted and completed jobs, along with their log locations, are recorded in the table dataIntegration.TransformRun. For details on this table, see ETL: User Interface.

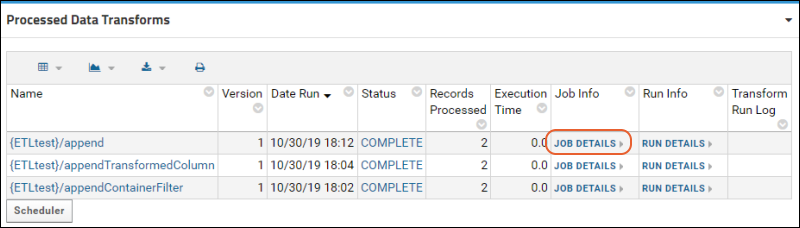

Log locations are also available from the Data Transform Jobs web part (named Processed Data Transforms by default). For the ETL job in question, click Job Details. File Path shows the log location.

ETL processes check for work (= new data in the source) before running a job. Log files are only created when there is work.

If a job runs after checking for work, errors and exceptions throw a PipelineJobException. The UI shows only the error message; the log captures the stack trace.

XSD/XML-related errors are written to the labkey.log file, located at <CATALINA_HOME>/logs/labkey.log.

DataIntegration Columns

To record a connection between a log entry and rows of data in the target table, add the 'di' columns listed here to your target table.

Error Handling

If there were errors during the transform step of the ETL process, you will see the latest error in the Transform Run Log column.

- An error on any transform step within a job aborts the entire job. “Success” in the log is only reported if all steps were successful with no error.

- If the number of steps in a given ETL process has changed since the first time it was run in a given environment, the log will contain a number of DEBUG messages of the form: “Wrong number of steps in existing protocol”. This is an informational message and does not indicate anything was wrong with the job.

- Filter Strategy errors. A “Data Truncation” error may mean that the XML filename is too long. The current limit is that module name length + filename length - 1 must be <= 100 characters.

- Stored Procedure errors. “Print” statements in the procedure appear as DEBUG messages in the log. Procedures should return 0 on successful completion. A return code > 0 is an error and aborts the job.

- Known issue: When the @filterRunId parameter is specified in a stored procedure, a default value must be set. Use NULL or -1 as the default.
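The filename length limit from the Filter Strategy item above can be checked before deploying a module. The following is a minimal sketch of that arithmetic only; the function names are hypothetical, and whether the filename is counted with or without its extension is an assumption to verify against your server.

```python
# Limit as stated above:
#   module name length + filename length - 1 <= 100
MAX_COMBINED_LENGTH = 100


def combined_length(module_name, xml_filename):
    """Return module name length + filename length - 1."""
    return len(module_name) + len(xml_filename) - 1


def fits_length_limit(module_name, xml_filename):
    """True if the pair stays within the stated 100-character limit.

    Assumption: xml_filename is counted exactly as passed in; this sketch
    does not add or strip a ".xml" extension.
    """
    return combined_length(module_name, xml_filename) <= MAX_COMBINED_LENGTH


if __name__ == "__main__":
    # Hypothetical module and ETL file names, for illustration only.
    for module, filename in [("myModule", "loadDemographics"),
                             ("m" * 60, "f" * 60)]:
        status = "ok" if fits_length_limit(module, filename) else "too long"
        print(f"{combined_length(module, filename):3d} chars: {status}")
```

A check like this can run as part of a build step, so that an overlong name is caught before it surfaces as a “Data Truncation” error at run time.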

Email Notifications

Administrators can configure users to be notified by email in case of an ETL job failure by setting up pipeline job notifications.

Learn more in this topic:

Related Topics

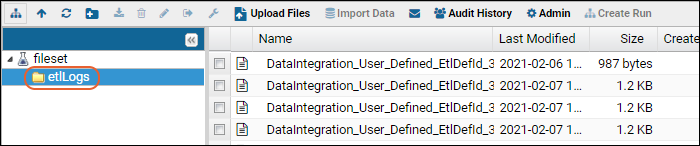


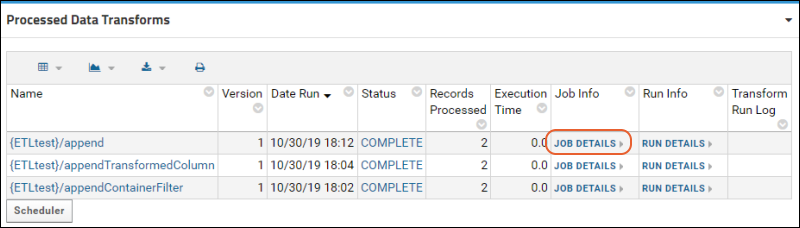
