Study reload is used when you want to refresh study data, and is particularly useful when data is updated in another data source and brought into LabKey Server for analysis. Rather than updating each dataset individually, reloading a study in its entirety will streamline the process.

For example, if the database of record is outside LabKey Server, a script could automatically generate TSVs to be reloaded into the study every night. Study reload can simplify the process of managing data and ensure researchers see daily updates without forcing migration of existing storage or data collection frameworks.
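As a rough illustration of the nightly export idea, the sketch below writes records to a tab-separated file with a header row using only the Python standard library. The function name `write_dataset_tsv`, the file name, and the `Date` and `Weight` columns are hypothetical; how the rows are fetched from the database of record is left out, and nothing here calls a LabKey API.

```python
import csv
import tempfile
from pathlib import Path


def write_dataset_tsv(rows, columns, path):
    """Write rows (a list of dicts) to a tab-separated file with a header row.

    Hypothetical helper: `columns` fixes the header order so the file keeps
    the same layout from one night to the next.
    """
    path = Path(path)
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(
            handle, fieldnames=columns, delimiter="\t", lineterminator="\n"
        )
        writer.writeheader()
        writer.writerows(rows)
    return path


if __name__ == "__main__":
    # Demo with made-up data, written to a temporary directory.
    demo_rows = [{"MouseId": "M1", "Date": "2008-01-02", "Weight": 21.5}]
    out = write_dataset_tsv(
        demo_rows,
        ["MouseId", "Date", "Weight"],
        Path(tempfile.mkdtemp()) / "datasets" / "dataset5007.tsv",
    )
    print(out.read_text(encoding="utf-8"))
```

A scheduler such as cron could run a script like this before the reload is triggered; the scheduling itself is outside the scope of this sketch.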

Caution: Reloading a study will replace existing data with the data contained in the imported archive.

Manual Reloading

To manually reload a study, you need an unzipped folder archive containing the study-related .xml files and directories, placed in the pipeline root directory. Learn more about folder archives and study objects in this topic:

Export a Study.

Export for Manual Reloading

- Navigate to the study you want to refresh.

- On the Manage tab, click Export Study.

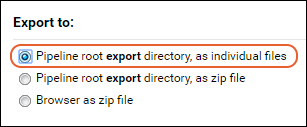

- Choose the objects to export and under Export to:, select "Pipeline root export directory, as individual files."

- The files will be exported unzipped to the correct location from which you can refresh.

Explore the exported archive format in the export directory of your file browser. You can find the dataset and other study files in the subfolders. To locate these files on disk, determine the pipeline root for the study. Make changes as needed.

Update Datasets

In the unzipped archive directory, you will find the exported datasets as TSV (tab-separated values) text files in the datasets folder. They are named by ID number, so for example, a dataset named "Lab Results" that was originally imported from an Excel file might be exported as "dataset5007.tsv". If you have a new Excel spreadsheet, "Lab_Results.xlsx", convert the data to a tab-separated format and replace the contents of dataset5007.tsv with the new data. Make sure the column headers and tab separation are preserved, or the reload will fail.
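Because a changed header or a wrong delimiter causes the reload to fail, it can help to check the replacement file before overwriting the exported one. The following is a minimal sketch, assuming the new data has already been converted to TSV; the helper names `read_header` and `replace_dataset` are hypothetical and this is not part of LabKey Server.

```python
import csv
import shutil
from pathlib import Path


def read_header(path):
    """Return the column names from the first row of a tab-separated file."""
    with Path(path).open(newline="", encoding="utf-8") as handle:
        return next(csv.reader(handle, delimiter="\t"), [])


def replace_dataset(new_tsv, exported_tsv):
    """Overwrite exported_tsv with new_tsv only if the headers match exactly.

    A file saved with commas instead of tabs parses as a single column,
    so it is rejected by the same comparison.
    """
    old_header = read_header(exported_tsv)
    new_header = read_header(new_tsv)
    if old_header != new_header:
        raise ValueError(
            f"Header mismatch: expected {old_header}, got {new_header}"
        )
    shutil.copyfile(new_tsv, exported_tsv)
```

For example, `replace_dataset("Lab_Results.tsv", "datasets/dataset5007.tsv")` would copy the file only when both header rows are identical, where "Lab_Results.tsv" is an assumed name for the converted spreadsheet.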

Add New Datasets

If you are adding new datasets to the study:

- First ensure that each Excel and TSV data file includes the same subject and time identifying columns as the rest of your study.

- Place the new Excel or TSV files into the /export/study/datasets directory directly alongside any existing exported datasets.

- Delete the file "XX.dataset" (the XX in this filename will be the first word in the name of your folder) from the directory.

- Manually edit both the "datasets_manifest.xml" and "datasets_metadata.xml" files to add the details for your new dataset(s); otherwise the system will infer information for all datasets as described below.

- Reload the study.
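The file-handling steps above can be sketched as a small script. This is only an illustration under stated assumptions: the helper name `stage_new_datasets` is hypothetical, the datasets directory is passed in by the caller, and any file ending in ".dataset" in that directory is treated as the "XX.dataset" file. It does not edit "datasets_manifest.xml" or "datasets_metadata.xml", and it does not trigger the reload.

```python
import shutil
from pathlib import Path

# File types the topic says can be supplied as dataset files.
ALLOWED_SUFFIXES = {".tsv", ".xls", ".xlsx"}


def stage_new_datasets(new_files, datasets_dir):
    """Copy new data files next to the exported datasets and delete *.dataset.

    Returns (copied_paths, removed_names) so the caller can log what changed.
    """
    datasets_dir = Path(datasets_dir)
    copied = []
    for source in map(Path, new_files):
        if source.suffix.lower() not in ALLOWED_SUFFIXES:
            raise ValueError(f"Unsupported file type: {source.name}")
        copied.append(Path(shutil.copy2(source, datasets_dir / source.name)))
    removed = []
    for stale in datasets_dir.glob("*.dataset"):
        stale.unlink()
        removed.append(stale.name)
    return copied, removed
```

Checking the subject and time columns of each new file is still a manual step in this sketch.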

Reload from Pipeline

- In your study, select (Admin) > Go To Module > FileContent.

- You can also use the Manage tab, click Reload Study, and then click Use Pipeline.

- Locate the "folder.xml" file and select it.

- Click Import Data and confirm Import Folder is selected.

- Click Import.

- Select import options if desired.

- Click Start Import.

Study Creation via Reload

You can use the manual reload mechanism to populate a new empty study by moving or creating the same folder archive structure in the pipeline root of another study folder. This mechanism avoids the manual process of creating numerous individual datasets.

To get started, export an existing study and copy the folders and structures to the new location you want to use. Edit individual files as needed to describe your study. When you reload the study following the same steps as above, it will create the new datasets and other structure from scratch. For a tutorial, use the topic:

Tutorial: Inferring Datasets from Excel and TSV Files.

Inferring New Datasets and Lists

Upon reloading, the server will create new datasets for any that don't exist, and infer column names and data types for both datasets and lists, according to the following rules.

- Datasets and lists can be provided as Excel files or as TSV files.

- The target study must already exist and have the same 'timepoint' style, either Date-based or Visit-based, as the incoming folder archive.

- If lists.xml or datasets_metadata.xml is present in the incoming folder archive, the server will use the column definitions therein to add columns if they are not already present.

- If lists.xml or datasets_metadata.xml is not present, the server will infer new column names and column types from the incoming data files.

- The datasets/lists must be located in the archive’s datasets and lists subdirectories. The relative path to these directories is set in the study.xml file. For example, the following study.xml file locates the datasets directory in /myDatasets and the lists directory in /myLists.

study.xml

    <study xmlns="http://labkey.org/study/xml" label="Study Reload" timepointType="DATE" subjectNounSingular="Mouse" subjectNounPlural="Mice" subjectColumnName="MouseId" startDate="2008-01-01-08:00" securityType="ADVANCED_WRITE">
        <cohorts type="AUTOMATIC" mode="SIMPLE" datasetId="5008" datasetProperty="Group"/>
        <datasets dir="myDatasets" />
        <lists dir="myLists" />
    </study>

- When inferring new columns, column names are based on the first row of the file, and the column types are inferred from values in the first 5 rows of data.

- LabKey Server decides on the target dataset or list based on the name of the incoming file. For example, if a file named "DatasetA.xls" is present, the server will update an existing dataset "DatasetA", or create a new dataset called "DatasetA". Using the same naming rules, the server will update (or add) Lists.

root
    folder.xml
    study
        study.xml - A simplified metadata description of the study.
        myDatasets
            DatasetA.tsv
            DatasetB.tsv
            Demographics.xlsx
        myLists
            ListA.tsv
            ListB.xlsx
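To preview which datasets and lists an archive laid out this way would target, a script can read the dir attributes from study.xml and take each data file's name without its extension. The sketch below is a local illustration of the naming rule described above, not the server's implementation; the function name `archive_targets` is hypothetical, and the directories are assumed to be relative to the folder containing study.xml.

```python
import xml.etree.ElementTree as ET
from pathlib import Path

# Namespace used by the study.xml example in this topic.
STUDY_NS = {"s": "http://labkey.org/study/xml"}
DATA_SUFFIXES = {".tsv", ".xls", ".xlsx"}


def archive_targets(study_dir):
    """Map 'datasets' and 'lists' to the names implied by the data files.

    The target name is the file name without its extension, for example
    "DatasetA.tsv" -> "DatasetA".
    """
    study_dir = Path(study_dir)
    root = ET.parse(study_dir / "study.xml").getroot()
    targets = {}
    for kind in ("datasets", "lists"):
        element = root.find(f"s:{kind}", STUDY_NS)
        directory = study_dir / element.get("dir", "") if element is not None else None
        if directory is None or not directory.is_dir():
            targets[kind] = []
            continue
        targets[kind] = sorted(
            p.stem for p in directory.iterdir()
            if p.is_file() and p.suffix.lower() in DATA_SUFFIXES
        )
    return targets
```

Run against the layout above, this would list DatasetA, DatasetB, and Demographics as datasets, and ListA and ListB as lists.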

Automated Study Reload: File Watchers (Premium Feature)

Premium Resource Available

Subscribers to premium editions of LabKey Server can enable automated reload either of individual datasets from files or of the entire study from a folder archive.

Learn more in this topic:

Learn more about premium editions

Related Topics